Lecture 7 - April 22, 2019

Fei-Fei Li & Justin Johnson & Serena Yeung - Lecture 7 - April 22, 2019

Administrative: Project Proposal
- Due tomorrow, 4/24 on GradeScope
- 1 person per group needs to submit, but tag all group members

Administrative: Alternate Midterm
- See Piazza for form to request alternate midterm time or other midterm accommodations
- Alternate midterm requests due Thursday!

Administrative: A2
- A2 is out, due Wednesday 5/1
- We recommend using Google Cloud for the assignment, especially if your local machine uses Windows

Where we are now... Computational graphs
[Figure: computational graph. x and W feed a * node that produces s (scores), which passes through a hinge loss; W also feeds a regularization term R, and the two are added (+) to give the loss L.]

Where we are now... Neural Networks
- Linear score function
- 2-layer Neural Network
[Figure: x (3072) -> W1 -> h (100) -> W2 -> s (10)]
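The slide's equations did not survive extraction, so the following is only a sketch of the forward pass the figure suggests. It assumes the hidden layer uses a max(0, ·) nonlinearity and that x is a flattened 32x32x3 image (32 * 32 * 3 = 3072); the random weights and the 0.01 init scale are hypothetical stand-ins.

```python
import numpy as np

rng = np.random.default_rng(0)

# Dimensions read off the slide: 3072 inputs, 100 hidden units, 10 class scores.
D, H, C = 3072, 100, 10

x = rng.standard_normal(D)               # stand-in for one flattened 32x32x3 image
W1 = 0.01 * rng.standard_normal((H, D))  # first-layer weights (hypothetical init scale)
W2 = 0.01 * rng.standard_normal((C, H))  # second-layer weights (hypothetical init scale)

h = np.maximum(0, W1 @ x)  # hidden activations; max(0, .) is an assumed nonlinearity
s = W2 @ h                 # class scores

print(h.shape, s.shape)
```

Biases are omitted to keep the shapes easy to follow.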

Where we are now... Convolutional Neural Networks
Illustration of LeCun et al. 1998 from CS231n 2017 Lecture 1

Where we are now... Convolutional Layer
- 32x32x3 image
- 5x5x3 filter: convolve (slide) over all spatial locations
- Result: a 28x28x1 activation map

Where we are now... Convolutional Layer
For example, if we had 6 5x5 filters, we’ll get 6 separate activation maps (32x32x3 image -> Convolution Layer -> 28x28x6 activation maps).
We stack these up to get a “new image” of size 28x28x6!
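The shapes on the two convolution slides can be checked with a deliberately naive sketch. It assumes stride 1 and no padding (which is what turns 32 into 32 - 5 + 1 = 28), omits biases, and follows the deep-learning convention of not flipping the filter. The function name `conv_forward_naive` and the random data are illustrative only.

```python
import numpy as np

def conv_forward_naive(image, filters):
    """Slide K filters of size FxFxC over an HxWxC image (stride 1, no padding)."""
    H, W, C = image.shape
    K, F, _, _ = filters.shape
    out_h, out_w = H - F + 1, W - F + 1
    out = np.zeros((out_h, out_w, K))
    for k in range(K):
        for i in range(out_h):
            for j in range(out_w):
                # Dot product between the filter and the FxFxC patch under it.
                patch = image[i:i + F, j:j + F, :]
                out[i, j, k] = np.sum(patch * filters[k])
    return out

rng = np.random.default_rng(0)
image = rng.standard_normal((32, 32, 3))     # stand-in for a 32x32x3 image
filters = rng.standard_normal((6, 5, 5, 3))  # six 5x5x3 filters

activation_maps = conv_forward_naive(image, filters)
print(activation_maps.shape)  # (28, 28, 6)
```

Each filter contributes one 28x28 activation map, and stacking the six maps along the last axis gives the 28x28x6 "new image".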

Where we are now... Learning network parameters through optimization
Landscape image is CC0 1.0 public domain. Walking man image is CC0 1.0 public domain.

Where we are now... Mini-batch SGD
Loop:
1. Sample a batch of data
2. Forward prop it through the graph (network), get loss
3. Backprop to calculate the gradients
4. Update the parameters using the gradient
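The four steps of the loop can be sketched on a toy problem. Everything specific here is an assumption made for illustration: a linear model with a squared-error loss on synthetic data, a hand-derived gradient in place of backprop through a real network, and the chosen learning rate and batch size.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic regression data (hypothetical): y = X @ w_true + small noise.
N, D = 1000, 5
X = rng.standard_normal((N, D))
w_true = rng.standard_normal(D)
y = X @ w_true + 0.01 * rng.standard_normal(N)

w = np.zeros(D)
learning_rate, batch_size = 0.1, 32

for step in range(500):
    # 1. Sample a batch of data.
    idx = rng.choice(N, size=batch_size, replace=False)
    xb, yb = X[idx], y[idx]
    # 2. Forward prop it through the model, get the loss.
    residual = xb @ w - yb
    loss = 0.5 * np.mean(residual ** 2)
    # 3. Backprop: gradient of the loss with respect to w.
    grad = xb.T @ residual / batch_size
    # 4. Update the parameters using the gradient.
    w -= learning_rate * grad

print(loss)
```

On this toy problem the learned `w` ends up close to `w_true`; with a real network, steps 2 and 3 would run through the whole computational graph.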

Where we are now... Hardware + Software
- PyTorch
- TensorFlow

Next: Training Neural Networks

Overview
1. One time setup: activation functions, preprocessing, weight initialization, regularization, gradient checking
2. Training dynamics: babysitting the learning process, parameter updates, hyperparameter optimization
3. Evaluation: model ensembles, test-time augmentation

Part 1
- Activation Functions
- Data Preprocessing
- Weight Initialization
- Batch Normalization
- Babysitting the Learning Process
- Hyperparameter Optimization

Activation Functions

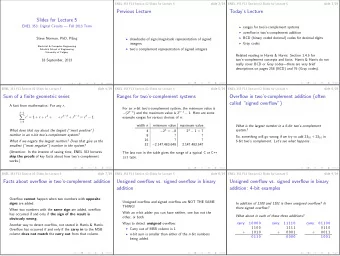

Activation Functions
- Sigmoid
- tanh
- ReLU
- Leaky ReLU
- Maxout
- ELU
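The formulas that accompanied these names were images and are missing from the text, so the definitions below are the commonly used forms rather than a copy of the slide. The default `alpha` values and the two-piece form of `maxout` are assumptions.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def tanh(x):
    return np.tanh(x)

def relu(x):
    return np.maximum(0.0, x)

def leaky_relu(x, alpha=0.01):
    # alpha is the small slope used for negative inputs (assumed default, 0 < alpha < 1).
    return np.maximum(alpha * x, x)

def elu(x, alpha=1.0):
    # x for x > 0, alpha * (exp(x) - 1) otherwise; minimum() avoids overflow in exp.
    return np.where(x > 0, x, alpha * np.expm1(np.minimum(x, 0.0)))

def maxout(x, W1, b1, W2, b2):
    # Elementwise max of two affine functions of the input (two-piece maxout).
    return np.maximum(W1 @ x + b1, W2 @ x + b2)

x = np.array([-2.0, -0.5, 0.0, 0.5, 2.0])
for fn in (sigmoid, tanh, relu, leaky_relu, elu):
    print(fn.__name__, fn(x))
```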

Activation Functions: Sigmoid
- Squashes numbers to range [0,1]
- Historically popular since it has a nice interpretation as a saturating “firing rate” of a neuron

Activation Functions: Sigmoid
- Squashes numbers to range [0,1]
- Historically popular since it has a nice interpretation as a saturating “firing rate” of a neuron
3 problems:
1. Saturated neurons “kill” the gradients

[Figure: input x passing through a sigmoid gate]
- What happens when x = -10?
- What happens when x = 0?
- What happens when x = 10?
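One way to answer the three questions is to evaluate the sigmoid's local gradient, sigmoid(x) * (1 - sigmoid(x)), at each point. This is a small numerical sketch; the helper names are illustrative.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_grad(x):
    # d/dx sigmoid(x) = sigmoid(x) * (1 - sigmoid(x))
    s = sigmoid(x)
    return s * (1.0 - s)

for x in (-10.0, 0.0, 10.0):
    print(f"x = {x:6.1f}  sigmoid = {sigmoid(x):.6f}  local gradient = {sigmoid_grad(x):.6f}")
```

The local gradient peaks at 0.25 for x = 0 and is roughly 4.5e-5 at x = -10 and x = 10, so almost nothing flows back through a saturated gate.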

Activation Functions: Sigmoid
- Squashes numbers to range [0,1]
- Historically popular since it has a nice interpretation as a saturating “firing rate” of a neuron
3 problems:
1. Saturated neurons “kill” the gradients
2. Sigmoid outputs are not zero-centered

Consider what happens when the input to a neuron is always positive...
What can we say about the gradients on w?

Consider what happens when the input to a neuron is always positive...
What can we say about the gradients on w? Always all positive or all negative :(
[Figure: the allowed gradient update directions force a zig zag path toward the hypothetical optimal w vector]

Consider what happens when the input to a neuron is always positive...
What can we say about the gradients on w? Always all positive or all negative :(
(For a single element! Minibatches help)
[Figure: the allowed gradient update directions force a zig zag path toward the hypothetical optimal w vector]
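The sign argument can be checked numerically. The sketch below assumes a single neuron f = sigmoid(w · x + b) and a scalar upstream gradient dL/df; by the chain rule dL/dw_i = (dL/df) * (df/dz) * x_i, so with every x_i positive all components share the sign of the upstream gradient. The function name and the random values are illustrative.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def grad_on_w(x, w, b, upstream):
    """Gradient of the loss w.r.t. w for f = sigmoid(w . x + b), given dL/df = upstream."""
    f = sigmoid(w @ x + b)
    local = f * (1.0 - f)        # df/dz, always positive for a sigmoid
    return upstream * local * x  # dL/dw_i = upstream * df/dz * x_i

rng = np.random.default_rng(0)
x = rng.uniform(0.1, 1.0, size=5)  # all-positive inputs, e.g. outputs of a previous sigmoid layer
w = rng.standard_normal(5)
b = 0.0

grad_pos = grad_on_w(x, w, b, upstream=+1.0)
grad_neg = grad_on_w(x, w, b, upstream=-1.0)
print(np.sign(grad_pos))
print(np.sign(grad_neg))
```

This holds for a single example; averaging over a minibatch mixes upstream gradients of different signs, which is why minibatches help.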

Activation Functions: Sigmoid
- Squashes numbers to range [0,1]
- Historically popular since it has a nice interpretation as a saturating “firing rate” of a neuron
3 problems:
1. Saturated neurons “kill” the gradients
2. Sigmoid outputs are not zero-centered
3. exp() is a bit compute expensive

Activation Functions: tanh(x) [LeCun et al., 1991]
- Squashes numbers to range [-1,1]
- zero centered (nice)
- still kills gradients when saturated :(
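Both tanh properties can be illustrated on a grid of inputs that is symmetric about zero (an assumption chosen to make the centering visible): the average tanh output is about 0 while the average sigmoid output is about 0.5, and the tanh gradient 1 - tanh(x)^2 still vanishes for large |x|.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def tanh_grad(x):
    # d/dx tanh(x) = 1 - tanh(x)^2
    return 1.0 - math.tanh(x) ** 2

# Inputs from -5.0 to 5.0 in steps of 0.1, symmetric about zero.
xs = [i / 10.0 for i in range(-50, 51)]

mean_tanh = sum(math.tanh(x) for x in xs) / len(xs)
mean_sigmoid = sum(sigmoid(x) for x in xs) / len(xs)

print("mean tanh output:   ", mean_tanh)
print("mean sigmoid output:", mean_sigmoid)
print("tanh gradient at x = 0: ", tanh_grad(0.0))
print("tanh gradient at x = 10:", tanh_grad(10.0))
```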

Activation Functions: ReLU (Rectified Linear Unit) [Krizhevsky et al., 2012]
- Computes f(x) = max(0,x)
- Does not saturate (in +region)
- Very computationally efficient
- Converges much faster than sigmoid/tanh in practice (e.g. 6x)

Activation Functions: ReLU (Rectified Linear Unit)
- Computes f(x) = max(0,x)
- Does not saturate (in +region)
- Very computationally efficient
- Converges much faster than sigmoid/tanh in practice (e.g. 6x)
- Not zero-centered output

Activation Functions: ReLU (Rectified Linear Unit)
- Computes f(x) = max(0,x)
- Does not saturate (in +region)
- Very computationally efficient
- Converges much faster than sigmoid/tanh in practice (e.g. 6x)
- Not zero-centered output
- An annoyance: hint: what is the gradient when x < 0?
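The hint can be explored with a short sketch of ReLU and its local gradient. Treating the gradient at exactly x = 0 as 0 is a convention assumed here, and the function names are illustrative.

```python
import numpy as np

def relu(x):
    return np.maximum(0.0, x)

def relu_grad(x):
    # 1 where x > 0, 0 elsewhere (the value at exactly x = 0 is a convention).
    return (x > 0).astype(x.dtype)

x = np.array([-10.0, -0.5, 0.5, 10.0])
print("relu:    ", relu(x))
print("gradient:", relu_grad(x))
```

For every negative input the local gradient is exactly zero, so no gradient flows back through the unit for that input, while positive inputs pass the gradient through unchanged.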
