Autocorrelation: When a regression model is fitted to time series

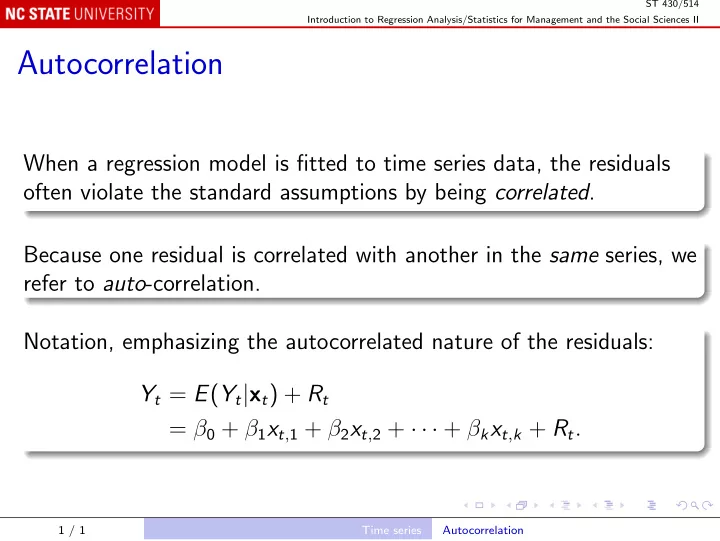

ST 430/514 Introduction to Regression Analysis/Statistics for Management and the Social Sciences II

Autocorrelation

When a regression model is fitted to time series data, the residuals often violate the standard assumptions by being correlated.

Because one residual is correlated with another in the same series, we refer to *auto*-correlation.

Notation, emphasizing the autocorrelated nature of the residuals:

$$Y_t = E(Y_t \mid x_t) + R_t = \beta_0 + \beta_1 x_{t,1} + \beta_2 x_{t,2} + \cdots + \beta_k x_{t,k} + R_t.$$

The correlation of $R_t$ with $R_{t-1}$ is called a lagged correlation, specifically the lag 1 correlation. More generally, the correlation of $R_t$ with $R_{t-m}$ is the lag $m$ correlation, $m > 0$.

Autocorrelation is usually strongest for small lags and decays to zero for large lags. Autocorrelation depends on the lag $m$, but often does not depend on $t$.
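The lag $m$ correlation can be computed directly by pairing each value with the value $m$ steps earlier. The following is a minimal sketch on a simulated series; the series `r`, the helper `lagCor`, and the value 0.6 are illustrative assumptions, not part of the course example. The hand-computed values differ slightly from those of `acf()`, which uses the overall mean and divides by the full series length.

```r
set.seed(1)
# Hypothetical residual series: simulated AR(1) with coefficient 0.6 (an assumption)
r <- as.numeric(arima.sim(model = list(ar = 0.6), n = 200))

# Lag-m sample correlation: pair R_t with R_{t-m} (lagCor is a hypothetical helper)
lagCor <- function(r, m) {
  n <- length(r)
  cor(r[(m + 1):n], r[1:(n - m)])
}

byHand  <- sapply(1:5, function(m) lagCor(r, m))
fromAcf <- acf(r, lag.max = 5, plot = FALSE)$acf[-1]  # drop the lag 0 value

# Side-by-side comparison for lags 1 to 5
round(cbind(lag = 1:5, byHand, fromAcf), 3)
```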

When the correlation of $R_t$ with $R_{t-m}$ does not depend on $t$, and in addition:

- the expected value $E(R_t)$ is constant;
- the variance $\operatorname{var}(R_t)$ is constant;

the residuals are said to be *stationary*.

Regression residuals have zero expected value, hence constant expected value, if the model is correctly specified. They also have constant variance, perhaps after the data have been transformed appropriately, or if weighted least squares has been used.

Correlogram

The graph of $\operatorname{corr}(R_t, R_{t-m})$ against lag $m$ is the correlogram, or autocorrelation function (ACF).

For the SALES35 example:

    sales <- read.table("Text/Exercises&Examples/SALES35.txt", header = TRUE)
    l <- lm(SALES ~ T, sales)
    acf(residuals(l))

The ACF is usually plotted including the lag 0 correlation, which is of course exactly 1. The blue lines indicate significance at $\alpha = .05$.
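The significance bounds that `acf()` draws by default can be reproduced by hand as $\pm z_{0.975} / \sqrt{n}$. The sketch below uses simulated white noise with a hypothetical length of 35 rather than the SALES35 residuals, so the flagged lags are illustrative only.

```r
set.seed(2)
n <- 35            # hypothetical series length (an assumption)
r <- rnorm(n)      # stand-in for regression residuals

a <- acf(r, plot = FALSE)

# Default plot bounds: +/- qnorm(0.975) / sqrt(number of observations used)
bound <- qnorm(0.975) / sqrt(a$n.used)
bound

# Lags (excluding lag 0) whose sample autocorrelation falls outside the bounds
which(abs(a$acf[-1]) > bound)
```

With many lags plotted, roughly 1 in 20 is expected to cross the bounds by chance even when there is no autocorrelation.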

Modeling Autocorrelation

Sometimes the ACF appears to decay exponentially:

$$\mathrm{ACF}(m) \approx \phi^m, \quad m \ge 0, \quad -1 < \phi < 1.$$

The first-order autoregressive model, AR(1), has this property; if

$$R_t = \phi R_{t-1} + \epsilon_t,$$

where $\epsilon_t$ has constant variance and is uncorrelated with $\epsilon_{t-m}$ for all $m > 0$, then

$$\operatorname{corr}(R_t, R_{t-m}) = \phi^m, \quad m > 0.$$
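One way to see why AR(1) gives $\phi^m$ is sketched below, under the added assumptions that $R_t$ is stationary and that $\epsilon_t$ is uncorrelated with earlier residuals $R_{t-m}$. The symbols $\gamma(m)$ and $\rho(m)$ are introduced here for the lag $m$ autocovariance and autocorrelation; they do not appear in the original slides.

```latex
\begin{align*}
\gamma(m) &= \operatorname{cov}(R_t, R_{t-m})
           = \operatorname{cov}(\phi R_{t-1} + \epsilon_t,\; R_{t-m}) \\
          &= \phi\,\operatorname{cov}(R_{t-1}, R_{t-m})
             + \operatorname{cov}(\epsilon_t, R_{t-m})
           = \phi\,\gamma(m-1), \qquad m > 0, \\
\rho(m)   &= \frac{\gamma(m)}{\gamma(0)}
           = \phi\,\rho(m-1) = \cdots = \phi^m \rho(0) = \phi^m .
\end{align*}
```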

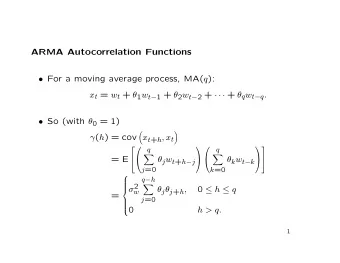

General autoregressive model, AR($p$):

$$R_t = \phi_1 R_{t-1} + \phi_2 R_{t-2} + \cdots + \phi_p R_{t-p} + \epsilon_t.$$

ACF decays exponentially, but not exactly as $\phi^m$ for any $\phi$.

Moving average models, MA($q$):

$$R_t = \epsilon_t + \theta_1 \epsilon_{t-1} + \theta_2 \epsilon_{t-2} + \cdots + \theta_q \epsilon_{t-q}.$$

ACF is zero for lag $m > q$.
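These ACF shapes can be checked numerically with `ARMAacf()` from the `stats` package, which returns the theoretical ACF for given coefficients. This is a sketch only: all coefficient values below are illustrative assumptions.

```r
# Theoretical ACFs for lags 0 to 5; coefficient values are illustrative assumptions
phi <- 0.6
ar1Acf <- ARMAacf(ar = phi, lag.max = 5)           # AR(1): should equal phi^m
ar2Acf <- ARMAacf(ar = c(0.5, 0.3), lag.max = 5)   # AR(2): decays, but not as phi^m
ma2Acf <- ARMAacf(ma = c(0.5, 0.3), lag.max = 5)   # MA(2): zero beyond lag 2

# Rows are models, columns are lags 0 to 5
round(rbind(AR1 = ar1Acf, phiPower = phi^(0:5), AR2 = ar2Acf, MA2 = ma2Acf), 3)
```

Comparing the `AR1` and `phiPower` rows illustrates the exact exponential decay, while the `MA2` row shows the cutoff after lag $q = 2$.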

Combined ARMA($p$, $q$) model:

$$R_t = \phi_1 R_{t-1} + \phi_2 R_{t-2} + \cdots + \phi_p R_{t-p} + \epsilon_t + \theta_1 \epsilon_{t-1} + \theta_2 \epsilon_{t-2} + \cdots + \theta_q \epsilon_{t-q}.$$

ACF again decays exponentially, but not exactly as $\phi^m$ for any $\phi$.

Regression with Autocorrelated Errors

To fit the regression model

$$Y_t = E(Y_t \mid x_t) + R_t$$

we must specify both the regression part

$$E(Y_t \mid x_t) = \beta_0 + \beta_1 x_{t,1} + \beta_2 x_{t,2} + \cdots + \beta_k x_{t,k}$$

and a model for the autocorrelation of $R_t$, say ARMA($p$, $q$) for specified $p$ and $q$.

Time series software fits both submodels simultaneously.

In SAS:

- `proc autoreg` can handle AR($p$) errors, but not ARMA($p$, $q$) for $q > 0$.
- `proc arima` can handle ARMA($p$, $q$).

In R, try AR(1) errors:

    ar1 <- arima(sales$SALES, order = c(1, 0, 0), xreg = sales$T)
    print(ar1)
    tsdiag(ar1)

The ARMA($p$, $q$) model is specified by `order = c(p, 0, q)`. The middle part of the order is `d`, for differencing.

Note that $\hat{\phi}$, the `ar1` coefficient, is significantly different from zero.

Note that the trend (coefficient of `T`) is similar to the original regression: 4.2959 versus 4.2956. Its reported standard error is higher: 0.1760 versus 0.1069.

The original, smaller, standard error is not credible, because it was calculated on the assumption that the residuals are not correlated.

The new standard error recognizes autocorrelation, and is more credible, but is still calculated on an assumption: that the residuals are AR(1).
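The same comparison can be sketched on simulated data, since the SALES35 file is not reproduced here. Everything about the data below (trend, AR(1) coefficient 0.6, noise level, the names `tt` and `y`) is a hypothetical stand-in; the approximate z statistics divide each estimate by its standard error from `vcov()`.

```r
set.seed(3)
n  <- 100
tt <- 1:n   # hypothetical time index, standing in for sales$T
# Simulated response: linear trend plus AR(1) errors (all values are assumptions)
y <- 10 + 4 * tt + as.numeric(arima.sim(model = list(ar = 0.6), n = n, sd = 5))

ols <- lm(y ~ tt)                                # ignores autocorrelation
fit <- arima(y, order = c(1, 0, 0), xreg = tt)   # regression with AR(1) errors

seOls   <- summary(ols)$coefficients["tt", "Std. Error"]
seArima <- sqrt(diag(vcov(fit)))

# Approximate z statistics: estimate divided by standard error
z <- coef(fit) / seArima
round(cbind(estimate = coef(fit), se = seArima, z = z), 3)

# Slope standard error under each model
c(olsSlopeSE = seOls, ar1SlopeSE = unname(seArima["tt"]))
```

With positively autocorrelated errors, the slope standard error from the AR(1) fit would typically come out larger than the least squares one, mirroring the pattern reported for the sales data.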

Which model to use?

The ACF of the sales residuals decays roughly exponentially, but it is also not significantly different from zero for $m > 1$, so the residuals could be MA(1).

    ma1 <- arima(sales$SALES, order = c(0, 0, 1), xreg = sales$T)
    print(ma1)
    tsdiag(ma1)

$\hat{\theta}_1$, the `ma1` coefficient, is also significantly different from zero. But the AIC is higher than for AR(1), so AR(1) is preferred.

Be systematic; use BIC to find a good model:

    P <- Q <- 0:3
    BICtable <- matrix(NA, length(P), length(Q))
    dimnames(BICtable) <- list(paste("p:", P), paste("q:", Q))
    for (p in P) for (q in Q) {
        apq <- arima(sales$SALES, order = c(p, 0, q), xreg = sales$T)
        BICtable[p+1, q+1] <- AIC(apq, k = log(nrow(sales)))
    }
    BICtable

As usual, use `k = log(nrow(...))` to get BIC instead of AIC. As in stepwise regression, minimizing AIC tends to overfit.

AR(1) gives the minimum BIC out of these choices.
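To make the role of `k` concrete, the sketch below recomputes AIC and BIC by hand from the log-likelihood of a fitted model. The data are simulated under assumed values (the names `tt`, `y` and all constants are hypothetical), so only the arithmetic carries over to the sales example.

```r
set.seed(4)
n  <- 100
tt <- 1:n   # hypothetical time index
y  <- 10 + 4 * tt + as.numeric(arima.sim(model = list(ar = 0.6), n = n, sd = 5))
fit <- arima(y, order = c(1, 0, 0), xreg = tt)

ll   <- logLik(fit)
nPar <- attr(ll, "df")   # number of estimated parameters, as counted by logLik()

# Both criteria are -2 * log-likelihood plus a penalty per parameter
aicByHand <- -2 * as.numeric(ll) + 2 * nPar
bicByHand <- -2 * as.numeric(ll) + log(n) * nPar

c(AIC = AIC(fit), byHand = aicByHand)
c(BIC = AIC(fit, k = log(n)), byHand = bicByHand)
```

Because $\log n > 2$ once $n \ge 8$, BIC penalizes each extra parameter more heavily than AIC, which is why it tends to select smaller models.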

Forecasting

    # Observed data, with room on the axes for the forecast period
    with(sales, plot(T, SALES, xlim = c(0, 40), ylim = c(0, 200)))
    # Fitted trend from the regression part of the AR(1) model
    ar1Fit <- coefficients(ar1)["intercept"] +
        coefficients(ar1)["sales$T"] * sales$T
    lines(sales$T, ar1Fit, lty = 2)
    # Forecasts for the next five time points
    newTimes <- 36:40
    p <- predict(ar1, n.ahead = length(newTimes), newxreg = newTimes)
    # Half-width of the 95% prediction limits
    pCL <- p$se * qnorm(.975)
    matlines(newTimes, p$pred + cbind(0, -pCL, pCL),
             col = c("red", "blue", "blue"), lty = c(2, 3, 3))
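The prediction limits widen with the forecast horizon. For AR(1) errors the $h$-step forecast error variance is $\sigma^2 (1 - \phi^{2h}) / (1 - \phi^2)$, and the sketch below compares that formula with the standard errors from `predict()`, which exclude the uncertainty in the estimated coefficients. The data are simulated under assumed values; `tt`, `y`, `h` and all constants are hypothetical.

```r
set.seed(5)
n  <- 100
tt <- 1:n   # hypothetical time index
y  <- 10 + 4 * tt + as.numeric(arima.sim(model = list(ar = 0.6), n = n, sd = 5))
fit <- arima(y, order = c(1, 0, 0), xreg = tt)

h <- 1:5    # forecast horizons
p <- predict(fit, n.ahead = length(h), newxreg = n + h)

# AR(1) forecast standard error from the estimated phi and innovation variance
phiHat    <- coef(fit)["ar1"]
seFormula <- sqrt(fit$sigma2 * (1 - phiHat^(2 * h)) / (1 - phiHat^2))

round(cbind(h, predictSE = as.numeric(p$se), formulaSE = unname(seFormula)), 3)

# 95% prediction limits, as in the plotting code
lower <- as.numeric(p$pred) - qnorm(0.975) * as.numeric(p$se)
upper <- as.numeric(p$pred) + qnorm(0.975) * as.numeric(p$se)
```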
