
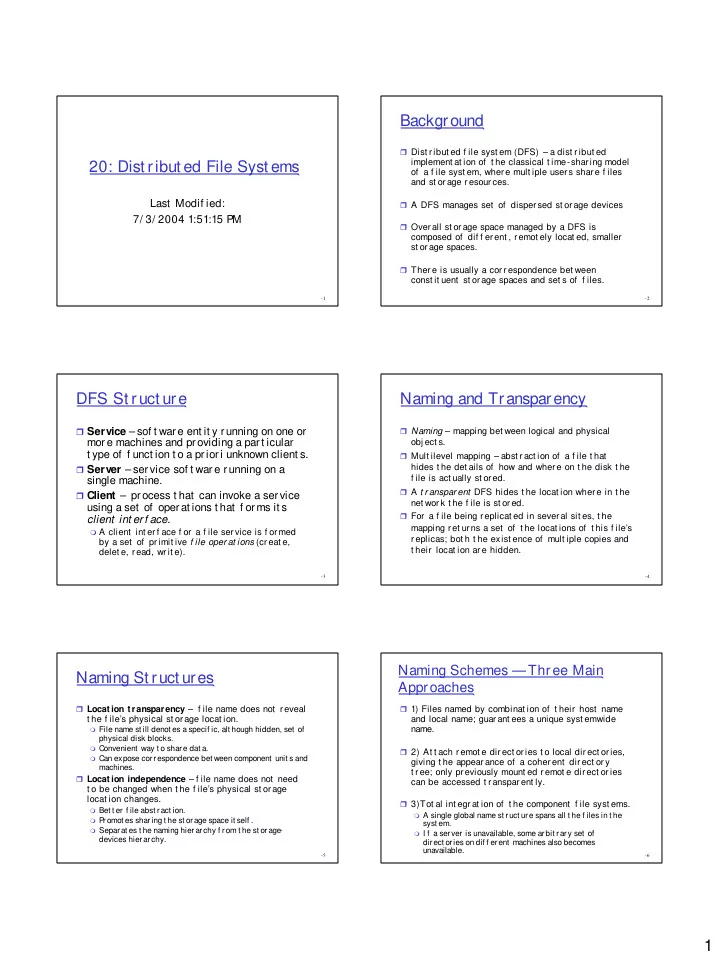

20: Distributed File Systems

Last Modified: 7/3/2004 1:51:15 PM


Background

Distributed file system (DFS) – a distributed implementation of the classical time-sharing model of a file system, where multiple users share files and storage resources.

A DFS manages a set of dispersed storage devices.

The overall storage space managed by a DFS is composed of different, remotely located, smaller storage spaces.

There is usually a correspondence between constituent storage spaces and sets of files.


DFS Structure

Service – software entity running on one or more machines and providing a particular type of function to a priori unknown clients.

Server – service software running on a single machine.

Client – process that can invoke a service using a set of operations that forms its client interface.

A client interface for a file service is formed by a set of primitive file operations (create, delete, read, write).
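The client interface described above can be sketched as a small abstract class. This is only an illustration under stated assumptions: the names `FileService` and `InMemoryFileServer`, the offset-based signatures, and the in-memory storage are hypothetical and do not come from any particular DFS.

```python
from abc import ABC, abstractmethod


class FileService(ABC):
    """Hypothetical client interface: the primitive file operations."""

    @abstractmethod
    def create(self, name): ...

    @abstractmethod
    def delete(self, name): ...

    @abstractmethod
    def read(self, name, offset, length): ...

    @abstractmethod
    def write(self, name, offset, data): ...


class InMemoryFileServer(FileService):
    """Stand-in for a server: service software running on one machine."""

    def __init__(self):
        self._files = {}  # file name -> bytearray holding the contents

    def create(self, name):
        if name in self._files:
            raise FileExistsError(name)
        self._files[name] = bytearray()

    def delete(self, name):
        del self._files[name]  # raises KeyError if the file does not exist

    def read(self, name, offset, length):
        return bytes(self._files[name][offset:offset + length])

    def write(self, name, offset, data):
        buf = self._files[name]
        if offset > len(buf):
            # Pad with zero bytes when writing past the current end.
            buf.extend(b"\x00" * (offset - len(buf)))
        buf[offset:offset + len(data)] = data
```

A client sees only the four operations; whether the object behind them is local or a stub forwarding requests to a remote machine is not visible through the interface.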


Naming and Transparency

Naming – mapping between logical and physical objects.

Multilevel mapping – abstraction of a file that hides the details of how and where on the disk the file is actually stored.

A transparent DFS hides the location where in the network the file is stored.

For a file replicated at several sites, the mapping returns a set of the locations of this file's replicas; both the existence of multiple copies and their locations are hidden.
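A minimal sketch of such a mapping, assuming a hypothetical `NameService` that stores replica sites per logical name and a hypothetical `TransparentClient` that consults it. Sites are modeled as plain dictionaries; a real DFS would contact remote servers instead.

```python
class NameService:
    """Maps a logical file name to the set of sites holding a replica."""

    def __init__(self):
        self._replicas = {}  # logical name -> set of site identifiers

    def register(self, name, site):
        self._replicas.setdefault(name, set()).add(site)

    def locate(self, name):
        # The mapping returns a set of locations, one per replica.
        return frozenset(self._replicas[name])


class TransparentClient:
    """Reads by logical name only; replica choice is hidden from the caller."""

    def __init__(self, names, sites):
        self._names = names
        self._sites = sites  # site identifier -> {logical name: contents}

    def read(self, name):
        # Try the replicas in a fixed order and return the first one found.
        for site in sorted(self._names.locate(name)):
            store = self._sites.get(site)
            if store is not None and name in store:
                return store[name]
        raise FileNotFoundError(name)
```

The caller of `read` never learns how many copies exist or which site served the request, which is the sense in which both facts are hidden.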


Naming Structures

Location transparency – file name does not reveal the file's physical storage location.

File name still denotes a specific, although hidden, set of physical disk blocks.

Convenient way to share data.

Can expose correspondence between component units and machines.

Location independence – file name does not need to be changed when the file's physical storage location changes.

Better file abstraction.

Promotes sharing the storage space itself.

Separates the naming hierarchy from the storage-devices hierarchy.
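Location independence can be illustrated with a table that binds each logical name to its current storage location. The sketch below is an assumption-laden toy: `LocationTable`, its method names, and the machine and path strings are all hypothetical.

```python
class LocationTable:
    """Keeps the naming hierarchy separate from where files are stored."""

    def __init__(self):
        self._where = {}  # logical name -> (machine, local path)

    def bind(self, name, machine, local_path):
        self._where[name] = (machine, local_path)

    def resolve(self, name):
        return self._where[name]

    def migrate(self, name, new_machine, new_local_path):
        # Only the binding changes; the logical name stays the same.
        if name not in self._where:
            raise KeyError(name)
        self._where[name] = (new_machine, new_local_path)
```

By contrast, a scheme that is only location transparent may hide the machine from the name while still tying the name to a fixed place, so moving the file would force a rename.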


Naming Schemes: Three Main Approaches

1) Files named by a combination of their host name and local name; guarantees a unique systemwide name.

2) Attach remote directories to local directories, giving the appearance of a coherent directory tree; only previously mounted remote directories can be accessed transparently.

3) Total integration of the component file systems.

A single global name structure spans all the files in the system.

If a server is unavailable, some arbitrary set of