
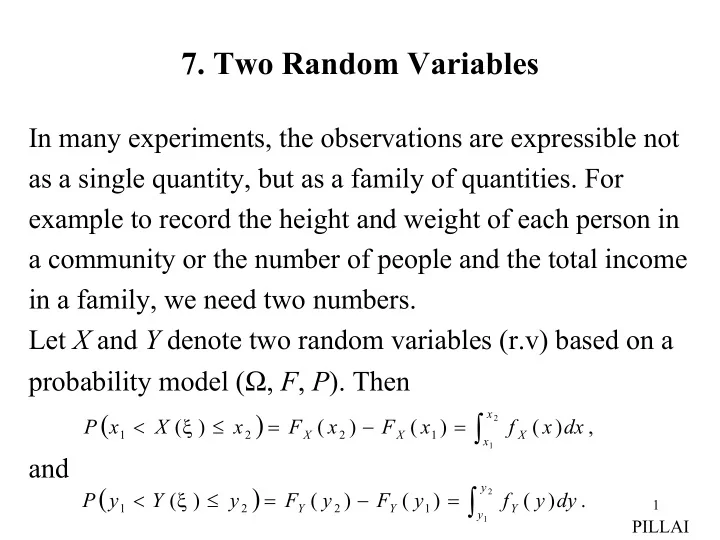


7. Two Random Variables

In many experiments, the observations are expressible not as a single quantity, but as a family of quantities. For example, to record the height and weight of each person in a community, or the number of people and the total income in a family, we need two numbers. Let $X$ and $Y$ denote two random variables (r.vs) based on a probability model $(\Omega, F, P)$. Then

$$P\big(x_1 < X(\xi) \le x_2\big) = F_X(x_2) - F_X(x_1) = \int_{x_1}^{x_2} f_X(x)\,dx,$$

and

$$P\big(y_1 < Y(\xi) \le y_2\big) = F_Y(y_2) - F_Y(y_1) = \int_{y_1}^{y_2} f_Y(y)\,dy.$$

PILLAI

What about the probability that the pair of r.vs $(X, Y)$ belongs to an arbitrary region $D$? In other words, how does one estimate, for example, $P\big[(x_1 < X(\xi) \le x_2) \cap (y_1 < Y(\xi) \le y_2)\big]$? Towards this, we define the joint probability distribution function of $X$ and $Y$ to be

$$F_{XY}(x, y) = P\big[(X(\xi) \le x) \cap (Y(\xi) \le y)\big] = P(X \le x,\, Y \le y) \ge 0, \tag{7-1}$$

where $x$ and $y$ are arbitrary real numbers.

Properties

(i)

$$F_{XY}(-\infty, y) = F_{XY}(x, -\infty) = 0, \qquad F_{XY}(+\infty, +\infty) = 1. \tag{7-2}$$

Since $\big(X(\xi) \le -\infty,\, Y(\xi) \le y\big) \subset \big(X(\xi) \le -\infty\big)$, we get

$F_{XY}(-\infty, y) \le P\big(X(\xi) \le -\infty\big) = 0.$ Similarly, since $\big(X(\xi) \le +\infty,\, Y(\xi) \le +\infty\big) = \Omega$, we get $F_{XY}(\infty, \infty) = P(\Omega) = 1.$

(ii)

$$P\big(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y\big) = F_{XY}(x_2, y) - F_{XY}(x_1, y). \tag{7-3}$$

$$P\big(X(\xi) \le x,\, y_1 < Y(\xi) \le y_2\big) = F_{XY}(x, y_2) - F_{XY}(x, y_1). \tag{7-4}$$

To prove (7-3), we note that for $x_2 > x_1$,

$$\big(X(\xi) \le x_2,\, Y(\xi) \le y\big) = \big(X(\xi) \le x_1,\, Y(\xi) \le y\big) \cup \big(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y\big)$$

and the mutually exclusive property of the events on the right side gives

$$P\big(X(\xi) \le x_2,\, Y(\xi) \le y\big) = P\big(X(\xi) \le x_1,\, Y(\xi) \le y\big) + P\big(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y\big),$$

which proves (7-3). Similarly (7-4) follows.

(iii)

$$P\big(x_1 < X(\xi) \le x_2,\, y_1 < Y(\xi) \le y_2\big) = F_{XY}(x_2, y_2) - F_{XY}(x_2, y_1) - F_{XY}(x_1, y_2) + F_{XY}(x_1, y_1). \tag{7-5}$$

This is the probability that $(X, Y)$ belongs to the rectangle $R_0$ in Fig. 7.1.

[Fig. 7.1: the rectangle $R_0$ in the $(X, Y)$ plane, bounded by $x_1$, $x_2$ on the $X$ axis and $y_1$, $y_2$ on the $Y$ axis]

To prove (7-5), we can make use of the following identity involving mutually exclusive events on the right side:

$$\big(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y_2\big) = \big(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y_1\big) \cup \big(x_1 < X(\xi) \le x_2,\, y_1 < Y(\xi) \le y_2\big).$$

This gives

$$P\big(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y_2\big) = P\big(x_1 < X(\xi) \le x_2,\, Y(\xi) \le y_1\big) + P\big(x_1 < X(\xi) \le x_2,\, y_1 < Y(\xi) \le y_2\big),$$

and the desired result in (7-5) follows by making use of (7-3) with $y = y_2$ and $y = y_1$ respectively.

Joint probability density function (Joint p.d.f)

By definition, the joint p.d.f of $X$ and $Y$ is given by

$$f_{XY}(x, y) = \frac{\partial^2 F_{XY}(x, y)}{\partial x\, \partial y}, \tag{7-6}$$

and hence we obtain the useful formula

$$F_{XY}(x, y) = \int_{-\infty}^{x} \int_{-\infty}^{y} f_{XY}(u, v)\, dv\, du. \tag{7-7}$$

Using (7-2), we also get

$$\int_{-\infty}^{+\infty} \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx\, dy = 1. \tag{7-8}$$
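As a rough numerical illustration of (7-5) and (7-7), the sketch below computes a rectangle probability in two ways: from the four corner values of the joint distribution function, and by integrating the joint p.d.f over the rectangle. The distribution used here (two independent exponential r.vs with unit rate) is an assumption chosen only because both $F_{XY}$ and $f_{XY}$ have closed forms; the function names are illustrative and not part of the lecture.

```python
import math

def joint_cdf(x, y):
    """F_XY(x, y) for two independent Exp(1) r.vs (assumed example distribution)."""
    if x <= 0 or y <= 0:
        return 0.0
    return (1.0 - math.exp(-x)) * (1.0 - math.exp(-y))

def joint_pdf(x, y):
    """f_XY(x, y) = d^2 F_XY / (dx dy) for the same assumed distribution, as in (7-6)."""
    if x <= 0 or y <= 0:
        return 0.0
    return math.exp(-(x + y))

def rect_prob_from_cdf(F, x1, x2, y1, y2):
    """Rectangle probability from the four corner values, as in (7-5)."""
    return F(x2, y2) - F(x2, y1) - F(x1, y2) + F(x1, y1)

def rect_prob_from_pdf(f, x1, x2, y1, y2, n=400):
    """Midpoint-rule approximation of the double integral of f over the rectangle."""
    hx = (x2 - x1) / n
    hy = (y2 - y1) / n
    total = 0.0
    for i in range(n):
        u = x1 + (i + 0.5) * hx
        for j in range(n):
            v = y1 + (j + 0.5) * hy
            total += f(u, v)
    return total * hx * hy

p_cdf = rect_prob_from_cdf(joint_cdf, 0.5, 1.5, 0.2, 1.0)
p_pdf = rect_prob_from_pdf(joint_pdf, 0.5, 1.5, 0.2, 1.0)
print(p_cdf, p_pdf)  # the two values should agree to several decimal places
```

The corner formula needs only four evaluations of $F_{XY}$, whereas the integral needs a grid over the rectangle, which is one reason (7-5) is convenient whenever the joint distribution function is known in closed form.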

To find the probability that $(X, Y)$ belongs to an arbitrary region $D$, we can make use of (7-5) and (7-7). From (7-5) and (7-7),

$$
\begin{aligned}
P\big(x < X(\xi) \le x + \Delta x,\, y < Y(\xi) \le y + \Delta y\big)
&= F_{XY}(x + \Delta x, y + \Delta y) - F_{XY}(x, y + \Delta y) - F_{XY}(x + \Delta x, y) + F_{XY}(x, y) \\
&= \int_{x}^{x + \Delta x} \int_{y}^{y + \Delta y} f_{XY}(u, v)\, dv\, du = f_{XY}(x, y)\, \Delta x\, \Delta y.
\end{aligned}
\tag{7-9}
$$

[Fig. 7.2: a region $D$ in the $(X, Y)$ plane containing a differential rectangle of sides $\Delta x$ and $\Delta y$]

Thus the probability that $(X, Y)$ belongs to a differential rectangle $\Delta x\, \Delta y$ equals $f_{XY}(x, y) \cdot \Delta x\, \Delta y$, and repeating this procedure over the union of non-overlapping differential rectangles in $D$, we get the useful result

$$P\big((X, Y) \in D\big) = \iint_{(x, y) \in D} f_{XY}(x, y)\, dx\, dy. \tag{7-10}$$

(iv) Marginal Statistics

In the context of several r.vs, the statistics of each individual one are called marginal statistics. Thus $F_X(x)$ is the marginal probability distribution function of $X$, and $f_X(x)$ is the marginal p.d.f of $X$. It is interesting to note that all marginals can be obtained from the joint p.d.f. In fact

$$F_X(x) = F_{XY}(x, +\infty), \qquad F_Y(y) = F_{XY}(+\infty, y). \tag{7-11}$$

Also

$$f_X(x) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dy, \qquad f_Y(y) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx. \tag{7-12}$$

To prove (7-11), we can make use of the identity

$$(X \le x) = (X \le x) \cap (Y \le +\infty)$$

so that $F_X(x) = P(X \le x) = P(X \le x,\, Y \le \infty) = F_{XY}(x, +\infty).$

To prove (7-12), we can make use of (7-7) and (7-11), which gives

$$F_X(x) = F_{XY}(x, +\infty) = \int_{-\infty}^{x} \left( \int_{-\infty}^{+\infty} f_{XY}(u, y)\, dy \right) du \tag{7-13}$$

and taking the derivative with respect to $x$ in (7-13), we get

$$f_X(x) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dy. \tag{7-14}$$

At this point, it is useful to know the formula for differentiation under integrals. Let

$$H(x) = \int_{a(x)}^{b(x)} h(x, y)\, dy. \tag{7-15}$$

Then its derivative with respect to $x$ is given by

$$\frac{dH(x)}{dx} = \frac{db(x)}{dx}\, h(x, b) - \frac{da(x)}{dx}\, h(x, a) + \int_{a(x)}^{b(x)} \frac{\partial h(x, y)}{\partial x}\, dy. \tag{7-16}$$

Obvious use of (7-16) in (7-13) gives (7-14).
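The differentiation formula (7-16) can be sanity-checked numerically. The sketch below is one possible check under assumed inputs: the integrand $h(x, y) = x y^2$ and the limits $a(x) = x$, $b(x) = x^2$ are arbitrary choices made for illustration, not taken from the lecture. It compares the right side of (7-16) with a central finite difference of $H(x)$ from (7-15).

```python
def integrate(g, lo, hi, n=2000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    step = (hi - lo) / n
    return step * sum(g(lo + (k + 0.5) * step) for k in range(n))

# Assumed example: h(x, y) = x * y^2 with limits a(x) = x and b(x) = x^2.
def h(x, y):
    return x * y ** 2

def dh_dx(x, y):
    return y ** 2          # partial derivative of h with respect to x

def a(x):
    return x

def b(x):
    return x ** 2

def da_dx(x):
    return 1.0

def db_dx(x):
    return 2.0 * x

def H(x):
    """H(x) as defined in (7-15)."""
    return integrate(lambda y: h(x, y), a(x), b(x))

def leibniz_derivative(x):
    """Right side of (7-16): two boundary terms plus the integral of dh/dx."""
    boundary = db_dx(x) * h(x, b(x)) - da_dx(x) * h(x, a(x))
    interior = integrate(lambda y: dh_dx(x, y), a(x), b(x))
    return boundary + interior

def finite_difference(x, eps=1e-4):
    """Central-difference estimate of dH/dx, independent of (7-16)."""
    return (H(x + eps) - H(x - eps)) / (2.0 * eps)

print(leibniz_derivative(1.5), finite_difference(1.5))
```

For this particular choice, $H(x) = (x^7 - x^4)/3$ in closed form, so both numbers can also be compared with $(7x^6 - 4x^3)/3$. In (7-13) the limits on the inner integral do not depend on $x$, so only the outer upper limit contributes when differentiating.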

If $X$ and $Y$ are discrete r.vs, then $p_{ij} \triangleq P(X = x_i,\, Y = y_j)$ represents their joint p.d.f, and their respective marginal p.d.fs are given by

$$P(X = x_i) = \sum_j P(X = x_i,\, Y = y_j) = \sum_j p_{ij} \tag{7-17}$$

and

$$P(Y = y_j) = \sum_i P(X = x_i,\, Y = y_j) = \sum_i p_{ij}. \tag{7-18}$$

Assuming that $P(X = x_i,\, Y = y_j)$ is written out in the form of a rectangular array, to obtain $P(X = x_i)$ from (7-17), one needs to add up all entries in the $i$-th row.

$$
\begin{array}{c|cccccc}
 & & & & \sum_i p_{ij} & & \\ \hline
 & p_{11} & p_{12} & \cdots & p_{1j} & \cdots & p_{1n} \\
 & p_{21} & p_{22} & \cdots & p_{2j} & \cdots & p_{2n} \\
 & \vdots & \vdots & & \vdots & & \vdots \\
\sum_j p_{ij} & p_{i1} & p_{i2} & \cdots & p_{ij} & \cdots & p_{in} \\
 & \vdots & \vdots & & \vdots & & \vdots \\
 & p_{m1} & p_{m2} & \cdots & p_{mj} & \cdots & p_{mn}
\end{array}
$$

Fig. 7.3

It used to be a practice for insurance companies routinely to scribble out these sum values in the left and top margins, thus suggesting the name marginal densities! (Fig 7.3).
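The row and column sums in (7-17) and (7-18) are easy to sketch in code. The 2-by-3 table below is made-up data used only for illustration, and the helper names are hypothetical.

```python
# Hypothetical joint table p[i][j] = P(X = x_i, Y = y_j); the entries sum to 1.
joint = [
    [0.10, 0.20, 0.10],
    [0.05, 0.25, 0.30],
]

def marginal_x(p):
    """P(X = x_i): add up all entries in the i-th row, as in (7-17)."""
    return [sum(row) for row in p]

def marginal_y(p):
    """P(Y = y_j): add up all entries in the j-th column, as in (7-18)."""
    return [sum(column) for column in zip(*p)]

p_x = marginal_x(joint)
p_y = marginal_y(joint)
print(p_x)  # row sums, written in the side margin of the table
print(p_y)  # column sums, written in the top margin of the table
```

Each list of marginal probabilities sums to 1, since each is the total of the whole table taken in a different order.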

From (7-11) and (7-12), the joint P.D.F and/or the joint p.d.f represent complete information about the r.vs, and their marginal p.d.fs can be evaluated from the joint p.d.f. However, given marginals, (most often) it will not be possible to compute the joint p.d.f. Consider the following example:

Example 7.1: Given

$$
f_{XY}(x, y) =
\begin{cases}
\text{constant}, & 0 < x < y < 1, \\
0, & \text{otherwise}.
\end{cases}
\tag{7-19}
$$

Obtain the marginal p.d.fs $f_X(x)$ and $f_Y(y)$.

[Fig. 7.4: the shaded region $0 < x < y < 1$ in the $(X, Y)$ plane]

Solution: It is given that the joint p.d.f $f_{XY}(x, y)$ is a constant in the shaded region in Fig. 7.4. We can use (7-8) to determine that constant $c$. From (7-8)

$$\int_{-\infty}^{+\infty} \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx\, dy = \int_{y=0}^{1} \left( \int_{x=0}^{y} c\, dx \right) dy = \int_{y=0}^{1} c\,y\, dy = \left. \frac{c\,y^2}{2} \right|_{0}^{1} = \frac{c}{2} = 1. \tag{7-20}$$

Thus $c = 2$. Moreover from (7-14)

$$f_X(x) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dy = \int_{y=x}^{1} 2\, dy = 2(1 - x), \qquad 0 < x < 1, \tag{7-21}$$

and similarly

$$f_Y(y) = \int_{-\infty}^{+\infty} f_{XY}(x, y)\, dx = \int_{x=0}^{y} 2\, dx = 2y, \qquad 0 < y < 1. \tag{7-22}$$

Clearly, in this case given $f_X(x)$ and $f_Y(y)$ as in (7-21)-(7-22), it will not be possible to obtain the original joint p.d.f in (7-19).

Example 7.2: $X$ and $Y$ are said to be jointly normal (Gaussian) distributed, if their joint p.d.f has the following form:

$$
f_{XY}(x, y) = \frac{1}{2\pi \sigma_X \sigma_Y \sqrt{1 - \rho^2}}
\exp\left\{ \frac{-1}{2(1 - \rho^2)} \left( \frac{(x - \mu_X)^2}{\sigma_X^2} - \frac{2\rho (x - \mu_X)(y - \mu_Y)}{\sigma_X \sigma_Y} + \frac{(y - \mu_Y)^2}{\sigma_Y^2} \right) \right\},
\tag{7-23}
$$

$$-\infty < x < +\infty, \qquad -\infty < y < +\infty, \qquad |\rho| < 1.$$
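To connect (7-23) with the marginal formula (7-14), the sketch below evaluates the jointly normal p.d.f and integrates it numerically over $y$. It then compares the result with a one-dimensional normal density with mean $\mu_X$ and standard deviation $\sigma_X$. That comparison relies on the standard fact that the marginals of a jointly normal pair are themselves normal, which is not derived in this part of the lecture. The parameter values are arbitrary and the function names are illustrative.

```python
import math

def joint_normal_pdf(x, y, mu_x, mu_y, sigma_x, sigma_y, rho):
    """Jointly normal p.d.f, written term by term as in (7-23)."""
    zx = (x - mu_x) / sigma_x
    zy = (y - mu_y) / sigma_y
    quad = (zx * zx - 2.0 * rho * zx * zy + zy * zy) / (2.0 * (1.0 - rho * rho))
    norm = 2.0 * math.pi * sigma_x * sigma_y * math.sqrt(1.0 - rho * rho)
    return math.exp(-quad) / norm

def normal_pdf(x, mu, sigma):
    """One-dimensional normal density, used only as the reference value."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def marginal_x(x, mu_x, mu_y, sigma_x, sigma_y, rho, n=4000, width=10.0):
    """Midpoint-rule version of (7-14): integrate the joint p.d.f over y.

    The infinite range is truncated to mu_y +/- width * sigma_y (an assumption
    that is adequate when x is within a few sigma_x of mu_x).
    """
    lo = mu_y - width * sigma_y
    hi = mu_y + width * sigma_y
    step = (hi - lo) / n
    total = 0.0
    for k in range(n):
        y = lo + (k + 0.5) * step
        total += joint_normal_pdf(x, y, mu_x, mu_y, sigma_x, sigma_y, rho)
    return total * step

# Arbitrary illustrative parameters.
params = dict(mu_x=1.0, mu_y=-2.0, sigma_x=2.0, sigma_y=0.5, rho=0.6)
numeric = marginal_x(2.0, **params)
reference = normal_pdf(2.0, params["mu_x"], params["sigma_x"])
print(numeric, reference)
```

Note that the reference density does not involve $\rho$ at all: jointly normal pairs with different values of $\rho$ share the same marginals, which is another instance of the remark that marginals alone do not determine the joint p.d.f.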
