Introduction to Mobile Robotics: Probabilistic Robotics (Wolfram Burgard)


Introduction to Mobile Robotics
Probabilistic Robotics
Wolfram Burgard

Probabilistic Robotics
Key idea: explicit representation of uncertainty, using the calculus of probability theory.
Perception = state estimation
Action = utility optimization

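Axioms of Probability Theory
$P(A)$ denotes the probability that proposition $A$ is true.
1. $0 \le P(A) \le 1$
2. $P(\text{True}) = 1$ and $P(\text{False}) = 0$
3. $P(A \lor B) = P(A) + P(B) - P(A \land B)$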

A Closer Look at Axiom 3
[Figure: Venn diagram showing A, B, and their overlap A ∧ B inside the set True]

Using the Axioms

Discrete Random Variables
$X$ denotes a random variable.
$X$ can take on a countable number of values in $\{x_1, x_2, \ldots, x_n\}$.
$P(X = x_i)$, or $P(x_i)$, is the probability that the random variable $X$ takes on value $x_i$.
$P(\cdot)$ is called the probability mass function.

Continuous Random Variables
$X$ takes on values in the continuum.
$p(X = x)$, or $p(x)$, is a probability density function.
[Figure: example density, p(x) plotted against x]

"Probability Sums up to One"
Discrete case: $\sum_x P(x) = 1$
Continuous case: $\int p(x)\, dx = 1$

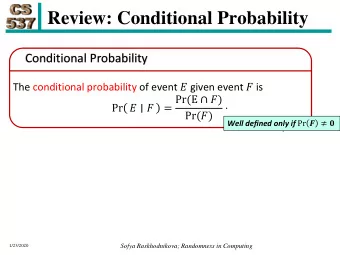

Joint and Conditional Probability
$P(X = x \text{ and } Y = y) = P(x, y)$
If $X$ and $Y$ are independent, then $P(x, y) = P(x)\, P(y)$.
$P(x \mid y)$ is the probability of $x$ given $y$:
$P(x \mid y) = P(x, y) / P(y)$
$P(x, y) = P(x \mid y)\, P(y)$
If $X$ and $Y$ are independent, then $P(x \mid y) = P(x)$.

Law of Total Probability
Discrete case: $P(x) = \sum_y P(x \mid y)\, P(y)$
Continuous case: $p(x) = \int p(x \mid y)\, p(y)\, dy$

Marginalization
Discrete case: $P(x) = \sum_y P(x, y)$
Continuous case: $p(x) = \int p(x, y)\, dy$

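Bayes Formula
$P(x, y) = P(x \mid y)\, P(y) = P(y \mid x)\, P(x)$
$\Rightarrow\; P(x \mid y) = \dfrac{P(y \mid x)\, P(x)}{P(y)}$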

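Normalization
$P(x \mid y) = \eta\, P(y \mid x)\, P(x)$, with $\eta = P(y)^{-1} = \dfrac{1}{\sum_x P(y \mid x)\, P(x)}$
Algorithm: for all $x$, compute the unnormalized product $P(y \mid x)\, P(x)$; sum these products and invert the sum to obtain $\eta$; then multiply each product by $\eta$.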
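A minimal Python sketch of normalization as it is used with Bayes' formula: compute the unnormalized products, sum them, and rescale. The function name `bayes_posterior`, the dictionary layout, and the numbers are assumptions made for illustration and do not come from the slides.

```python
def bayes_posterior(prior, likelihood):
    """Return P(x | y) for every x.

    prior[x]      = P(x)
    likelihood[x] = P(y | x) for the one observed y
    (Both dictionaries are a hypothetical layout chosen for this sketch.)
    """
    # Unnormalized posterior: aux(x) = P(y | x) * P(x)
    aux = {x: likelihood[x] * prior[x] for x in prior}
    # The sum of aux over x equals P(y); eta is its inverse.
    total = sum(aux.values())
    if total == 0:
        raise ValueError("the observation has zero probability under the prior")
    eta = 1.0 / total
    return {x: eta * a for x, a in aux.items()}


# Hypothetical numbers, for illustration only.
posterior = bayes_posterior({"a": 0.2, "b": 0.8}, {"a": 0.5, "b": 0.25})
print(posterior)
```

Because $\eta$ is recovered from the sum, $P(y)$ never has to be known in advance.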

Bayes Rule with Background Knowledge
$P(x \mid y, z) = \dfrac{P(y \mid x, z)\, P(x \mid z)}{P(y \mid z)}$

Conditional Independence
$P(x, y \mid z) = P(x \mid z)\, P(y \mid z)$
Equivalent to $P(x \mid z) = P(x \mid z, y)$ and $P(y \mid z) = P(y \mid z, x)$.
But this does not necessarily mean $P(x, y) = P(x)\, P(y)$ (independence / marginal independence).
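A small numerical check can make the last point concrete. The sketch below builds a joint distribution in which $z$ is a common cause of $x$ and $y$, so the two are conditionally independent given $z$ by construction, and then tests marginal independence. The model and all numbers are hypothetical.

```python
from itertools import product

# Hypothetical binary model: z influences both x and y.
p_z = {0: 0.5, 1: 0.5}
p_x_given_z = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.2, 1: 0.8}}  # p_x_given_z[z][x]
p_y_given_z = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.2, 1: 0.8}}  # p_y_given_z[z][y]

# Joint P(x, y, z) = P(z) P(x | z) P(y | z)
joint = {
    (x, y, z): p_z[z] * p_x_given_z[z][x] * p_y_given_z[z][y]
    for x, y, z in product((0, 1), repeat=3)
}


def marginal(keep):
    """Sum the joint over every variable whose position is not in `keep`."""
    out = {}
    for xyz, p in joint.items():
        key = tuple(xyz[i] for i in keep)
        out[key] = out.get(key, 0.0) + p
    return out


p_xy, p_x, p_y = marginal((0, 1)), marginal((0,)), marginal((1,))
p_xz, p_yz, pz = marginal((0, 2)), marginal((1, 2)), marginal((2,))

# P(x, y | z) == P(x | z) P(y | z) for all x, y, z?
cond_indep = all(
    abs(joint[(x, y, z)] / pz[(z,)]
        - (p_xz[(x, z)] / pz[(z,)]) * (p_yz[(y, z)] / pz[(z,)])) < 1e-12
    for x, y, z in product((0, 1), repeat=3)
)
# P(x, y) == P(x) P(y) for all x, y?
marg_indep = all(
    abs(p_xy[(x, y)] - p_x[(x,)] * p_y[(y,)]) < 1e-12
    for x, y in product((0, 1), repeat=2)
)
print(cond_indep, marg_indep)
```

With these numbers, $P(x{=}1, y{=}1) = 0.325$ while $P(x{=}1)\,P(y{=}1) = 0.2025$, so the variables are dependent once $z$ is marginalized out.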

Simple Example of State Estimation
Suppose a robot obtains measurement $z$.
What is $P(\text{open} \mid z)$?

Causal vs. Diagnostic Reasoning
$P(\text{open} \mid z)$ is diagnostic.
$P(z \mid \text{open})$ is causal.
In some situations, causal knowledge is easier to obtain: count frequencies!
Bayes rule allows us to use causal knowledge:
$P(\text{open} \mid z) = \dfrac{P(z \mid \text{open})\, P(\text{open})}{P(z)}$

Example
$P(z \mid \text{open}) = 0.6$, $P(z \mid \neg\text{open}) = 0.3$
$P(\text{open}) = P(\neg\text{open}) = 0.5$
$P(\text{open} \mid z) = \dfrac{P(z \mid \text{open})\, P(\text{open})}{P(z \mid \text{open})\, P(\text{open}) + P(z \mid \neg\text{open})\, P(\neg\text{open})}$
$P(\text{open} \mid z) = \dfrac{0.6 \cdot 0.5}{0.6 \cdot 0.5 + 0.3 \cdot 0.5} = \dfrac{0.3}{0.3 + 0.15} = 0.67$
$z$ raises the probability that the door is open.

Combining Evidence
Suppose our robot obtains another observation $z_2$.
How can we integrate this new information?
More generally, how can we estimate $P(x \mid z_1, \ldots, z_n)$?

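Recursive Bayesian Updating
$P(x \mid z_1, \ldots, z_n) = \dfrac{P(z_n \mid x, z_1, \ldots, z_{n-1})\, P(x \mid z_1, \ldots, z_{n-1})}{P(z_n \mid z_1, \ldots, z_{n-1})}$
Markov assumption: $z_n$ is independent of $z_1, \ldots, z_{n-1}$ if we know $x$.
$P(x \mid z_1, \ldots, z_n) = \eta\, P(z_n \mid x)\, P(x \mid z_1, \ldots, z_{n-1})$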

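Example: Second Measurement
$P(z_2 \mid \text{open}) = 0.25$, $P(z_2 \mid \neg\text{open}) = 0.3$
$P(\text{open} \mid z_1) = 2/3$
$P(\text{open} \mid z_2, z_1) = \dfrac{0.25 \cdot \frac{2}{3}}{0.25 \cdot \frac{2}{3} + 0.3 \cdot \frac{1}{3}} = \dfrac{5}{8} = 0.625$
$z_2$ lowers the probability that the door is open.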
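The two door measurements can be folded in one at a time, with each posterior serving as the prior for the next update. The sketch below uses the measurement probabilities from the slides; the helper name `update` and the label "closed" for ¬open are assumptions of this example.

```python
def update(belief, likelihood):
    """One recursive Bayes step: belief[x] <- eta * P(z | x) * belief[x]."""
    unnormalized = {x: likelihood[x] * belief[x] for x in belief}
    eta = 1.0 / sum(unnormalized.values())
    return {x: eta * p for x, p in unnormalized.items()}


# Prior and sensor values as given in the example slides.
belief = {"open": 0.5, "closed": 0.5}
z1 = {"open": 0.6, "closed": 0.3}    # P(z1 | x)
z2 = {"open": 0.25, "closed": 0.3}   # P(z2 | x)

history = []
for likelihood in (z1, z2):
    belief = update(belief, likelihood)
    history.append(belief["open"])

print(history)  # P(open | z1), then P(open | z1, z2)
```

The first update yields 2/3 and the second 5/8, so the second measurement pulls the belief back toward "closed" even though it is combined with the same rule.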

Actions
Often the world is dynamic, since actions carried out by the robot, actions carried out by other agents, or just the passing of time change the world.
How can we incorporate such actions?

Typical Actions
The robot turns its wheels to move.
The robot uses its manipulator to grasp an object.
Plants grow over time.
Actions are never carried out with absolute certainty.
In contrast to measurements, actions generally increase the uncertainty.

Modeling Actions
To incorporate the outcome of an action $u$ into the current "belief", we use the conditional pdf $P(x \mid u, x')$.
This term specifies the probability that executing $u$ changes the state from $x'$ to $x$.

Example: Closing the door

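State Transitions
$P(x \mid u, x')$ for $u$ = "close door":
$P(\text{closed} \mid u, \text{open}) = 0.9$, $P(\text{open} \mid u, \text{open}) = 0.1$
$P(\text{closed} \mid u, \text{closed}) = 1$, $P(\text{open} \mid u, \text{closed}) = 0$
If the door is open, the action "close door" succeeds in 90% of all cases.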

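Integrating the Outcome of Actions
Continuous case: $P(x \mid u) = \int P(x \mid u, x')\, P(x' \mid u)\, dx'$
Discrete case: $P(x \mid u) = \sum_{x'} P(x \mid u, x')\, P(x' \mid u)$
We will make an independence assumption to get rid of the $u$ in the second factor in the sum.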

Example: The Resulting Belief
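The discrete action update can be sketched in a few lines of Python. The transition values are those of the "close door" slide. The starting belief of 5/8 for "open" is an assumption of this sketch, taken to be the belief left by the two measurements earlier in the deck, and the function name `predict` is hypothetical.

```python
# transition[x_prev][x_next] = P(x_next | u = "close door", x_prev)
transition = {
    "open": {"open": 0.1, "closed": 0.9},
    "closed": {"open": 0.0, "closed": 1.0},
}


def predict(belief, transition):
    """Discrete action update: Bel'(x) = sum over x' of P(x | u, x') * Bel(x')."""
    return {
        x: sum(transition[x_prev][x] * belief[x_prev] for x_prev in belief)
        for x in belief
    }


# Assumed belief before the action (5/8 open, 3/8 closed).
belief = {"open": 5 / 8, "closed": 3 / 8}
after = predict(belief, transition)
print(after)
```

Under these assumptions the belief becomes 1/16 for "open" and 15/16 for "closed". No normalization step is needed because each row of the transition table already sums to one.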

Bayes Filters: Framework
Given:
Stream of observations $z$ and action data $u$
Sensor model $P(z \mid x)$
Action model $P(x \mid u, x')$
Prior probability of the system state $P(x)$
Wanted:
Estimate of the state $X$ of a dynamical system
The posterior of the state is also called the belief: $Bel(x_t) = P(x_t \mid u_1, z_1, \ldots, u_t, z_t)$

Markov Assumption
Underlying assumptions:
Static world
Independent noise
Perfect model, no approximation errors

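Bayes Filters
Notation: $z$ = observation, $u$ = action, $x$ = state.
The derivation applies, in order: Bayes rule, the Markov assumption, the law of total probability, the Markov assumption, and the Markov assumption once more.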
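The derivation itself did not survive extraction. The following is a reconstruction of the standard Bayes filter derivation, offered as a sketch that follows the step labels on the slide and ends at the update equation of the Bayes filter algorithm; the exact intermediate expressions on the original slide may differ.

```latex
\begin{align*}
Bel(x_t) &= P(x_t \mid u_1, z_1, \ldots, u_t, z_t) \\
\text{(Bayes)}\quad
  &= \eta\, P(z_t \mid x_t, u_1, z_1, \ldots, u_t)\, P(x_t \mid u_1, z_1, \ldots, u_t) \\
\text{(Markov)}\quad
  &= \eta\, P(z_t \mid x_t)\, P(x_t \mid u_1, z_1, \ldots, u_t) \\
\text{(Total prob.)}\quad
  &= \eta\, P(z_t \mid x_t) \int P(x_t \mid u_1, z_1, \ldots, u_t, x_{t-1})\,
     P(x_{t-1} \mid u_1, z_1, \ldots, u_t)\, dx_{t-1} \\
\text{(Markov)}\quad
  &= \eta\, P(z_t \mid x_t) \int P(x_t \mid u_t, x_{t-1})\,
     P(x_{t-1} \mid u_1, z_1, \ldots, u_t)\, dx_{t-1} \\
\text{(Markov)}\quad
  &= \eta\, P(z_t \mid x_t) \int P(x_t \mid u_t, x_{t-1})\,
     P(x_{t-1} \mid u_1, z_1, \ldots, z_{t-1})\, dx_{t-1} \\
  &= \eta\, P(z_t \mid x_t) \int P(x_t \mid u_t, x_{t-1})\, Bel(x_{t-1})\, dx_{t-1}
\end{align*}
```

The last Markov step drops $u_t$ from the conditioning of $x_{t-1}$, since an action chosen at time $t$ is assumed to carry no information about the earlier state.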

Bayes Filter Algorithm
$Bel(x_t) = \eta\, P(z_t \mid x_t) \int P(x_t \mid u_t, x_{t-1})\, Bel(x_{t-1})\, dx_{t-1}$
Algorithm Bayes_filter(Bel(x), d):
  η = 0
  If d is a perceptual data item z then
    For all x do
      Bel'(x) = P(z | x) Bel(x)
      η = η + Bel'(x)
    For all x do
      Bel'(x) = η⁻¹ Bel'(x)
  Else if d is an action data item u then
    For all x do
      Bel'(x) = ∫ P(x | u, x') Bel(x') dx'
  Return Bel'(x)
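For a finite state space the integral becomes a sum, and the algorithm fits in one short function. The following is a minimal Python sketch under that assumption, not the lecture's implementation: the function signature, the `("z", ...)` / `("u", ...)` tagging of data items, and the nested-dictionary models are all hypothetical choices. The numbers reuse the door example.

```python
def bayes_filter(belief, d, sensor_model, action_model):
    """One step of a discrete Bayes filter.

    belief[x]                    = Bel(x)
    d                            = ("z", name) for a measurement, ("u", name) for an action
    sensor_model[z][x]           = P(z | x)
    action_model[u][x_prev][x]   = P(x | u, x_prev)
    """
    kind, name = d
    if kind == "z":
        # Measurement update: weight by the likelihood, then normalize.
        new_belief = {x: sensor_model[name][x] * belief[x] for x in belief}
        eta = sum(new_belief.values())
        return {x: p / eta for x, p in new_belief.items()}
    if kind == "u":
        # Action update: sum over all predecessor states.
        trans = action_model[name]
        return {
            x: sum(trans[x_prev][x] * belief[x_prev] for x_prev in belief)
            for x in belief
        }
    raise ValueError(f"unknown data item kind: {kind!r}")


# Door example: two measurements followed by the "close door" action.
sensor_model = {
    "z1": {"open": 0.6, "closed": 0.3},
    "z2": {"open": 0.25, "closed": 0.3},
}
action_model = {
    "close": {
        "open": {"open": 0.1, "closed": 0.9},
        "closed": {"open": 0.0, "closed": 1.0},
    }
}

belief = {"open": 0.5, "closed": 0.5}
for d in [("z", "z1"), ("z", "z2"), ("u", "close")]:
    belief = bayes_filter(belief, d, sensor_model, action_model)
print(belief)
```

Keeping the two branches in one function mirrors the pseudocode; a larger system would more likely separate prediction and correction so that each can be tested alone.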

Bayes Filters are Familiar!
$Bel(x_t) = \eta\, P(z_t \mid x_t) \int P(x_t \mid u_t, x_{t-1})\, Bel(x_{t-1})\, dx_{t-1}$
Kalman filters
Particle filters
Hidden Markov models
Dynamic Bayesian networks
Partially Observable Markov Decision Processes (POMDPs)

Probabilistic Localization


Summary
Bayes rule allows us to compute probabilities that are hard to assess otherwise.
Under the Markov assumption, recursive Bayesian updating can be used to efficiently combine evidence.
Bayes filters are a probabilistic tool for estimating the state of dynamic systems.
