CIS 371 (Martin): Virtual Memory 1

CIS 371 Computer Organization and Design

Unit 9: Virtual Memory

Slides developed by Milo Martin & Amir Roth at the University of Pennsylvania with sources that included University of Wisconsin slides by Mark Hill, Guri Sohi, Jim Smith, and David Wood.

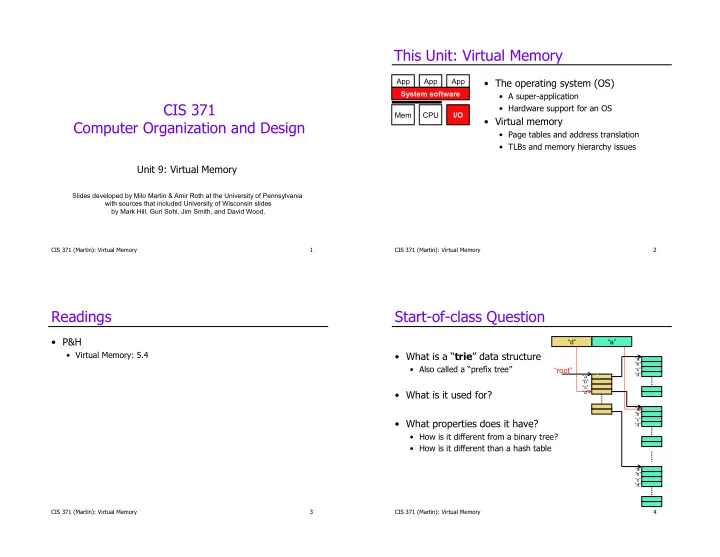

This Unit: Virtual Memory

- The operating system (OS)

  - A super-application

  - Hardware support for an OS

- Virtual memory

  - Page tables and address translation

  - TLBs and memory hierarchy issues

Figure: system layers. Applications (App, App, App) run on system software, which runs on the hardware (CPU, Mem, I/O).

Readings

- P&H

  - Virtual Memory: 5.4

Start-of-class Question

- What is a “trie” data structure?

  - Also called a “prefix tree”

- What is it used for?

- What properties does it have?

  - How is it different from a binary tree?

  - How is it different from a hash table?
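As a starting point for the questions above, here is a minimal sketch of a trie, not a definitive implementation. It assumes a four-symbol alphabet (“a” through “d”), and the names `TrieNode`, `Trie`, `insert`, and `lookup` are hypothetical, chosen only for illustration. Each node holds one child slot per symbol, so a lookup follows one child pointer per character of the key. It involves no key comparisons (unlike a binary search tree) and no hashing (unlike a hash table).

```python
class TrieNode:
    """One trie node: a fixed-size array of children, one slot per symbol."""

    ALPHABET = "abcd"  # assumption: a four-symbol alphabet, for illustration

    def __init__(self):
        self.children = [None] * len(self.ALPHABET)
        self.value = None  # payload stored where a key ends, if any


class Trie:
    def __init__(self):
        self.root = TrieNode()

    def insert(self, key, value):
        """Walk down from the root, creating missing nodes along the way."""
        node = self.root
        for ch in key:
            i = TrieNode.ALPHABET.index(ch)  # raises ValueError outside the alphabet
            if node.children[i] is None:
                node.children[i] = TrieNode()
            node = node.children[i]
        node.value = value

    def lookup(self, key):
        """Follow one child pointer per symbol; return None if the path is missing."""
        node = self.root
        for ch in key:
            i = TrieNode.ALPHABET.index(ch)
            node = node.children[i]
            if node is None:
                return None
        return node.value


trie = Trie()
trie.insert("ad", 42)
print(trie.lookup("ad"))  # 42
print(trie.lookup("ab"))  # None: the "a" node exists, but it has no "b" child
```

Note that only the nodes along inserted keys are ever allocated, so a sparsely populated key space stays small even though every node reserves a slot for each symbol.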

Figure: a trie. A “root” node has child edges labeled “a”, “b”, “c”, and “d”; each child in turn has its own edges labeled “a”, “b”, “c”, and “d”. The path “a”, “d” is marked.