PCA: algorithm

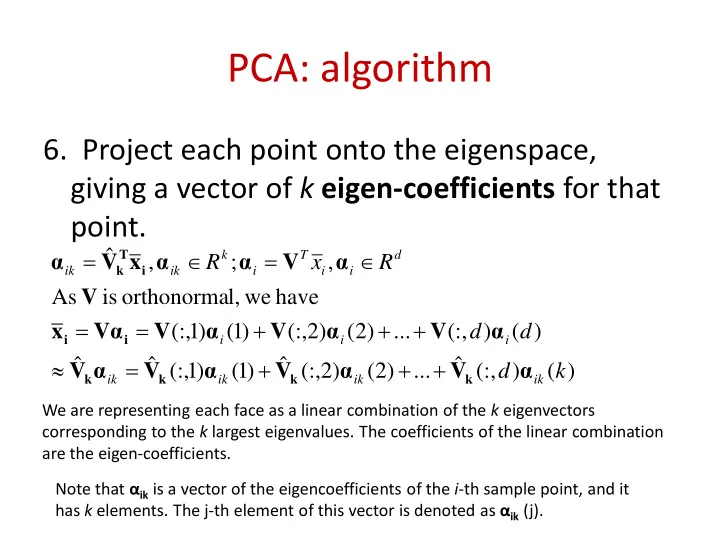

6. Project each point onto the eigenspace, giving a vector of k eigen-coefficients for that point.

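$$
\alpha_{ik} = \hat{V}_k^T x_i,\ \ \alpha_{ik} \in \mathbb{R}^k; \qquad \alpha_i = V^T x_i,\ \ \alpha_i \in \mathbb{R}^d
$$

As $V$ is orthonormal, we have

$$
\begin{aligned}
x_i = V\alpha_i &= V(:,1)\,\alpha_i(1) + V(:,2)\,\alpha_i(2) + \dots + V(:,d)\,\alpha_i(d) \\
&\approx \hat{V}_k\,\alpha_{ik} = \hat{V}_k(:,1)\,\alpha_{ik}(1) + \hat{V}_k(:,2)\,\alpha_{ik}(2) + \dots + \hat{V}_k(:,k)\,\alpha_{ik}(k)
\end{aligned}
$$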

We are representing each face as a linear combination of the k eigenvectors corresponding to the k largest eigenvalues. The coefficients of the linear combination are the eigen-coefficients. Note that $\alpha_{ik}$ is the vector of eigen-coefficients of the i-th sample point, and it has k elements. The j-th element of this vector is denoted $\alpha_{ik}(j)$.
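The projection step can be sketched in NumPy as follows. This is a minimal illustration, not the slide's own code. It assumes the points are stored as columns of a d × n matrix, that they have been mean-subtracted, and that the covariance matrix is normalized by n − 1. The toy data and the names `Xc`, `V_k`, `alpha_k` are hypothetical.

```python
import numpy as np

# Toy data (assumption): n points in d dimensions, stored as columns.
rng = np.random.default_rng(0)
d, n, k = 5, 20, 2
X = rng.normal(size=(d, n))
Xc = X - X.mean(axis=1, keepdims=True)   # mean-subtracted points

# Eigen-decomposition of the d x d covariance matrix (1/(n-1) scaling assumed).
C = Xc @ Xc.T / (n - 1)
eigvals, V = np.linalg.eigh(C)           # eigh returns ascending eigenvalues
order = np.argsort(eigvals)[::-1]        # reorder so the largest come first
V = V[:, order]                          # columns of V are orthonormal
V_k = V[:, :k]                           # eigenvectors of the k largest eigenvalues

# Step 6: project each point onto the eigenspace.
alpha_k = V_k.T @ Xc                     # k x n, column i holds alpha_ik
alpha = V.T @ Xc                         # d x n, column i holds alpha_i

# Exact expansion with all d eigenvectors, approximation with only k.
X_exact = V @ alpha
X_approx = V_k @ alpha_k
```

Column `i` of `alpha_k` holds the k eigen-coefficients of the i-th point, and `alpha_k[j, i]` plays the role of the j-th element of that vector (zero-based here). Because the columns of `V` are orthonormal, the squared error of `X_approx` equals the sum of squares of the discarded coefficients `alpha[k:]`.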