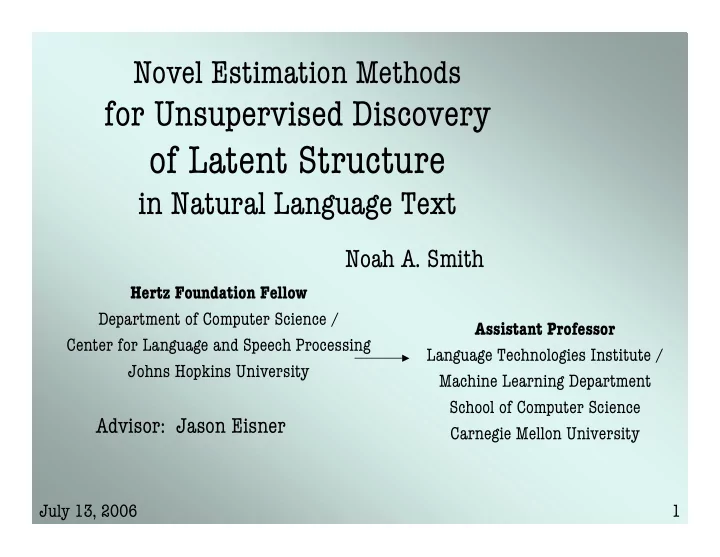

Novel Estimation Methods for Unsupervised Discovery of Latent Structure in Natural Language Text
Noah A. Smith
Hertz Foundation Fellow, Department of Computer Science / Center for Language and Speech Processing, Johns Hopkins University
Advisor: Jason Eisner
Assistant Professor, Language Technologies Institute / Machine Learning Department, School of Computer Science, Carnegie Mellon University
July 13, 2006 1

Situating the Thesis • Too much information in the world! • Most information is represented linguistically. – Most of us can understand one language or more. • How can computers help? • Can NLP systems “build themselves”? July 13, 2006 2

Modern NLP
Natural Language Processing draws on two fields:
• Linguistics / Cognitive Science: symbolic formalisms for elegance, efficiency, and intelligibility.
• Machine Learning / Statistics: build models empirically from data; language learning and processing are inference.
July 13, 2006 3

An Example: Parsing
[Diagram: Sentence and Model → Discrete Search (Dynamic Programming Algorithm) → Parse Tree]
July 13, 2006 4

Is Parsing Useful? • Speech recognition (Chelba & Jelinek, 1998) • Text correction (Shieber & Tao, 2003) • Machine translation (Chiang, 2005) • Information extraction (Viola and Narasimhan, 2005) • NL interfaces to databases (Zettlemoyer & Collins, 2005) Different parsers for different problems, and learning depends on the task. July 13, 2006 5

The Current Bottleneck • Empirical methods are great when you have enough of the right data. • Reliable unsupervised learning would let us more cheaply: – Build models for new domains – Train systems for new languages – Explore new representations (hidden structures) – Focus more on applications July 13, 2006 6

Central Practical Problem of the Thesis
• How far can we get with unsupervised estimation?
[Diagram: Sentence and Model → Discrete Search (Dynamic Programming Algorithm) → Parse Tree]
July 13, 2006 7

Deeper Problem
• How far can we get with unsupervised estimation?
[Diagram: many structured inputs → Model → structured output]
July 13, 2006 8

Outline of the Talk
• Learning To Parse (Chapters 1, 2)
• Learning = Optimizing a Function (Chapter 3)
  – Maximum Likelihood by EM
• Chapters 4, 5, 6:
  – Improving the Function: Contrastive Estimation
  – Improving the Optimizer: Deterministic Annealing
  – Improving the Function and the Optimizer: Structural Annealing
• Multilingual Experiments (Chapter 7): German, English, Bulgarian, Mandarin, Turkish, Portuguese
July 13, 2006 9

Dependency Parsing
• Underlies many linguistic theories
• Simple model & algorithms (Eisner, 1996)
• Projectivity constraint → context-free (cf. McDonald et al., 2005)
• Unsupervised learning:
  – Carroll & Charniak (1992)
  – Yuret (1998)
  – Paskin (2002)
  – Klein & Manning (2004)
Applications:
• Relation extraction: Culotta & Sorensen (2004)
• Machine translation: Ding & Palmer (2005)
• Language modeling: Chelba & Jelinek (1998)
• All kinds of lexical learning: Lin & Pantel (2001), inter alia
• Semantic role labeling: Carreras & Màrquez (2004)
• Textual entailment: Raina et al. (2005), inter alia
July 13, 2006 10

A Dependency Tree July 13, 2006 11

Our Model A (“DMV”) • Expressible as a SCFG • Can be viewed as a log-linear model with these features: – Root tag is U. – Tag U has a child tag V in direction D. – Tag U has no children in direction D. – Tag U has at least one child in direction D. – Tag U has only one child in direction D. – Tag U has a non-first child in direction D. July 13, 2006 12

Example Derivation of the Model
Tags: NNP NNP CD VBZ DT NN IN JJ JJ NN
Words: Mr. Smith 39 retains the title of chief financial officer
(Klein & Manning, 2004)
Features fired, in order:
• Root tag is VBZ.
• VBZ has a right child.
• VBZ has NN as right child.
• VBZ has only 1 right child.
• VBZ has a left child.
• VBZ has NNP as left child.
• VBZ has only 1 left child.
• NNP has a right child.
• NNP has CD as right child.
July 13, 2006 13
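
The feature list for Model A can be illustrated with a short sketch that counts features for one dependency tree. This is only an illustration, not the thesis implementation: the function name `dmv_features`, the feature strings, and the choice to fire the "non-first child" feature once per child after the first are assumptions. The slide specifies only part of the example tree, so the attachments for "the title of chief financial officer" in `heads` are also assumed.

```python
from collections import Counter

def dmv_features(tags, heads):
    """Count Model A-style features for one dependency tree.

    tags  -- list of part-of-speech tags, one per word
    heads -- heads[i] is the index of word i's parent, or -1 for the root
    """
    feats = Counter()
    for i, h in enumerate(heads):
        if h == -1:
            feats[f"Root tag is {tags[i]}"] += 1
    for p, tag in enumerate(tags):
        for direction in ("left", "right"):
            # Children of word p that lie on the requested side of it.
            kids = [c for c, h in enumerate(heads)
                    if h == p and ((c < p) == (direction == "left"))]
            if not kids:
                feats[f"{tag} has no {direction} children"] += 1
                continue
            feats[f"{tag} has a {direction} child"] += 1
            for c in kids:
                feats[f"{tag} has {tags[c]} as {direction} child"] += 1
            if len(kids) == 1:
                feats[f"{tag} has only 1 {direction} child"] += 1
            else:
                # Assumption: one firing per child after the first.
                feats[f"{tag} has a non-first {direction} child"] += len(kids) - 1
    return feats

tags = ["NNP", "NNP", "CD", "VBZ", "DT", "NN", "IN", "JJ", "JJ", "NN"]
# Indices 0-3 follow the slide; the remaining attachments are assumed.
heads = [1, 3, 1, -1, 5, 3, 5, 9, 9, 6]
feats = dmv_features(tags, heads)
```

With this tree, `feats` contains every feature listed on the slide, plus features for the parts of the tree the slide does not spell out.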

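Stochastic and Log-linear CFGs
Context-Free Grammar (production rules) + Rule weights $\vec{\theta}$ = Model
Stochastic CFG, for a sentence $x$ and tree $y$:
$$p_{\vec{\theta}}(x, y) = \prod_{\text{rule tokens } r \text{ in derivation}} e^{\theta_r} = \prod_{\text{rules } r} e^{\theta_r f_r(x, y)} = \exp\left(\vec{\theta} \cdot \vec{f}(x, y)\right)$$
Here the feature $f_r(x, y)$ counts the occurrences of rule $r$ in the derivation.
Log-linear CFG:
$$\ddot{p}_{\vec{\theta}}(x, y) \stackrel{\text{def}}{=} \frac{\exp\left(\vec{\theta} \cdot \vec{f}(x, y)\right)}{Z_{\vec{\theta}}(W)}$$
where $W$ is the set of all sentences and their trees.
July 13, 2006 14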
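
A minimal numeric check of the identity behind the stochastic CFG: multiplying $e^{\theta_r}$ over rule tokens gives the same value as exponentiating the dot product of weights and rule counts. The rule names and probabilities below are hypothetical, chosen only for illustration.

```python
import math
from collections import Counter

# Hypothetical rule weights: theta_r is the log of a rule probability.
theta = {
    "ROOT -> VBZ": math.log(0.5),
    "VBZ -> NNP VBZ": math.log(0.2),
    "VBZ -> VBZ NN": math.log(0.4),
}

# Hypothetical derivation, written as a list of rule tokens (one rule used twice).
derivation = ["ROOT -> VBZ", "VBZ -> NNP VBZ", "VBZ -> VBZ NN", "VBZ -> VBZ NN"]

def score_by_tokens(theta, derivation):
    """Product of exp(theta_r) over the rule tokens in the derivation."""
    return math.prod(math.exp(theta[r]) for r in derivation)

def score_by_features(theta, derivation):
    """exp(theta . f), where f_r counts how often rule r is used."""
    f = Counter(derivation)
    return math.exp(sum(theta[r] * count for r, count in f.items()))

p_tokens = score_by_tokens(theta, derivation)
p_features = score_by_features(theta, derivation)
```

Both values equal 0.5 × 0.2 × 0.4 × 0.4 up to floating-point error. The log-linear variant would divide by a normalizer over all sentences and trees, which this sketch does not compute.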

Model A is Very Simple!
• Connected, directed trees over tags.
  – Tag-tag relationships
  – Affine valency model
• Parsing: $O(n^5)$ naïve; $O(n^3)$ (Eisner & Satta, 1999)
• No sister effects, even on same side of parent.
• No grandparent effects.
• No lexical selection, subcategorization, anything.
• No distance effects.
July 13, 2006 15

Evaluation Treebank tree (gold standard) ✔ ✖ ✖ ✔ ✖ ✖ ✖ ✖ ✔ hypothesis tree Accuracy = 3 / (3 + 6) = 33.3% July 13, 2006 16

Evaluation Treebank tree (gold standard) ✔ ✖ ✔ ✔ ✖ ✔ ✖ ✖ ✔ hypothesis tree Undirected Accuracy = 5 / (5 + 4) = 55.6% July 13, 2006 17
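
The two evaluation measures can be sketched as follows, assuming each tree is stored as a list of head indices with -1 for the root (a representation chosen here for illustration). Undirected accuracy is taken to mean that a hypothesized attachment counts as correct if the gold tree links the same two words in either direction. The four-word trees are made up.

```python
def attachment_accuracy(gold, hyp, undirected=False):
    """Fraction of words whose hypothesized attachment matches the gold tree.

    gold, hyp -- lists where entry i is the head index of word i (-1 = root)
    """
    assert len(gold) == len(hyp)
    if undirected:
        # An edge is an unordered pair {child, head}.
        gold_edges = {frozenset((i, h)) for i, h in enumerate(gold)}
        correct = sum(frozenset((i, h)) in gold_edges for i, h in enumerate(hyp))
    else:
        correct = sum(g == h for g, h in zip(gold, hyp))
    return correct / len(gold)

# Made-up example: word 1 is the gold root; the hypothesis reverses one edge.
gold = [1, -1, 1, 2]
hyp = [1, 2, -1, 2]
directed = attachment_accuracy(gold, hyp)                      # 2 of 4 correct
undirected = attachment_accuracy(gold, hyp, undirected=True)   # 3 of 4 correct
```

The reversed edge between words 1 and 2 is wrong under directed accuracy but correct under undirected accuracy, which is why the second number is never lower than the first.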

Fixed Grammar, Learned Weights
• Context-Free Grammar (production rules): all dependency trees on all tag sequences can be derived.
• Rule weights $\vec{\theta}$: how do we learn the weights?
[Diagram: Context-Free Grammar + Rule weights $\vec{\theta}$ = Model]
July 13, 2006 18

Maximum Likelihood Estimation
$$\max_{\vec{\theta}} \; p_{\vec{\theta}}(\text{observed data})$$
• Supervised training: “observed data” are sentences with trees. For PCFGs there is a closed-form solution.
$$\max_{\vec{\theta}} \prod_{i=1}^{n} p_{\vec{\theta}}(x_i, y_i)$$
• Unsupervised training: “observed data” are sentences. Requires numerical optimization.
$$\max_{\vec{\theta}} \prod_{i=1}^{n} \sum_{y} p_{\vec{\theta}}(x_i, y)$$
The product over $i$ reflects independence among examples; the sum over $y$ marginalizes over trees.
July 13, 2006 19

Expectation-Maximization
• Hillclimber for the likelihood function.
• Quality of the estimate depends on the starting point.
[Figure: likelihood $p_{\vec{\theta}}(x)$ plotted against rule weights $\vec{\theta}$]
July 13, 2006 20

EM for Stochastic Grammars
• E step: compute expected rule counts for each sentence $x_j$ (with the Dynamic Programming Algorithm), adding them to $c_r$:
$$c_r \leftarrow c_r + E_{p_{\vec{\theta}^{(i)}}}\left[f_r(x_j, Y)\right]$$
• M step: renormalize counts into multinomial distributions:
$$\theta_r^{(i+1)} = \log \frac{c_r}{Z}$$
July 13, 2006 21

Experiment • WSJ10: 5300 part-of-speech sequences of length ≤ 10 • Words ignored, punctuation stripped • Three initializers: – Zero: all weights set to zero – K&M: Klein and Manning (2004), roughly – Local: Slight variation on K&M, more smoothed • 530 test sentences July 13, 2006 22

Experimental Results: MLE/EM

| Method | Accuracy (%) | Undirected Accuracy (%) | Iterations | Cross-Entropy |
|---|---|---|---|---|
| Attach-Left | 22.6 | 62.1 | 0 | - |
| Attach-Right | 39.5 | 62.1 | 0 | - |
| MLE/EM, Zero | 22.7 | 58.8 | 49 | 26.07 |
| MLE/EM, K&M | 41.7 | 62.1 | 62 | 25.16 |
| MLE/EM, Local | 22.8 | 58.9 | 49 | 26.07 |

July 13, 2006 23

Dirichlet Priors for PCFG Multinomials
• Simplest conceivable smoothing: add-λ
• Slight change to the M step, as if we saw each event an additional λ times:
$$\theta_r^{(i+1)} = \log \frac{c_r + \lambda}{Z}$$
This is Maximum a Posteriori estimation, or “MLE with a prior.”
How to pick λ?
July 13, 2006 24
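
A minimal sketch of the smoothed M step, assuming expected counts are stored in a dictionary keyed by (distribution, event); that layout, the function name `m_step`, and the example counts are illustrative assumptions. Setting `lam=0.0` gives the unsmoothed MLE update.

```python
import math
from collections import defaultdict

def m_step(expected_counts, lam=0.0):
    """Renormalize expected counts into log-probabilities, with add-lambda smoothing.

    expected_counts -- dict mapping (distribution_id, event) to an expected count
    lam             -- pseudo-count added to every event (0.0 gives plain MLE)
    """
    # Z for each multinomial: the sum of smoothed counts over its events.
    totals = defaultdict(float)
    for (dist, _event), c in expected_counts.items():
        totals[dist] += c + lam
    weights = {}
    for (dist, event), c in expected_counts.items():
        numerator = c + lam
        # With lam = 0 an unseen event gets probability zero (log-weight -inf).
        weights[(dist, event)] = (
            math.log(numerator / totals[dist]) if numerator > 0 else float("-inf")
        )
    return weights

# Hypothetical expected counts for one multinomial with two events.
counts = {("VBZ right child", "NN"): 3.0, ("VBZ right child", "NNP"): 1.0}
smoothed = m_step(counts, lam=1.0)
```

In this example the smoothed probabilities are 4/6 and 2/6: each event is treated as if it had been seen one extra time.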

Model Selection
[Diagram: train one model (rule weights) for each value of λ; keep the one that is best on the development dataset.]
• Supervised selection: best accuracy on annotated development data (presented in talk)
• Unsupervised selection: best likelihood on unannotated development data (given in thesis)
July 13, 2006 25

Model Selection
[Diagram: train one model (rule weights) for each value of λ; keep the one that is best on the development dataset.]
Advantages:
• Can re-select later for different applications/datasets.
Disadvantages:
• Lots of models to train!
• Still have to decide which λ values to train with.
July 13, 2006 26
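
The selection procedure amounts to a grid search, sketched below. The function `select_model` and its `train` and `dev_score` arguments are hypothetical placeholders: `train` stands for a full EM run, and `dev_score` is accuracy on annotated development data for supervised selection or likelihood on unannotated development data for unsupervised selection. The toy stand-ins at the bottom exist only to make the sketch runnable and do not reflect real results.

```python
def select_model(lambdas, initializers, train, dev_score):
    """Train one model per (lambda, initializer) pair; return the best on dev data.

    Returns a tuple (score, lambda, initializer, model).
    """
    best = None
    for lam in lambdas:
        for init in initializers:
            model = train(lam, init)
            score = dev_score(model)
            if best is None or score > best[0]:
                best = (score, lam, init, model)
    return best

# Toy stand-ins (not real training or evaluation).
def toy_train(lam, init):
    return {"lam": lam, "init": init}

def toy_dev_score(model):
    bonus = 0.1 if model["init"] == "K&M" else 0.0
    return -abs(model["lam"] - 1.0) + bonus

best = select_model([0.01, 0.1, 1.0, 10.0], ["Zero", "K&M", "Local"],
                    toy_train, toy_dev_score)
```

Because every trained model is kept in the loop, the same set of models can be re-scored later with a different `dev_score`, which is the "re-select later" advantage noted on the slide.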

Experimental Results: MAP/EM

| Method | Accuracy (%) | Undirected Accuracy (%) | Iterations | Cross-Entropy |
|---|---|---|---|---|
| Attach-Right | 39.5 | 62.1 | 0 | - |
| MLE/EM, Zero | 22.7 | 58.8 | 49 | 26.07 |
| MLE/EM, K&M | 41.7 | 62.1 | 62 | 25.16 |
| MLE/EM, Local | 22.8 | 58.9 | 49 | 26.07 |
| MAP/EM (sel. λ, initializer) | 41.6 | 62.2 | 49 | 25.54 |

July 13, 2006 27

“Typical” Trees Treebank learned model July 13, 2006 28

Good and Bad News About Likelihood July 13, 2006 29

Selection over Random Initializers July 13, 2006 30

On Aesthetics
• Hyperparameters should be interpretable.
• Reasonable initializers should perform reasonably. These are a form of domain knowledge that should help, not hurt performance.
• If all else fails, the “Zero” (maxent) initializer should perform well.
Can we have both?
July 13, 2006 31

Where are we?
[Outline: Learning To Parse · Learning = Optimizing a Function · Improving the Function]
July 13, 2006 32

Likelihood as Teacher Red leaves don’t hide blue jays. Mommy doesn’t love you. Dishwashers are a dime a dozen. Dancing granola doesn’t hide blue jays. July 13, 2006 33

Probability Allocation
[Figure: the observed sentences as a subset of $\Sigma^*$]
July 13, 2006 34