Linux Kernel Synchronization


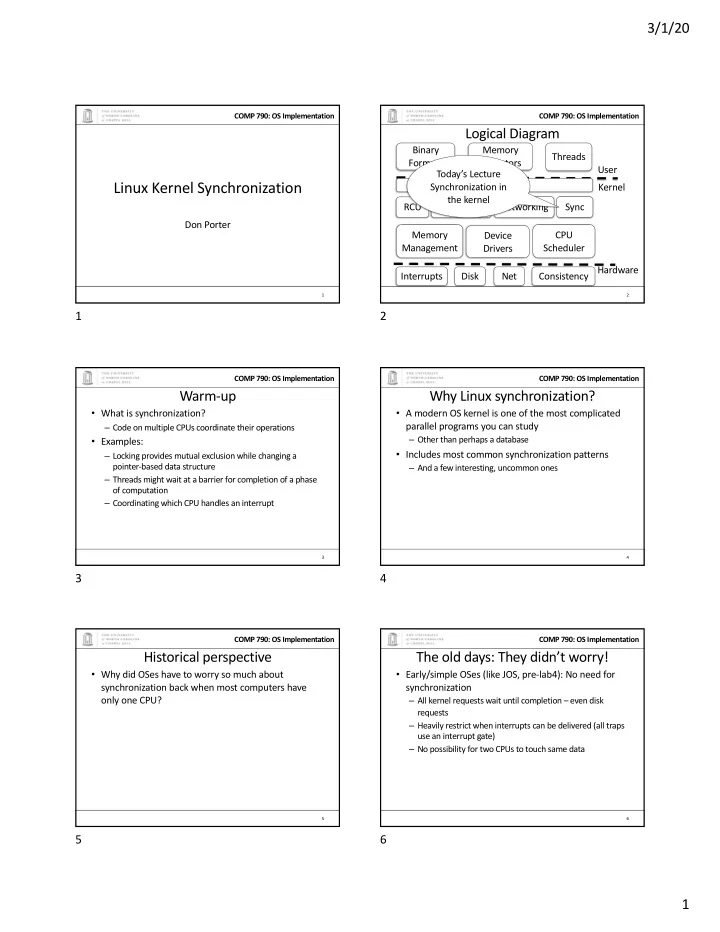

COMP 790: OS Implementation (3/1/20)

Linux Kernel Synchronization
Don Porter

Logical Diagram
[Figure: logical diagram of the OS. User level: binary formats, memory allocators, threads. Kernel: system calls, RCU, file system, networking, sync, memory management, CPU scheduler, device drivers. Hardware: interrupts, disk, net, consistency. Today's lecture: synchronization in the kernel.]

Warm-up
• What is synchronization?
  – Code on multiple CPUs coordinates its operations
• Examples:
  – Locking provides mutual exclusion while changing a pointer-based data structure
  – Threads might wait at a barrier for completion of a phase of computation
  – Coordinating which CPU handles an interrupt

Why Linux synchronization?
• A modern OS kernel is one of the most complicated parallel programs you can study
  – Other than perhaps a database
• Includes most common synchronization patterns
  – And a few interesting, uncommon ones

Historical perspective
• Why did OSes have to worry so much about synchronization back when most computers had only one CPU?

The old days: They didn't worry!
• Early/simple OSes (like JOS, pre-lab4): No need for synchronization
  – All kernel requests wait until completion, even disk requests
  – Heavily restrict when interrupts can be delivered (all traps use an interrupt gate)
  – No possibility for two CPUs to touch the same data

Slightly more recently
• Optimize kernel performance by blocking inside the kernel
• Example: Rather than wait on expensive disk I/O, block and schedule another process until it completes
  – Cost: A bit of implementation complexity
    • Need a lock to protect against concurrent update to pages/inodes/etc. involved in the I/O
    • Could be accomplished with relatively coarse locks
    • Like the Big Kernel Lock (BKL)
  – Benefit: Better CPU utilization

A slippery slope
• We can enable interrupts during system calls
  – More complexity, lower latency
• We can block in more places that make sense
  – Better CPU usage, more complexity
• Concurrency was an optimization for really fancy OSes, until…

The forcing function
• Multi-processing
  – CPUs aren't getting faster, just smaller
  – So you can put more cores on a chip
• The only way software (including kernels) will get faster is to do more things at the same time

Performance Scalability
• How much more work can this software complete in a unit of time if I give it another CPU?
  – Same: No scalability; the extra CPU is wasted
  – 1 -> 2 CPUs doubles the work: Perfect scalability
• Most software isn't scalable
• Most scalable software isn't perfectly scalable

Performance Scalability
[Figure: Execution Time (s) vs. CPUs (1 to 4) for three cases: Perfect Scalability, Not Scalable, Somewhat scalable. Annotation: "Ideal: Time halves with 2x CPUs".]

Performance Scalability (more visually intuitive)
[Figure: Performance, plotted as 1 / Execution Time (s), vs. CPUs (1 to 4) for the same three cases. Annotation: "Slope = 1 == perfect scaling".]
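The scalability question on the slides above ("how much more work if I give it another CPU?") can be written as a few simple ratios. The following is a minimal sketch; the function names are illustrative and do not come from the lecture.

```c
#include <assert.h>

/* Speedup on n CPUs relative to one CPU: T(1) / T(n).
 * 1.0 means the extra CPUs were wasted; n means perfect scalability. */
double speedup(double t1, double tn)
{
    assert(t1 > 0.0 && tn > 0.0);
    return t1 / tn;
}

/* Fraction of the ideal speedup actually achieved: speedup / n.
 * 1.0 corresponds to perfect scalability. */
double efficiency(double t1, double tn, int ncpus)
{
    assert(ncpus > 0);
    return speedup(t1, tn) / ncpus;
}

/* Total CPU time consumed: T(n) * n.
 * Under perfect scalability this stays constant as n grows. */
double cpu_seconds(double tn, int ncpus)
{
    return tn * ncpus;
}
```

For example, a job that takes 12 s on one CPU and 6 s on two has a speedup of 2 and an efficiency of 1; if it still takes 12 s on two CPUs, the efficiency drops to 0.5.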

Performance Scalability (a 3rd visual)
[Figure: Execution Time (s) * CPUs vs. CPUs (1 to 4) for three cases: Perfect Scalability, Not Scalable, Somewhat scalable. Annotation: "Slope = 0 == perfect scaling".]

Coarse vs. Fine-grained locking
• Coarse: A single lock for everything
  – Idea: Before I touch any shared data, grab the lock
  – Problem: completely unrelated operations wait on each other
    • Adding CPUs doesn't improve performance

Fine-grained locking
• Fine-grained locking: Many "little" locks for individual data structures
  – Goal: Unrelated activities hold different locks
    • Hence, adding CPUs improves performance
  – Cost: complexity of coordinating locks

Current Reality
[Figure: Performance vs. Complexity. Fine-grained locking sits at high performance and high complexity; coarse-grained locking sits at low performance and low complexity.]
• Unsavory trade-off between complexity and performance scalability

How do locks work?
• Two key ingredients:
  – A hardware-provided atomic instruction
    • Determines who wins under contention
  – A waiting strategy for the loser(s)

Atomic instructions
• A "normal" instruction can span many CPU cycles
  – Example: 'a = b + c' requires 2 loads and a store
  – These loads and stores can interleave with other CPUs' memory accesses
• An atomic instruction guarantees that the entire operation is not interleaved with any other CPU
  – x86: Certain instructions can have a 'lock' prefix
  – Intuition: This CPU 'locks' all of memory
  – Expensive! Not ever used automatically by a compiler; must be explicitly used by the programmer
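The difference between a "normal" read-modify-write and an atomic one can be sketched in portable C11. This is an illustration only: `plain_add` and `shared_add` are hypothetical names, and the exact instruction a compiler emits for `atomic_fetch_add` depends on the target (on x86 it is typically a lock-prefixed instruction).

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>

/* Ordinary update: a load, an add, and a store. Another CPU's store to *x
 * can land between our load and our store, and would then be overwritten. */
void plain_add(int *x, int delta)
{
    int tmp = *x;      /* load */
    tmp = tmp + delta; /* add in a register */
    *x = tmp;          /* store */
}

/* Atomic update: the load, add, and store happen as one indivisible step.
 * The programmer asks for this explicitly by using an atomic type.
 * Returns the value *x held just before the add. */
int shared_add(atomic_int *x, int delta)
{
    assert(x != NULL);
    return atomic_fetch_add(x, delta);
}
```

Both functions give the same result when only one thread runs; they differ only when several CPUs update the same variable concurrently.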

Atomic instruction examples
• Atomic increment/decrement (x++ or x--)
  – Used for reference counting
  – Some variants also return the value x was set to by this instruction (useful if another CPU immediately changes the value)
• Compare and swap
  – if (x == y) x = z;
  – Used for many lock-free data structures

Atomic instructions + locks
• Most lock implementations have some sort of counter
• Say initialized to 1
• To acquire the lock, use an atomic decrement
  – If you set the value to 0, you win! Go ahead
  – If you get < 0, you lose. Wait.
  – Atomic decrement ensures that only one CPU will decrement the value to zero
• To release, set the value back to 1

Waiting strategies
• Spinning: Just poll the atomic counter in a busy loop; when it becomes 1, try the atomic decrement again
• Blocking: Create a kernel wait queue and go to sleep, yielding the CPU to more useful work
  – Winner is responsible to wake up losers (in addition to setting lock variable to 1)
  – Create a kernel wait queue, the same thing used to wait on I/O
    • Note: Moving to a wait queue takes you out of the scheduler's run queue

Which strategy to use?
• Main consideration: Expected time waiting for the lock vs. time to do 2 context switches
  – If the lock will be held a long time (like while waiting for disk I/O), blocking makes sense
  – If the lock is only held momentarily, spinning makes sense
• Other, subtle considerations we will discuss later

Linux lock types
• Blocking: mutex, semaphore
• Non-blocking: spinlocks, seqlocks, completions

Linux spinlock (simplified)

    1: lock; decb slp->slock   // Locked decrement of lock var
       jns 3f                  // Jump if sign flag not set (result is zero,
                               // not negative) to 3
    2: pause                   // Low power instruction, wakes on
                               // coherence event
       cmpb $0,slp->slock      // Read the lock value, compare to zero
       jle 2b                  // If less than or equal (to zero), goto 2
       jmp 1b                  // Else jump to 1 and try again
    3:                         // We win the lock
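The counter protocol and the simplified spinlock above can be approximated in portable C11. This is a sketch under stated assumptions, not the kernel's implementation: `sketch_lock_t` and the `sketch_*` functions are hypothetical names, the counter is a full `int` rather than a byte, and the empty inner loop stands in for the `pause` hint.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Hypothetical lock type: counter is 1 when free, <= 0 when held. */
typedef struct {
    atomic_int counter;
} sketch_lock_t;

void sketch_lock_init(sketch_lock_t *l)
{
    atomic_init(&l->counter, 1);
}

/* One acquisition attempt: an atomic decrement. atomic_fetch_sub returns
 * the value before the decrement, so we won only if we moved 1 -> 0. */
bool sketch_trylock(sketch_lock_t *l)
{
    return atomic_fetch_sub(&l->counter, 1) == 1;
}

/* Spin until acquired. The outer loop writes (atomic decrement); the inner
 * loop only reads, waiting for the counter to become positive again. */
void sketch_lock(sketch_lock_t *l)
{
    while (!sketch_trylock(l)) {
        while (atomic_load(&l->counter) <= 0) {
            /* busy-wait; a real implementation would issue a pause hint */
        }
    }
}

/* Release: set the counter back to 1, discarding failed decrements. */
void sketch_unlock(sketch_lock_t *l)
{
    assert(atomic_load(&l->counter) <= 0); /* must currently be held */
    atomic_store(&l->counter, 1);
}
```

A caller would bracket its critical section with `sketch_lock(&l)` and `sketch_unlock(&l)`. The default sequentially consistent atomics are used for simplicity; a tuned lock would likely choose weaker memory orderings.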

Rough C equivalent

    while (0 != atomic_dec(&lock->counter)) {
        do {
            // Pause the CPU until some coherence
            // traffic (a prerequisite for the counter
            // changing) saving power
        } while (lock->counter <= 0);
    }

Why 2 loops?
• Functionally, the outer loop is sufficient
• Problem: Attempts to write this variable invalidate it in all other caches
  – If many CPUs are waiting on this lock, the cache line will bounce between CPUs that are polling its value
    • This is VERY expensive and slows down EVERYTHING on the system
  – The inner loop read-shares this cache line, allowing all polling in parallel
• This pattern is called a Test&Test&Set lock (vs. Test&Set)

Test & Set Lock
[Figure: CPU 0 has the lock. CPU 1 and CPU 2 each spin in while (0 != atomic_dec(&lock->counter)). Every atomic_dec forces the other cache to write back and evict the line holding address 0x1000, over the memory bus to RAM. Caption: Cache line "ping-pongs" back and forth.]

Test & Test & Set Lock
[Figure: CPU 0 has the lock and unlocks by writing 1. CPU 1 and CPU 2 each spin in while (lock->counter <= 0), reading address 0x1000 from their own caches. Caption: Line shared in read mode until unlocked.]

Reader/writer locks
• Simple optimization: If I am just reading, we can let other readers access the data at the same time
  – Just no writers
• Writers require mutual exclusion
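The reader/writer rule above (many readers or one writer) can be sketched with a single atomic state word updated by compare and swap. This is an assumption-laden illustration, not how Linux implements its reader/writer locks: `sketch_rwlock_t` and the `sketch_*` names are hypothetical, only try-lock operations are shown, and writer starvation is not addressed.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>

/* Hypothetical state encoding: 0 = free, n > 0 = n readers, -1 = one writer. */
typedef struct {
    atomic_int state;
} sketch_rwlock_t;

void sketch_rw_init(sketch_rwlock_t *rw)
{
    atomic_init(&rw->state, 0);
}

/* A reader may enter as long as no writer holds the lock. */
bool sketch_read_trylock(sketch_rwlock_t *rw)
{
    int s = atomic_load(&rw->state);
    while (s >= 0) {
        /* Compare and swap: if state still equals s, make it s + 1.
         * On failure, s is refreshed with the current value and we retry. */
        if (atomic_compare_exchange_weak(&rw->state, &s, s + 1))
            return true;
    }
    return false; /* a writer holds the lock */
}

void sketch_read_unlock(sketch_rwlock_t *rw)
{
    int prev = atomic_fetch_sub(&rw->state, 1);
    assert(prev > 0); /* at least one reader must have been inside */
    (void)prev;
}

/* A writer needs mutual exclusion: it may enter only from the free state. */
bool sketch_write_trylock(sketch_rwlock_t *rw)
{
    int expected = 0;
    return atomic_compare_exchange_strong(&rw->state, &expected, -1);
}

void sketch_write_unlock(sketch_rwlock_t *rw)
{
    atomic_store(&rw->state, 0);
}
```

A blocking or spinning lock would wrap these try-lock calls in one of the waiting strategies described earlier.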
