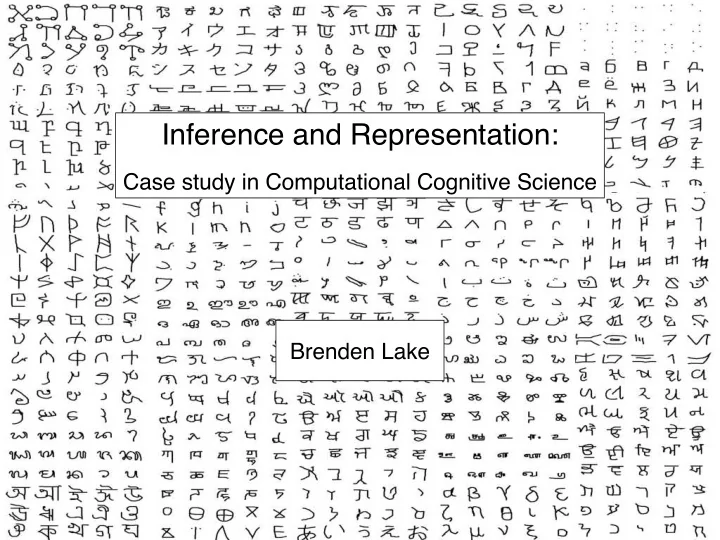

Inference and Representation: Case study in Computational Cognitive Science

Brenden Lake

Learning classifiers in cognitive science: concept learning (cognitive science) = classification (data science)

“one-shot learning”, generating new examples, generating new concepts, parsing

[Figure: example alphabets: Sanskrit, Tagalog, Latin, Braille, Balinese, Hebrew, Angelic, Alphabet of the Magi, Futurama, ULOG]

[Figure: three original images, each shown with 20 people’s strokes; the numbers mark each drawer’s stroke order]


[Figure: generative hierarchy, panels A and B. Type level: i) primitives, ii) sub-parts, iii) parts, iv) object template, with relations between parts (attached along, attached along, attached at start). Token level: v) exemplars, vi) raw data.]


generating new examples

Which grid is produced by the model? (four slides, each showing four A/B pairs of grids)


generating new concepts

Which grid is produced by the model? (four slides, each showing three A/B pairs of grids)

[Figure: alphabets of characters shown alongside new machine-generated characters in each alphabet]

[Figure: model overview. Primitives (1D curvelets, 2D patches, 3D geons, actions, sounds, etc.) combine into sub-parts and parts; parts are joined by relations ("connected at"); the result generates exemplars and raw data.]

Bayes’ rule: infer the latent variables from the raw binary image by combining the renderer with a prior on parts, relations, etc.

Type level:

- Sample number of parts
- Sample number of sub-parts
- Sample sequence of sub-parts
- Sample relation
- Return handle to a stochastic program

Token level:

- Add motor variance
- Sample part’s start location
- Compose a part’s pen trajectory
- Sample affine transform
- Render and sample the binary image
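The sampling steps above can be sketched as a two-stage program. This is a toy illustration only, not the model from the slides: the distributions in `P_NUM_PARTS`, the uniform choices, the noise scales, and every function name are hypothetical placeholders, and the final rendering step to a binary image is omitted.

```python
import random

# Hypothetical toy distributions; a real model would learn these from data.
P_NUM_PARTS = {1: 0.5, 2: 0.3, 3: 0.2}
RELATIONS = ["independent", "attached at start", "attached at end", "attached along"]
NUM_PRIMITIVES = 1250  # library size quoted on the slides


def sample_categorical(dist, rng):
    """Draw one key from a {value: probability} dictionary."""
    values = list(dist)
    return rng.choices(values, weights=[dist[v] for v in values], k=1)[0]


def generate_type(rng):
    """Type level: sample the abstract structure of a character."""
    kappa = sample_categorical(P_NUM_PARTS, rng)  # number of parts
    parts = []
    for i in range(kappa):
        n_sub = rng.randint(1, 3)  # number of sub-parts (assumed uniform on 1..3)
        # Sequence of sub-parts, each an index into the primitive library.
        sub_parts = [rng.randrange(NUM_PRIMITIVES) for _ in range(n_sub)]
        # The first part has nothing to attach to, so treat it as independent.
        relation = "independent" if i == 0 else rng.choice(RELATIONS)
        parts.append({"sub_parts": sub_parts, "relation": relation})

    def generate_token():
        """Token level: one noisy rendition of this type."""
        strokes = []
        for part in parts:
            # Motor variance: pair each primitive id with a small random jitter.
            noisy = [(pid, rng.gauss(0.0, 0.1)) for pid in part["sub_parts"]]
            start = (rng.random(), rng.random())  # start location in the unit square
            strokes.append({"start": start, "trajectory": noisy})
        # Global affine transform (scale and shift only in this sketch).
        affine = {"scale": rng.gauss(1.0, 0.05),
                  "shift": (rng.gauss(0.0, 0.02), rng.gauss(0.0, 0.02))}
        return {"strokes": strokes, "affine": affine}

    # Returning the closure mirrors "return handle to a stochastic program".
    return parts, generate_token


rng = random.Random(0)
parts, generate_token = generate_type(rng)
token_a = generate_token()
token_b = generate_token()  # same type, different token-level noise
```

Calling `generate_token` repeatedly yields different exemplars of the same character type, which is the type/token split the list describes.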

learned action primitives; learned primitive transitions (from a seed primitive)

1250 primitives: scale selective, translation invariant

[Figure: number of strokes. x-axis: number of strokes (1 to 10); y-axis: frequency (1000 to 5000)]

[Figure: stroke start positions. Start position for strokes in each position (panels by stroke position, including 1, 2, and ≥ 3)]

[Figure: global transformations]

[Figure: number of sub-strokes for a character with κ strokes, one panel each for κ = 1 to κ = 10. x-axis: number of sub-strokes (1 to 10); y-axis: probability (0.5, 1)]

relations between strokes: independent (34%), attached at start (5%), attached at end (11%), attached along (50%)
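As a small illustration of how the relation frequencies above could serve as a prior, the sketch below draws relation types in proportion to the listed percentages. Treating the percentages as a categorical prior is an assumption made here for illustration; `RELATION_FREQ` and `sample_relation` are hypothetical names.

```python
import random
from collections import Counter

# Frequencies as listed on the slide, read as categorical probabilities (an assumption).
RELATION_FREQ = {
    "independent": 0.34,
    "attached at start": 0.05,
    "attached at end": 0.11,
    "attached along": 0.50,
}


def sample_relation(rng):
    """Draw one relation type in proportion to its listed frequency."""
    names = list(RELATION_FREQ)
    weights = [RELATION_FREQ[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


rng = random.Random(0)
counts = Counter(sample_relation(rng) for _ in range(10_000))
```

With enough draws, the empirical counts in `counts` should roughly track the four percentages.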

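[Figure: inference runs from the raw data (raw binary image) back to the latent variables: strokes, sub-strokes, primitives, and connected relations.]

Bayes’ rule, with the renderer as the likelihood and a prior on programs:

$$P(\theta \mid I) = \frac{P(I \mid \theta)\, P(\theta)}{P(I)}$$

Discrete (K=5) approximation to posterior:

$$P(\theta \mid I) \approx \sum_{i=1}^{K} w_i\, \delta(\theta - \theta^{[i]}) \quad \text{such that} \quad \sum_{i=1}^{K} w_i = 1$$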

Intuition: Fit strokes to the observed pixels as closely as possible, with these constraints:

[Figure: binary image, thinned image, planning, cleaned]

Step 1: characters as undirected graphs

Step 2: guided random parses
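To make Steps 1 and 2 concrete, here is a minimal sketch of one way to turn an undirected graph into a candidate parse: a random walk that covers every edge once, starting a new stroke whenever it gets stuck. It is a simplification under stated assumptions: the walk here chooses uniformly at random, whereas the slides describe guided parses, and the function name `random_parse`, the edge-list input format, and the absence of self-loops are all assumed for illustration.

```python
import random


def random_parse(edges, rng):
    """Cover every edge of an undirected graph with a list of strokes (node paths).

    Assumes node labels are mutually comparable and the graph has no self-loops.
    """
    unvisited = {frozenset(edge) for edge in edges}
    strokes = []
    while unvisited:
        # Start a new stroke on a randomly chosen remaining edge.
        first = rng.choice(sorted(unvisited, key=sorted))
        a, b = sorted(first)
        if rng.random() < 0.5:  # random stroke direction
            a, b = b, a
        unvisited.remove(first)
        stroke = [a, b]
        while True:
            here = stroke[-1]
            # Unvisited edges that continue from the current pen position.
            options = sorted((e for e in unvisited if here in e), key=sorted)
            if not options:
                break  # stuck: lift the pen and begin another stroke
            nxt = rng.choice(options)
            unvisited.remove(nxt)
            (other,) = nxt - {here}
            stroke.append(other)
        strokes.append(stroke)
    return strokes


# A small T-shaped character: three segments meeting at node "B".
example_edges = [("A", "B"), ("B", "C"), ("B", "D")]
example_strokes = random_parse(example_edges, random.Random(1))
```

Running the walk many times with different seeds gives a pool of candidate parses, which could then be scored and refined as in the later steps.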

[Figure: candidate parses of a character with numbered strokes. Five three-stroke parses are scored by log-probability: −531, −560, −568, −582, −588]
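The five log-probabilities above (−531, −560, −568, −582, −588) match the K=5 size of the discrete posterior approximation, so they make a convenient worked example of normalizing weights. The sketch assumes each weight is proportional to the exponentiated log-probability of its parse; the slides only state that the weights sum to one, so that proportionality and the function name are assumptions.

```python
import math

# Log-probabilities of the five parses shown on the slide.
log_scores = [-531.0, -560.0, -568.0, -582.0, -588.0]


def normalize_log_weights(scores):
    """Turn log-scores into weights that sum to 1, subtracting the max for stability."""
    top = max(scores)
    unnormalized = [math.exp(s - top) for s in scores]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]


weights = normalize_log_weights(log_scores)
```

Because the best parse leads the runner-up by 29 nats, almost all of the posterior mass lands on the first parse under this assumption. Subtracting the maximum before exponentiating avoids underflow, since `math.exp(-531.0)` on its own evaluates to zero in double precision.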

Step 3: Top-down fitting with gradient-based optimization

[Figure: panels a), b), c); an axis runs from more likely to less likely]

Which class is image I in?

[Figure: image I is fit against Class 1 and Class 2; log-probability scores shown: −758, −1.88e+03, −778 (highlighted), −1.88e+03]
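The classification question can be read as picking the class whose fit to image I scores highest. The sketch below shows only that decision rule. The pairing of scores to classes is hypothetical, since the slide text does not make the mapping clear, and `classify` is an illustrative name.

```python
def classify(log_scores_by_class):
    """Return the class label with the highest log-probability score."""
    return max(log_scores_by_class, key=log_scores_by_class.get)


# Hypothetical pairing of scores to classes, with magnitudes echoing the slide.
example_scores = {"Class 1": -758.0, "Class 2": -1880.0}
predicted = classify(example_scores)
```

Log-probabilities are negative, so the "highest" score is the one closest to zero.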

Human or Machine?

- One-shot classification (20-way): 4.5% human error rate, 3.2% machine error rate
- Generating new examples: 51% Identification (ID) Level [% judges who correctly ID machine vs. human]
- Generating new examples (dynamic): 59% ID Level
- Generating new concepts (unconstrained) and generating new concepts (from type), each given an alphabet: ID Levels of 49% and 51%
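The ID Level is defined above as the percentage of judges who correctly identify machine versus human, so 50% corresponds to chance. A minimal sketch of that computation follows; the judgment data is made up for illustration and `id_level` is a hypothetical helper name.

```python
def id_level(judgments):
    """Percentage of judgments that correctly identified the machine.

    `judgments` is a non-empty list of booleans, True meaning a correct ID.
    """
    return 100.0 * sum(judgments) / len(judgments)


# Made-up data: 10 judges, 5 of them correct, which is exactly chance level.
example_judgments = [True, False, True, False, True, False, True, False, True, False]
example_level = id_level(example_judgments)
```

An ID Level near 50% means judges could not tell the model's output from human drawings.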

[Figure: bar charts comparing People; the Bayesian Program Learning models BPL, BPL Lesion (no compositionality), and BPL Lesion (no learning-to-learn); and the Deep Learning models (no causality) Deep Siamese Convnet, Hierarchical Deep, and Deep Convnet. One panel: classification error rate for one-shot classification (20-way). Other panel: Identification (ID) Level (% judges who correctly ID machine vs. human) for generating new exemplars, new exemplars (dynamic), new concepts (from type), and new concepts (unconstrained); axis from 40 to 85, with chance marked.]
