
- 02 Information theory

02.04 Special encodings

- Error models

- Parity bits

- Hamming codes

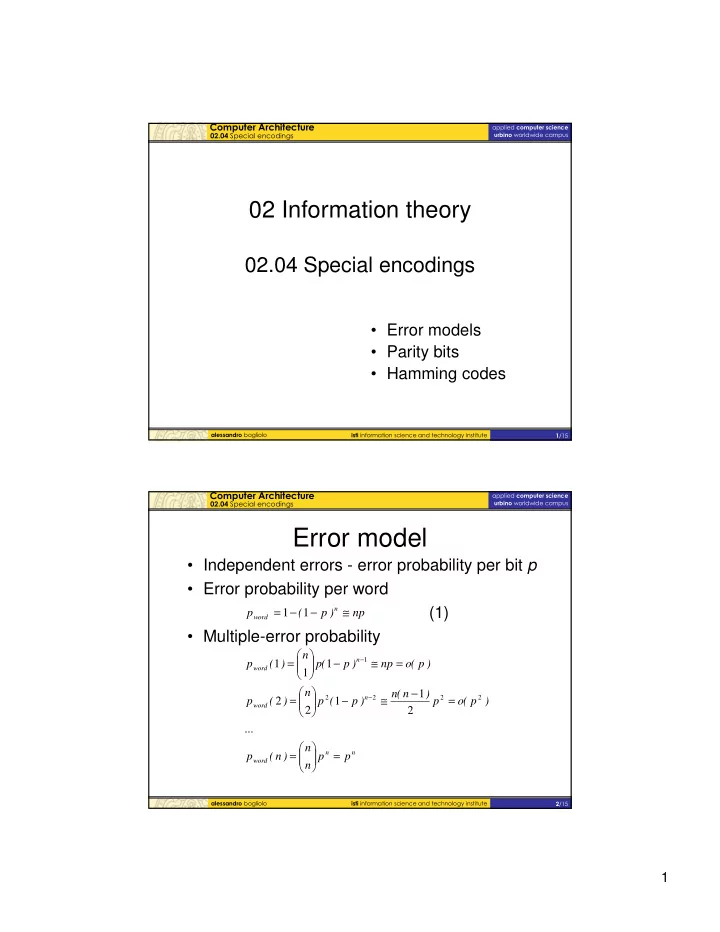

- Error model

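- Independent errors: error probability per bit p

- Error probability per word (word of n bits)

$$p_{\text{word}} = 1 - (1-p)^n \cong np \qquad (1)$$

- Multiple-error probability (exactly k errors in a word)

$$p_{\text{word}}(1) = np\,(1-p)^{n-1} \cong np$$

$$p_{\text{word}}(2) = \frac{n(n-1)}{2}\,p^2(1-p)^{n-2} \cong \frac{n^2p^2}{2}$$

$$\cdots$$

$$p_{\text{word}}(n) = p^n$$

The approximations hold when np ≪ 1, so single errors dominate and double errors are roughly np/2 times less likely.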
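The word-error formulas can be checked numerically with a minimal Python sketch. It assumes the independent-error model above and uses the binomial term C(n, k) p^k (1-p)^(n-k) as the general case behind p_word(1), p_word(2), …, p_word(n). The function names `p_word` and `p_word_k` and the example values n = 8, p = 10^-3 are hypothetical choices for illustration. They do not come from the slides.

```python
from math import comb


def p_word(n: int, p: float) -> float:
    """Probability that an n-bit word has at least one error.

    Assumes independent bit errors, each with probability p (equation (1)).
    """
    return 1.0 - (1.0 - p) ** n


def p_word_k(n: int, p: float, k: int) -> float:
    """Probability of exactly k bit errors in an n-bit word (binomial term)."""
    return comb(n, k) * p**k * (1.0 - p) ** (n - k)


if __name__ == "__main__":
    # Hypothetical example values: 8-bit word, bit error probability 1e-3
    n, p = 8, 1e-3
    print(f"p_word exact     = {p_word(n, p):.6e}")
    print(f"p_word approx np = {n * p:.6e}")
    print(f"p_word(1)        = {p_word_k(n, p, 1):.6e}")  # close to np
    print(f"p_word(2)        = {p_word_k(n, p, 2):.6e}")  # close to n^2 p^2 / 2 for large n
    print(f"p_word(n)        = {p_word_k(n, p, n):.6e}")  # exactly p^n
    # Consistency check: P(at least one error) equals the sum over k = 1..n
    total = sum(p_word_k(n, p, k) for k in range(1, n + 1))
    print(f"sum of p_word(k) = {total:.6e}")
```

The ratio p_word(2) / p_word(1) = (n-1)p / (2(1-p)). This is why, for small np, a code that handles single errors (such as a parity bit detecting them) already covers most of the erroneous words.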