CISC 4631 Data Mining Lecture 11: Neural Networks


Biological Motivation
• Can we simulate the human learning process? Two schools:
  – modeling the biological learning process
  – obtaining highly effective algorithms, independent of whether these algorithms mirror biological processes (this course)
• Biological learning system (brain)
  – complex network of neurons
  – ANNs are loosely motivated by biological neural systems. However, many features of ANNs are inconsistent with biological systems.

Neural Speed Constraints
• Neurons have a “switching time” on the order of a few milliseconds, compared to nanoseconds for current computing hardware.
• However, neural systems can perform complex cognitive tasks (vision, speech understanding) in tenths of a second.
• That leaves time for only about 100 serial steps, compared to orders of magnitude more for current computers.
• The brain must therefore be exploiting “massive parallelism.”
• The human brain has about $10^{11}$ neurons with an average of $10^{4}$ connections each.
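As a rough check of the figures on this slide, assume a switching time of about 1 ms and a task time of about 0.1 s. Both are round values picked for illustration from "a few milliseconds" and "tenths of a second":

```latex
\[
\frac{0.1\ \text{s}}{10^{-3}\ \text{s per step}} = 100 \text{ serial steps},
\qquad
10^{11} \text{ neurons} \times 10^{4} \text{ connections per neuron} = 10^{15} \text{ connections}.
\]
```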

Artificial Neural Networks (ANN)
• ANN: network of simple units
  – real-valued inputs & outputs
• Many neuron-like threshold switching units
• Many weighted interconnections among units
• Highly parallel, distributed process
• Emphasis on tuning weights automatically

Neural Network Learning
• Learning approach based on modeling adaptation in biological neural systems.
• Perceptron: initial algorithm for learning simple (single-layer) neural networks, developed in the 1950s.
• Backpropagation: more complex algorithm for learning multi-layer neural networks, developed in the 1980s.

Real Neurons

How Does Our Brain Work?
• A neuron is connected to other neurons via its input and output links.
• Each incoming neuron has an activation value, and each connection has a weight associated with it.
• The neuron sums the incoming weighted values, and this sum is the input to an activation function.
• The output of the activation function is the output of the neuron.
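The weighted-sum-then-activation description above can be sketched in a few lines of Python. The function names, the example weights, and the 0.5 threshold are illustrative assumptions, not values from the lecture:

```python
def step(net, threshold=0.5):
    """Hypothetical activation function: fire (1) once the sum reaches the threshold."""
    return 1 if net >= threshold else 0


def neuron_output(inputs, weights, activation):
    """Sum the incoming weighted activation values, then apply the activation function."""
    net = sum(w * x for w, x in zip(weights, inputs))
    return activation(net)


# Three incoming neurons with activations 1, 0, 1 and made-up connection weights.
out = neuron_output([1, 0, 1], [0.4, 0.9, 0.3], step)
print(out)
```

Only the first and third inputs are active here, so the sum is about 0.7, which clears the assumed threshold.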

Neural Communication
• The electrical potential across the cell membrane exhibits spikes called action potentials.
• A spike originates in the cell body, travels down the axon, and causes synaptic terminals to release neurotransmitters.
• The chemical diffuses across the synapse to dendrites of other neurons.
• Neurotransmitters can be excitatory or inhibitory.
• If the net input of neurotransmitters to a neuron from other neurons is excitatory and exceeds some threshold, it fires an action potential.

Real Neural Learning
• To model the brain we need to model a neuron.
• Each neuron performs a simple computation:
  – It receives signals from its input links and uses these values to compute the activation level (or output) for the neuron.
  – This value is passed to other neurons via its output links.

Prototypical ANN
• Units interconnected in layers
  – directed, acyclic graph (DAG)
• Network structure is fixed
  – learning = weight adjustment
  – backpropagation algorithm

Appropriate Problems
• Instances: vectors of attributes
  – discrete or real values
• Target function
  – discrete, real, vector
  – ANNs can handle classification & regression
• Noisy data
• Long training times acceptable
• Fast evaluation
• No need to be readable
  – It is almost impossible to interpret neural networks except for the simplest target functions

Perceptrons
• The perceptron is a type of artificial neural network that can be seen as the simplest kind of feedforward neural network: a linear classifier.
• Introduced in the late 1950s
• Perceptron convergence theorem (Rosenblatt 1962):
  – A perceptron will learn to classify any linearly separable set of inputs.
• A perceptron is a network that is:
  – single-layer
  – feed-forward: data only travels in one direction
[Figure: XOR function (no linear separation)]

ALVINN drives 70 mph on highways
See the ALVINN video.

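Artificial Neuron Model
• Model the network as a graph with cells as nodes and synaptic connections as weighted edges from node $i$ to node $j$, with weight $w_{ji}$.
• Model the net input to cell $j$ as
  $net_j = \sum_i w_{ji}\, o_i$
• Cell output is
  $o_j = \begin{cases} 0 & \text{if } net_j < T_j \\ 1 & \text{if } net_j \ge T_j \end{cases}$
  ($T_j$ is the threshold for unit $j$)
[Figure: unit 1 receiving inputs from units 2 to 6 over edges $w_{12}, \dots, w_{16}$; step plot of $o_j$ against $net_j$, jumping from 0 to 1 at $T_j$.]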

Perceptron: Artificial Neuron Model
• Model the network as a graph with cells as nodes and synaptic connections as weighted edges from node $i$ to node $j$, with weight $w_{ji}$.
• The input received by a neuron is calculated by summing the weighted input values from its input links: $\sum_{i=0}^{n} w_i x_i$
• The sum is passed through a threshold function to produce the output.
• Vector notation: $\vec{w} \cdot \vec{x}$

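Different Threshold Functions
• Output in $\{+1, -1\}$, thresholded at zero: $o(\vec{x}) = 1$ if $\vec{w} \cdot \vec{x} > 0$ and $-1$ otherwise. Variants differ in whether the boundary case $\vec{w} \cdot \vec{x} = 0$ maps to $+1$ or $-1$.
• Output in $\{1, 0\}$, with an explicit threshold $t$: $o(\vec{x}) = 1$ if $\vec{w} \cdot \vec{x} > t$ and $0$ otherwise.
• We should learn the weights $w_1, \dots, w_n$.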

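Examples (step activation function)

| In1 | In2 | Out (AND) | Out (OR) |
|-----|-----|-----------|----------|
| 0   | 0   | 0         | 0        |
| 0   | 1   | 0         | 1        |
| 1   | 0   | 0         | 1        |
| 1   | 1   | 1         | 1        |

| In | Out (NOT) |
|----|-----------|
| 0  | 1         |
| 1  | 0         |

The net input is $\sum_{i=0}^{n} w_i x_i$ with $w_0 = -t$, taking $x_0 = 1$.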
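The identity $w_0 = -t$ says that an explicit threshold can be folded into an extra weight on a constant input. The sketch below checks that equivalence on all two-bit inputs. The weights `[2, 3]`, the threshold `4`, the strict `>` comparison, and the function names are assumptions made for the example:

```python
from itertools import product


def fires_with_threshold(weights, x, t):
    """Compare the weighted sum over inputs 1..n against an explicit threshold t."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) > t else 0


def fires_with_bias(weights, x, t):
    """Same unit, with the threshold folded in as w_0 = -t on a constant input x_0 = 1."""
    aug_w = [-t] + list(weights)
    aug_x = [1] + list(x)
    return 1 if sum(w * xi for w, xi in zip(aug_w, aug_x)) > 0 else 0


weights, t = [2, 3], 4  # integer values keep the arithmetic exact
agree = all(
    fires_with_threshold(weights, x, t) == fires_with_bias(weights, x, t)
    for x in product([0, 1], repeat=2)
)
print(agree)
```

Folding the threshold into the weight vector means a learning rule only has to update weights, with no separate case for the threshold.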

Neural Computation
• McCulloch and Pitts (1943) showed how such model neurons could compute logical functions and be used to construct finite-state machines.
• Can be used to simulate logic gates:
  – AND: Let all $w_{ji}$ be $T_j / n$, where $n$ is the number of inputs.
  – OR: Let all $w_{ji}$ be $T_j$.
  – NOT: Let the threshold be 0, with a single input with a negative weight.
• Can build arbitrary logic circuits, sequential machines, and computers with such gates.
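The three gate constructions above can be checked directly. This is a minimal sketch assuming a unit that outputs 1 when its net input is at least its threshold, a positive threshold of 1, and a weight of -1 for NOT. The function names are hypothetical, and `Fraction` is used only so that $n \cdot (T/n)$ equals $T$ exactly:

```python
from fractions import Fraction


def unit(inputs, weights, threshold):
    """Threshold unit: output 1 if the weighted sum reaches the threshold, else 0."""
    net = sum(w * o for w, o in zip(weights, inputs))
    return 1 if net >= threshold else 0


def and_gate(*inputs, threshold=Fraction(1)):
    """AND: every weight is T/n, so only all-ones inputs reach T (assumes T > 0)."""
    n = len(inputs)
    return unit(inputs, [threshold / n] * n, threshold)


def or_gate(*inputs, threshold=Fraction(1)):
    """OR: every weight is T, so a single active input already reaches T."""
    return unit(inputs, [threshold] * len(inputs), threshold)


def not_gate(x):
    """NOT: threshold 0 and one negative weight (-1 is an arbitrary choice)."""
    return unit([x], [-1], 0)


pairs = [(a, b) for a in (0, 1) for b in (0, 1)]
print([and_gate(a, b) for a, b in pairs])
print([or_gate(a, b) for a, b in pairs])
print([not_gate(0), not_gate(1)])
```

With ordinary floats the AND construction can fail by rounding (ten weights of 0.1 sum to slightly less than 1), which is why exact fractions are used here.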

Perceptron Training
• Assume supervised training examples giving the desired output for a unit given a set of known input activations.
• Goal: learn the weight vector (synaptic weights) that causes the perceptron to produce the correct +/- 1 values.
• The perceptron uses an iterative update algorithm to learn a correct set of weights:
  – Perceptron training rule
  – Delta rule
• Both algorithms are guaranteed to converge to somewhat different acceptable hypotheses, under somewhat different conditions.

Perceptron Training Rule
• Update weights by:
  $w_i \leftarrow w_i + \Delta w_i$
  $\Delta w_i = \eta\,(t - o)\,x_i$
• where $\eta$ is the learning rate
  – a small value (e.g., 0.1)
  – sometimes made to decay as the number of weight-tuning iterations increases
• $t$ – target output for the current training example
• $o$ – output of the unit for the current training example
• $x_i$ – the $i$-th input of the current training example
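A single application of the rule $w_i \leftarrow w_i + \eta (t - o) x_i$ might look like the sketch below. The function name and the example values are illustrative assumptions, with targets and outputs taken to be +1 or -1:

```python
def perceptron_update(weights, x, target, output, eta=0.1):
    """Return new weights after one step of w_i <- w_i + eta * (t - o) * x_i."""
    return [w + eta * (target - output) * xi for w, xi in zip(weights, x)]


# Output was -1 but the target is +1: weights on active inputs are raised.
raised = perceptron_update([0.0, 0.0, 0.0], [1, 1, 0], target=1, output=-1)

# Output already matches the target: (t - o) is 0, so nothing changes.
unchanged = perceptron_update([0.3, -0.2], [1, 1], target=1, output=1)

print(raised, unchanged)
```

The third weight in the first call stays at zero because its input is inactive ($x_i = 0$), so only weights on active inputs move.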

Perceptron Training Rule
• Equivalent to the rules:
  – If the output is correct, do nothing.
  – If the output is high, lower the weights on active inputs.
  – If the output is low, increase the weights on active inputs.
• Can prove it will converge
  – if the training data is linearly separable
  – and $\eta$ is sufficiently small

Perceptron Learning Algorithm
• Iteratively update weights until convergence.

    Initialize weights to random values
    Until outputs of all training examples are correct
        For each training pair, E, do:
            Compute current output o_j for E given its inputs
            Compare current output to target value, t_j, for E
            Update synaptic weights and threshold using learning rule

• Each execution of the outer loop is typically called an epoch.
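The loop above can be sketched in Python as follows. Several details are assumptions made for the example rather than part of the slide: the threshold is folded into the weights through a constant first input of 1, outputs are +1 or -1 with a strict `> 0` test, the data set is the AND function, and `max_epochs` is a safety cap in case the data are not separable.

```python
import random


def predict(weights, x):
    """Thresholded output: +1 if the weighted sum is positive, else -1."""
    net = sum(w * xi for w, xi in zip(weights, x))
    return 1 if net > 0 else -1


def train_perceptron(examples, eta=0.1, max_epochs=1000, seed=0):
    """Apply the perceptron training rule until an epoch has no errors."""
    rng = random.Random(seed)
    n = len(examples[0][0])
    weights = [rng.uniform(-0.05, 0.05) for _ in range(n)]  # small random start
    for epoch in range(1, max_epochs + 1):
        errors = 0
        for x, t in examples:
            o = predict(weights, x)
            if o != t:
                errors += 1
                weights = [w + eta * (t - o) * xi for w, xi in zip(weights, x)]
        if errors == 0:
            return weights, epoch  # every training example is classified correctly
    return weights, None  # cap reached without convergence


# AND with targets in {-1, +1}; the leading 1 in each input is the constant x_0.
and_examples = [([1, 0, 0], -1), ([1, 0, 1], -1), ([1, 1, 0], -1), ([1, 1, 1], 1)]
weights, epochs = train_perceptron(and_examples)
print(weights, epochs)
```

Because AND is linearly separable, the loop is expected to stop well before the cap. On non-separable data it would return `None` for the epoch count.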

Delta Rule
• Works reasonably with data that is not linearly separable
• Minimizes error
• Gradient descent method
  – basis of the backpropagation method
  – basis for methods working in multidimensional continuous spaces
  – Discussion of this rule is beyond the scope of this course

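Perceptron as a Linear Separator
• Since the perceptron uses a linear threshold function, it searches for a linear separator that discriminates the classes. For unit 1 with inputs $o_2$ and $o_3$, the boundary is the line
  $w_{12}\, o_2 + w_{13}\, o_3 = T_1$, that is, $o_3 = -\frac{w_{12}}{w_{13}}\, o_2 + \frac{T_1}{w_{13}}$ (for $w_{13} \neq 0$),
  or a hyperplane in $n$-dimensional space.
[Figure: separating line in the $(o_2, o_3)$ plane.]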

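Concept Perceptron Cannot Learn
• Cannot learn exclusive-or (XOR), or the parity function in general.
[Figure: XOR in the $(o_2, o_3)$ plane. Positive examples (+) at (0, 1) and (1, 0), negative examples (–) at (0, 0) and (1, 1); no single line separates them.]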
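A short argument shows why no single threshold unit can compute XOR. It assumes a unit with inputs $o_2, o_3$, weights $w_{12}, w_{13}$, and threshold $T_1$ that outputs 1 exactly when its net input is at least $T_1$:

```latex
\begin{align*}
(0,0) \mapsto 0 &: \quad 0 < T_1 \\
(1,0) \mapsto 1 &: \quad w_{12} \ge T_1 \\
(0,1) \mapsto 1 &: \quad w_{13} \ge T_1 \\
(1,1) \mapsto 0 &: \quad w_{12} + w_{13} < T_1
\end{align*}
% Adding the second and third conditions gives
% w_{12} + w_{13} \ge 2 T_1 > T_1 \quad (\text{since } T_1 > 0),
% which contradicts the fourth condition.
\[
w_{12} + w_{13} \ge 2T_1 > T_1 \quad \text{contradicts} \quad w_{12} + w_{13} < T_1 .
\]
```

No choice of weights and threshold satisfies all four conditions, so XOR is not linearly separable.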

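General Structure of an ANN
[Figure: inputs $x_1, \dots, x_5$ feed an input layer, followed by a hidden layer and an output layer producing $y$. Inset: neuron $i$ receives inputs $I_1, I_2, I_3$ through weights $w_{i1}, w_{i2}, w_{i3}$, sums them into $S_i$, and applies an activation function $g(S_i)$ with threshold $t$ to produce output $O_i$.]
• Perceptrons have no hidden layers
• Multilayer perceptrons may have many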

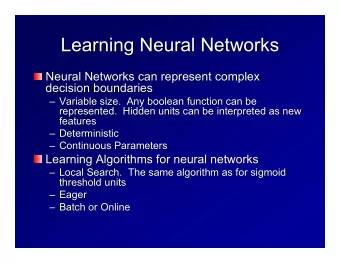

Learning Power of an ANN
• A perceptron is guaranteed to converge if the data is linearly separable
  – It will learn a hyperplane that separates the classes
  – The XOR function on page 250 of TSK is not linearly separable
• A multilayer ANN has no such guarantee of convergence but can learn functions that are not linearly separable
• An ANN with a hidden layer can learn the XOR function by constructing two hyperplanes (see page 253)
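To make the "two hyperplanes" idea concrete, here is a sketch of a network with one hidden layer that computes XOR. The weights and thresholds are hand-picked for illustration, not learned and not taken from the textbook. One hidden unit implements the line $x_1 + x_2 = 0.5$ and the other implements $x_1 + x_2 = 1.5$:

```python
def step_unit(inputs, weights, threshold):
    """Threshold unit: output 1 if the weighted sum reaches the threshold, else 0."""
    net = sum(w * x for w, x in zip(weights, inputs))
    return 1 if net >= threshold else 0


def xor_network(x1, x2):
    """Two hidden units (two hyperplanes) feeding one output unit."""
    h_or = step_unit([x1, x2], [1, 1], 0.5)    # fires above the line x1 + x2 = 0.5
    h_and = step_unit([x1, x2], [1, 1], 1.5)   # fires above the line x1 + x2 = 1.5
    # Output fires for points between the two lines: h_or on, h_and off.
    return step_unit([h_or, h_and], [1, -1], 0.5)


truth_table = {(a, b): xor_network(a, b) for a in (0, 1) for b in (0, 1)}
print(truth_table)
```

The output unit is itself a linear separator, but it works on the hidden units' outputs, where the XOR classes have become separable.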

Multilayer Network Example
The decision surface is highly nonlinear.

Sigmoid Threshold Unit
• A sigmoid unit is a unit whose output is a nonlinear function of its inputs, but whose output is also a differentiable function of its inputs.
• We can derive gradient descent rules to train:
  – a sigmoid unit
  – multilayer networks of sigmoid units (backpropagation)
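The slide does not give a formula, so the sketch below assumes the usual logistic function $\sigma(net) = 1 / (1 + e^{-net})$, whose derivative is $\sigma(net)\,(1 - \sigma(net))$. The function names are hypothetical:

```python
import math


def sigmoid(net):
    """Logistic function: a smooth, differentiable replacement for the hard threshold."""
    return 1.0 / (1.0 + math.exp(-net))


def sigmoid_derivative(net):
    """Derivative of the logistic function, written in terms of its own output."""
    s = sigmoid(net)
    return s * (1.0 - s)


def sigmoid_unit(weights, x):
    """Weighted sum of the inputs passed through the sigmoid."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)))


print(sigmoid(0.0), sigmoid_derivative(0.0))
print(sigmoid_unit([0.5, -0.5], [1, 1]))
```

Because the derivative exists everywhere and is cheap to compute from the output, gradient descent can be applied to such units, which a hard step function does not allow.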
