Sampling Techniques for Probabilistic and Deterministic Graphical Models

Sampling Techniques for Probabilistic and Deterministic Graphical Models. ICS 276, Spring 2018. Bozhena Bidyuk, Rina Dechter. Reading: Darwiche chapter 15, related papers. slides11b 828X 2019


Algorithms for Reasoning with graphical models. Slides Set 11 (part b): Sampling Techniques for Probabilistic and Deterministic Graphical Models. Rina Dechter. (Reading: Darwiche chapter 15, cutset-sampling paper posted.)

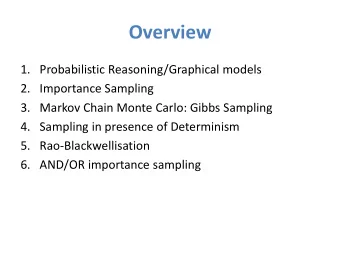

Overview
1. Probabilistic Reasoning/Graphical models
2. Importance Sampling
3. Markov Chain Monte Carlo: Gibbs Sampling
4. Sampling in presence of Determinism
5. Rao-Blackwellisation
6. AND/OR importance sampling

Markov Chain
(Figure: chain $x^1 \to x^2 \to x^3 \to x^4$.)
• A Markov chain is a discrete random process with the property that the next state depends only on the current state (Markov property): $P(x^t \mid x^1, x^2, \ldots, x^{t-1}) = P(x^t \mid x^{t-1})$
• If $P(X^t \mid x^{t-1})$ does not depend on $t$ (time homogeneous) and the state space is finite, then it is often expressed as a transition function (aka transition matrix) whose rows sum to one: $\sum_{x} P(X = x \mid x^{t-1}) = 1$

Example: Drunkard's Walk
• A random walk on the number line where, at each step, the position may change by +1 or −1 with equal probability: $D(X) = \{0, 1, 2, \ldots\}$, $P(n+1 \mid n) = 0.5$, $P(n-1 \mid n) = 0.5$
(Figure: transition diagram over states 1, 2, 3 with every edge labeled 0.5, i.e., the transition matrix P(X).)
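A minimal Python sketch of the drunkard's walk, assuming the walk lives on all integers. The slide lists $D(X) = \{0, 1, 2, \ldots\}$, so a faithful version would need a boundary rule at 0, which the slide does not specify. `drunkards_walk` is a hypothetical helper name.

```python
import random

def drunkard_step_rng(seed):
    return random.Random(seed)

def drunkards_walk(n_steps, start=0, seed=None):
    """Random walk on the integers (assumed unbounded): each step is +1 or -1 with prob. 0.5."""
    rng = drunkard_step_rng(seed)
    path = [start]
    for _ in range(n_steps):
        # The next position depends only on the current one (Markov property).
        path.append(path[-1] + rng.choice((-1, 1)))
    return path

path = drunkards_walk(1000, seed=0)
```

Each entry of `path` differs from its predecessor by exactly one unit, and nothing earlier than the current position influences the next step.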

Example: Weather Model
(Figure: sample sequence rain, rain, rain, sun, rain.)
• $D(X) = \{\text{rainy}, \text{sunny}\}$
• Transition matrix P(X):

| current state | P(rainy) | P(sunny) |
|---|---|---|
| rainy | 0.9 | 0.1 |
| sunny | 0.5 | 0.5 |
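The weather chain can be simulated directly from its transition matrix. A minimal Python sketch; the helper `simulate` and the 100,000-step run length are our own choices, not from the slides. The long-run fraction of rainy days should settle near 5/6, which is the solution of $\pi = \pi P$ for this matrix.

```python
import random

STATES = ("rainy", "sunny")
# Transition matrix from the slide: P[current][next].
P = {
    "rainy": {"rainy": 0.9, "sunny": 0.1},
    "sunny": {"rainy": 0.5, "sunny": 0.5},
}

def simulate(P, start, n_steps, seed=None):
    """Sample a trajectory of the chain, one transition at a time."""
    rng = random.Random(seed)
    state, path = start, [start]
    for _ in range(n_steps):
        weights = [P[state][s] for s in STATES]
        state = rng.choices(STATES, weights=weights)[0]
        path.append(state)
    return path

path = simulate(P, "sunny", 100_000, seed=1)
frac_rainy = path.count("rainy") / len(path)
```

Starting from "sunny" instead of "rainy" makes no visible difference at this run length, which previews the convergence result stated later for irreducible, aperiodic chains.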

Multi-Variable System
• $X = \{X_1, X_2, X_3\}$, $D(X_i)$ discrete, finite
• A state is an assignment of values to all the variables: $x^t = \{x_1^t, x_2^t, \ldots, x_n^t\}$
(Figure: each $x_i^t$ transitions to $x_i^{t+1}$, for $i = 1, 2, 3$.)

Bayesian Network System
• A Bayesian network is a representation of the joint probability distribution over two or more variables: $X = \{X_1, X_2, X_3\}$, $x^t = \{x_1^t, x_2^t, x_3^t\}$
(Figure: a Bayesian network over $X_1, X_2, X_3$ in state $x^t$ transitions to state $x^{t+1}$.)

Stationary Distribution Existence
• If the Markov chain is time-homogeneous, then the vector $\pi(X)$ is a stationary distribution (aka invariant or equilibrium distribution, aka "fixed point") if its entries sum to 1 and satisfy: $\pi(x_i) = \sum_{x_j \in D(X)} \pi(x_j) P(x_i \mid x_j)$
• A finite-state Markov chain has a unique stationary distribution if and only if:
– the chain is irreducible
– all of its states are positive recurrent
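For a small chain, the stationary condition can be solved as a linear system by stacking the balance equations $\pi = \pi P$ with the constraint that the entries sum to 1. A sketch with NumPy; `stationary_distribution` is a hypothetical helper, and the matrix is assumed to be indexed `P[j, i] = P(x_i | x_j)`, matching the slide's equation. A least-squares solve is used so the one redundant balance equation causes no trouble.

```python
import numpy as np

def stationary_distribution(P):
    """Solve pi = pi P with sum(pi) = 1 for a finite, time-homogeneous chain."""
    P = np.asarray(P, dtype=float)
    n = P.shape[0]
    # (P^T - I) pi = 0 encodes pi(x_i) = sum_j pi(x_j) P(x_i | x_j);
    # the extra row of ones encodes the normalization sum_i pi(x_i) = 1.
    A = np.vstack([P.T - np.eye(n), np.ones(n)])
    b = np.zeros(n + 1)
    b[-1] = 1.0
    pi, *_ = np.linalg.lstsq(A, b, rcond=None)
    return pi

weather = [[0.9, 0.1],
           [0.5, 0.5]]
pi = stationary_distribution(weather)  # approximately [5/6, 1/6]
```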

Irreducible
• A state x is irreducible if, under the transition rule, one has nonzero probability of moving from x to any other state and then coming back in a finite number of steps
• If one state is irreducible, then all the states must be irreducible (Liu, Ch. 12, p. 249, Def. 12.1.1)
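Irreducibility of a finite chain depends only on which transitions have nonzero probability, so it can be checked as a graph-reachability question. A minimal sketch, assuming a list-of-rows transition matrix; `reachable` and `is_irreducible` are hypothetical helper names.

```python
from collections import deque

def reachable(P, start):
    """States reachable from `start` through transitions with nonzero probability (BFS)."""
    seen, queue = {start}, deque([start])
    while queue:
        i = queue.popleft()
        for j, p in enumerate(P[i]):
            if p > 0 and j not in seen:
                seen.add(j)
                queue.append(j)
    return seen

def is_irreducible(P):
    # Every state must reach every state; going there and back then takes finitely many steps.
    n = len(P)
    return all(len(reachable(P, i)) == n for i in range(n))
```

For example, the weather chain is irreducible, while `[[1.0, 0.0], [0.5, 0.5]]` is not, because state 0 is absorbing and state 1 can never be revisited from it.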

Recurrent
• A state x is recurrent if the chain returns to x with probability 1
• Let M(x) be the expected number of steps to return to state x
• State x is positive recurrent if M(x) is finite
The recurrent states in a finite-state chain are positive recurrent.
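The expected return time M(x) can be computed exactly for a small chain by first-step analysis: let h(y) be the expected number of steps to hit x from y ≠ x, solve $h = \mathbf{1} + Q h$ where Q is P restricted to the other states, then add the first step out of x. A sketch with NumPy; `mean_return_time` is a hypothetical helper, and it assumes x is reachable from every other state (otherwise the linear system is singular).

```python
import numpy as np

def mean_return_time(P, x):
    """Expected number of steps M(x) to return to state x (first-step analysis)."""
    P = np.asarray(P, dtype=float)
    others = [s for s in range(P.shape[0]) if s != x]
    # h[y] = expected steps to reach x from y != x, satisfying h = 1 + Q h.
    Q = P[np.ix_(others, others)]
    h = np.linalg.solve(np.eye(len(others)) - Q, np.ones(len(others)))
    # One step out of x, then (if not already back at x) the hitting time from there.
    return 1.0 + P[x, others] @ h

weather = [[0.9, 0.1],
           [0.5, 0.5]]
```

For the weather chain this gives M(rainy) = 1.2 and M(sunny) = 6, both finite as expected for a finite irreducible chain; they also equal 1/π(x) for π = (5/6, 1/6).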

Stationary Distribution Convergence
• Consider an infinite Markov chain: $P^{(n)} = P(x^n \mid x^0) = P^0 P^n$, where $P^0$ is the initial distribution
• If the chain is both irreducible and aperiodic, then: $\pi = \lim_{n \to \infty} P^{(n)}$
• The initial state is not important in the limit
"The most useful feature of a “good” Markov chain is its fast forgetfulness of its past…" (Liu, Ch. 12.1)
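The limit $P^0 P^n \to \pi$ can be checked numerically by starting the weather chain from two opposite initial distributions and raising the transition matrix to a moderate power. A sketch with NumPy; the choice n = 50 and the helper name `n_step_distribution` are illustrative assumptions.

```python
import numpy as np

P = np.array([[0.9, 0.1],
              [0.5, 0.5]])

def n_step_distribution(p0, P, n):
    """Distribution of x^n given the initial distribution p0: p0 @ P^n."""
    return np.asarray(p0, dtype=float) @ np.linalg.matrix_power(P, n)

# Two very different starting points...
from_rainy = n_step_distribution([1.0, 0.0], P, 50)
from_sunny = n_step_distribution([0.0, 1.0], P, 50)
# ...end up at (numerically) the same distribution, approximately [5/6, 1/6].
```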

Aperiodic
• Define $d(i) = \gcd\{n > 0 \mid \text{it is possible to go from } i \text{ to } i \text{ in } n \text{ steps}\}$, where gcd means the greatest common divisor of the integers in the set. If $d(i) = 1$ for all $i$, then the chain is aperiodic
• Positive recurrent, aperiodic states are ergodic
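The period d(i) can be computed from the 0/1 transition graph by recording the step counts n at which state i can return to itself and taking their gcd. A sketch with NumPy; `period` and `is_aperiodic` are hypothetical helpers, and the horizon `max_steps` (default 3 × number of states) is our own choice to keep the loop finite. Boolean reachability is used instead of `P**n` so tiny probabilities cannot underflow to zero.

```python
from functools import reduce
from math import gcd

import numpy as np

def period(P, i, max_steps=None):
    """d(i) = gcd of the step counts n at which state i can return to itself."""
    A = (np.asarray(P, dtype=float) > 0).astype(int)  # 0/1 transition graph
    n_states = A.shape[0]
    if max_steps is None:
        max_steps = 3 * n_states  # assumed finite horizon
    reach = np.eye(n_states, dtype=int)  # reach[j, k] = 1 if k is reachable from j in n steps
    lengths = []
    for n in range(1, max_steps + 1):
        reach = ((reach @ A) > 0).astype(int)
        if reach[i, i]:
            lengths.append(n)
    return reduce(gcd, lengths, 0)  # 0 means no return was observed

def is_aperiodic(P):
    return all(period(P, i) == 1 for i in range(len(P)))
```

The weather chain has self-loops, so every d(i) is 1; the deterministic "flip" chain `[[0, 1], [1, 0]]` returns only after an even number of steps and has period 2.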

Markov Chain Monte Carlo
• How do we estimate P(X), e.g., P(X|e)?
• Generate samples that form a Markov chain with stationary distribution $\pi = P(X \mid e)$
• Estimate π from samples (observed states): visited states $x^0, \ldots, x^n$ can be viewed as "samples" from distribution π:
$\bar{\pi}_T(x) = \frac{1}{T} \sum_{t=1}^{T} \delta(x, x^t), \qquad \pi(x) = \lim_{T \to \infty} \bar{\pi}_T(x)$

MCMC Summary
• Convergence is guaranteed in the limit
• The initial state is not important, but typically we throw away the first K samples ("burn-in")
• Samples are dependent, not i.i.d.
• Convergence (mixing rate) may be slow
• The stronger the correlation between states, the slower the convergence!
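The dependence between successive samples can be made concrete by comparing the lag-1 autocorrelation of two 2-state chains after discarding a burn-in. A minimal sketch; the "sticky" chain, the burn-in K = 1,000 and the helper names `run_chain` and `lag1_autocorrelation` are illustrative assumptions. For a 2-state chain the lag-1 autocorrelation equals $1 - P(0 \to 1) - P(1 \to 0)$, i.e. 0.4 for the weather chain and 0.98 for the sticky one.

```python
import random

def run_chain(P, start, n_steps, rng):
    """Simulate a 2-state chain; P[i][j] = P(next = j | current = i)."""
    x, path = start, []
    for _ in range(n_steps):
        x = 0 if rng.random() < P[x][0] else 1
        path.append(x)
    return path

def lag1_autocorrelation(xs):
    """Sample correlation between x^t and x^{t+1}."""
    n = len(xs)
    mean = sum(xs) / n
    var = sum((v - mean) ** 2 for v in xs) / n
    cov = sum((xs[t] - mean) * (xs[t + 1] - mean) for t in range(n - 1)) / (n - 1)
    return cov / var

rng = random.Random(0)
burn_in = 1_000  # hypothetical K: discard the first K states
mild = run_chain([[0.9, 0.1], [0.5, 0.5]], 0, 50_000, rng)[burn_in:]
sticky = run_chain([[0.99, 0.01], [0.01, 0.99]], 0, 50_000, rng)[burn_in:]
```

The sticky chain's samples are far more correlated, so each one carries less new information and averages over it converge more slowly.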

Gibbs Sampling (Geman & Geman, 1984)
• The Gibbs sampler is an algorithm to generate a sequence of samples from the joint probability distribution of two or more random variables
• Sample a new value one variable at a time from the variable's conditional distribution: $P(X_i) = P(X_i \mid x_1^t, \ldots, x_{i-1}^t, x_{i+1}^t, \ldots, x_n^t) = P(X_i \mid x^t \setminus x_i)$
• Samples form a Markov chain with stationary distribution P(X|e)
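A minimal Gibbs sampler for two binary variables A and B, resampling each from its full conditional in turn. The joint table `JOINT` and the helper names are hypothetical, chosen so that all probabilities are positive; under it P(A = 1) = 0.5 and P(A = 1, B = 1) = 0.3, which the sample frequencies should approach.

```python
import random

# Hypothetical joint distribution P(A, B) over two binary variables.
JOINT = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.3}

def conditional(var, other_value):
    """P(var = 1 | other variable = other_value), computed from JOINT."""
    if var == "A":
        p1, p0 = JOINT[(1, other_value)], JOINT[(0, other_value)]
    else:
        p1, p0 = JOINT[(other_value, 1)], JOINT[(other_value, 0)]
    return p1 / (p0 + p1)

def gibbs(n_samples, seed=None):
    rng = random.Random(seed)
    a, b = 0, 0  # arbitrary initial state
    samples = []
    for _ in range(n_samples):
        # Resample one variable at a time from its conditional given the other.
        a = int(rng.random() < conditional("A", b))
        b = int(rng.random() < conditional("B", a))
        samples.append((a, b))
    return samples

samples = gibbs(100_000, seed=0)
p_a1 = sum(a for a, _ in samples) / len(samples)
```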

Gibbs Sampling: Illustration
The process of Gibbs sampling can be understood as a random walk in the space of all instantiations of X = x (remember the drunkard's walk): in one step we can reach instantiations that differ from the current one in the value assignment of at most one variable (assuming a randomized choice of variables $X_i$).

Ordered Gibbs Sampler
Generate sample $x^{t+1}$ from $x^t$, processing all variables in some order:
• $x_1^{t+1} \leftarrow P(X_1 \mid x_2^t, x_3^t, \ldots, x_N^t, e)$
• $x_2^{t+1} \leftarrow P(X_2 \mid x_1^{t+1}, x_3^t, \ldots, x_N^t, e)$
• ...
• $x_N^{t+1} \leftarrow P(X_N \mid x_1^{t+1}, x_2^{t+1}, \ldots, x_{N-1}^{t+1}, e)$
In short, for i = 1 to N: $x_i^{t+1}$ is sampled from $P(X_i \mid x^t \setminus x_i, e)$

Transition Probabilities in BN
• Given its Markov blanket (parents, children, and their parents), $X_i$ is independent of all other nodes
• Markov blanket of $X_i$: $markov_i = pa_i \cup ch_i \cup \left( \bigcup_{X_j \in ch_i} pa_j \right)$
• $P(X_i \mid x^t \setminus x_i) = P(X_i \mid markov_i^t)$, where
$P(x_i \mid x^t \setminus x_i) \propto P(x_i \mid pa_i) \prod_{X_j \in ch_i} P(x_j \mid pa_j)$
• Computation is linear in the size of the Markov blanket!
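The Markov-blanket formula can be checked against brute-force conditioning on the full joint. A sketch on a hypothetical binary network A → C ← B, C → D (not the slide's example); `PARENTS`, `CPT` and the helper functions are our own names. Only the CPTs of the variable and of its children enter `markov_blanket_conditional`, because every other factor of the joint cancels in the normalization.

```python
import math

# Hypothetical binary network A -> C <- B, C -> D.
# CPT[v][parent values] = P(v = 1 | parents), parents ordered as in PARENTS[v].
PARENTS = {"A": (), "B": (), "C": ("A", "B"), "D": ("C",)}
CPT = {
    "A": {(): 0.3},
    "B": {(): 0.6},
    "C": {(0, 0): 0.1, (0, 1): 0.5, (1, 0): 0.7, (1, 1): 0.9},
    "D": {(0,): 0.2, (1,): 0.8},
}
CHILDREN = {v: [c for c, ps in PARENTS.items() if v in ps] for v in PARENTS}

def local_prob(var, x):
    """P(x[var] | x[parents of var]) for a full assignment x (a dict)."""
    p1 = CPT[var][tuple(x[p] for p in PARENTS[var])]
    return p1 if x[var] == 1 else 1.0 - p1

def markov_blanket_conditional(var, x):
    """P(var = 1 | all other variables) from var's CPT and its children's CPTs only."""
    scores = []
    for value in (0, 1):
        y = dict(x, **{var: value})
        s = local_prob(var, y)
        for child in CHILDREN[var]:
            s *= local_prob(child, y)
        scores.append(s)
    return scores[1] / (scores[0] + scores[1])

def joint(x):
    """Full joint P(x) as the product of all CPT entries."""
    return math.prod(local_prob(v, x) for v in PARENTS)

def brute_force_conditional(var, x):
    """Same conditional computed from the full joint, for checking."""
    p = [joint(dict(x, **{var: value})) for value in (0, 1)]
    return p[1] / (p[0] + p[1])
```

For B, for instance, the blanket is {A, C}: D is ignored even though it is part of the assignment.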

Ordered Gibbs Sampling Algorithm (Pearl, 1988)
Input: X, E = e
Output: T samples $\{x^t\}$
Fix evidence E = e; initialize $x^0$ at random
1. For t = 1 to T (compute samples)
2. For i = 1 to N (loop through variables)
3. Sample $x_i^{t+1}$ from $P(X_i \mid markov_i^t)$
4. End For
5. End For
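A sketch of the ordered Gibbs loop on a hypothetical three-node network R (rain) → W (wet grass) ← S (sprinkler) with evidence W = 1; the CPT numbers and helper names are invented for illustration. Each variable is resampled from its Markov-blanket conditional using the most recent value of the other, and the estimate of P(R = 1 | W = 1) is compared with exact enumeration (about 0.401 for these numbers).

```python
import random

# Hypothetical network R -> W <- S; all CPT values are illustrative.
P_R, P_S = 0.2, 0.4                                            # P(R = 1), P(S = 1)
P_W = {(0, 0): 0.05, (0, 1): 0.8, (1, 0): 0.9, (1, 1): 0.99}  # P(W = 1 | R, S)

def p_w(w, r, s):
    return P_W[(r, s)] if w == 1 else 1.0 - P_W[(r, s)]

def ordered_gibbs(T, w=1, seed=None):
    """Ordered Gibbs sampling with evidence W = w fixed; returns T samples (r, s)."""
    rng = random.Random(seed)
    r, s = rng.randint(0, 1), rng.randint(0, 1)  # random initial x^0
    samples = []
    for _ in range(T):  # for t = 1 to T
        # Markov blanket of R is {W, S}: P(r | s, w) ∝ P(r) P(w | r, s)
        a1, a0 = P_R * p_w(w, 1, s), (1 - P_R) * p_w(w, 0, s)
        r = int(rng.random() < a1 / (a0 + a1))
        # Markov blanket of S is {W, R}; uses the value of R just sampled
        b1, b0 = P_S * p_w(w, r, 1), (1 - P_S) * p_w(w, r, 0)
        s = int(rng.random() < b1 / (b0 + b1))
        samples.append((r, s))
    return samples

def exact_posterior_r(w=1):
    """P(R = 1 | W = w) by enumeration over S, for comparison."""
    num = sum(P_R * (P_S if s else 1 - P_S) * p_w(w, 1, s) for s in (0, 1))
    den = num + sum((1 - P_R) * (P_S if s else 1 - P_S) * p_w(w, 0, s) for s in (0, 1))
    return num / den

samples = ordered_gibbs(100_000, seed=0)
estimate = sum(r for r, _ in samples) / len(samples)
```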

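Gibbs Sampling Example - BN
• $X = \{X_1, X_2, \ldots, X_9\}$, $E = \{X_9\}$
• Initialize the non-evidence variables: $X_1 = x_1^0$, $X_2 = x_2^0$, $X_3 = x_3^0$, $X_4 = x_4^0$, $X_5 = x_5^0$, $X_6 = x_6^0$, $X_7 = x_7^0$, $X_8 = x_8^0$
(Figure: Bayesian network over $X_1, \ldots, X_9$ with evidence node $X_9$.)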

Gibbs Sampling Example - BN
• $X = \{X_1, X_2, \ldots, X_9\}$, $E = \{X_9\}$
• $x_1^1 \leftarrow P(X_1 \mid x_2^0, \ldots, x_8^0, x_9)$
• $x_2^1 \leftarrow P(X_2 \mid x_1^1, x_3^0, \ldots, x_8^0, x_9)$
(Figure: the same network over $X_1, \ldots, X_9$.)

Answering Queries: $P(x_i \mid e) = ?$
• Method 1: count the number of samples where $X_i = x_i$ (histogram estimator, using the Dirac delta function $\delta$): $\hat{P}(X_i = x_i) = \frac{1}{T} \sum_{t=1}^{T} \delta(x_i, x^t)$
• Method 2: average probability (mixture estimator): $\hat{P}(X_i = x_i) = \frac{1}{T} \sum_{t=1}^{T} P(X_i = x_i \mid markov_i^t)$
• The mixture estimator converges faster (consider estimates for the unobserved values of $X_i$; prove via the Rao-Blackwell theorem)
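Both estimators can be computed from the same Gibbs run and compared across repeated runs. A sketch on a hypothetical two-variable joint (`JOINT`, run length 500 and 200 repetitions are our own choices): Method 1 counts samples with A = 1, Method 2 averages the conditional probability $P(A = 1 \mid B)$ that each sample of A was drawn from. Across runs the mixture estimates should vary noticeably less.

```python
import random
import statistics

# Hypothetical joint P(A, B) over two binary variables; true P(A = 1) = 0.5.
JOINT = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.3}

def p_a1_given_b(b):
    return JOINT[(1, b)] / (JOINT[(0, b)] + JOINT[(1, b)])

def p_b1_given_a(a):
    return JOINT[(a, 1)] / (JOINT[(a, 0)] + JOINT[(a, 1)])

def estimate_p_a1(T, rng):
    """One ordered Gibbs run; returns (histogram, mixture) estimates of P(A = 1)."""
    a, b = 0, 0
    hist = mix = 0.0
    for _ in range(T):
        p_a = p_a1_given_b(b)  # P(A = 1 | Markov blanket of A), here just B
        a = int(rng.random() < p_a)
        b = int(rng.random() < p_b1_given_a(a))
        hist += a              # Method 1: count samples with A = 1
        mix += p_a             # Method 2: average the conditional probability
    return hist / T, mix / T

rng = random.Random(0)
runs = [estimate_p_a1(500, rng) for _ in range(200)]
var_hist = statistics.variance([h for h, _ in runs])
var_mix = statistics.variance([m for _, m in runs])
```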

Rao-Blackwell Theorem
Rao-Blackwell Theorem: Let the random variable set X be composed of two groups of variables, R and L. Then, for the joint distribution $\pi(R, L)$ and function g, the following result applies:
$\mathrm{Var}[E\{g(R) \mid L\}] \le \mathrm{Var}[g(R)]$
for a function of interest g, e.g., the mean or covariance (Casella & Robert, 1996; Liu et al., 1995).
• The theorem makes a weak promise, but works well in practice!
• The improvement depends on the choice of R and L
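The inequality can be verified exactly on a small discrete joint by computing both variances directly. A sketch with a hypothetical table `PI` over R ∈ {0, 1, 2}, L ∈ {0, 1} and g(R) = R; here Var[g(R)] = 0.69 while Var[E{g(R) | L}] = 0.04.

```python
# Hypothetical joint distribution pi(R, L) over R in {0, 1, 2}, L in {0, 1}.
PI = {(0, 0): 0.10, (1, 0): 0.25, (2, 0): 0.15,
      (0, 1): 0.30, (1, 1): 0.05, (2, 1): 0.15}

def g(r):
    return r  # function of interest: estimating the mean of R

def var(values_probs):
    """Variance of a discrete distribution given as (value, probability) pairs."""
    mean = sum(p * v for v, p in values_probs)
    return sum(p * (v - mean) ** 2 for v, p in values_probs)

# Var[g(R)] under the marginal of R
var_plain = var([(g(r), p) for (r, _), p in PI.items()])

# E[g(R) | L = l_val] and its variance under the marginal of L
p_l = {l_val: sum(p for (_, l2), p in PI.items() if l2 == l_val) for l_val in (0, 1)}
cond_mean = {l_val: sum(p * g(r) for (r, l2), p in PI.items() if l2 == l_val) / p_l[l_val]
             for l_val in (0, 1)}
var_rb = var([(cond_mean[l_val], p_l[l_val]) for l_val in (0, 1)])
```

The gap is exactly $E[\mathrm{Var}\{g(R) \mid L\}]$ by the law of total variance, which is why the size of the improvement depends on how R and L are chosen.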

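Importance vs. Gibbs
Gibbs:
• $x^t \sim \hat{P}(X \mid e)$, with $\hat{P}(X \mid e) \to P(X \mid e)$ as $T \to \infty$
• $\hat{g}(X) = \frac{1}{T} \sum_{t=1}^{T} g(x^t)$
Importance:
• $X^t \sim Q(X \mid e)$, with weights $w^t = \frac{P(x^t)}{Q(x^t)}$
• $\hat{g} = \frac{1}{T} \sum_{t=1}^{T} g(x^t) \frac{P(x^t)}{Q(x^t)}$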
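A minimal importance-sampling estimate matching the formula $\hat{g} = \frac{1}{T} \sum_t g(x^t) P(x^t) / Q(x^t)$. The target `P`, the uniform proposal `Q` and $g(x) = x^2$ are hypothetical, chosen so the exact answer is 0.2 + 1.2 + 3.6 = 5.0.

```python
import random

# Hypothetical target P and proposal Q over {0, 1, 2, 3}.
P = [0.1, 0.2, 0.3, 0.4]
Q = [0.25, 0.25, 0.25, 0.25]

def g(x):
    return x * x

def importance_estimate(T, seed=None):
    rng = random.Random(seed)
    total = 0.0
    for _ in range(T):
        x = rng.choices(range(len(Q)), weights=Q)[0]  # x^t ~ Q
        w = P[x] / Q[x]                                # w^t = P(x^t) / Q(x^t)
        total += g(x) * w
    return total / T

exact = sum(p * g(x) for x, p in enumerate(P))
estimate = importance_estimate(100_000, seed=0)
```

Unlike the Gibbs estimator, the samples here are independent draws from Q; the weights correct for having sampled from Q instead of P.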

Gibbs Sampling: Convergence
• Sample from $\hat{P}(X \mid e) \to P(X \mid e)$
• Converges iff the chain is irreducible and ergodic
• Intuition: the sampler must be able to explore all states:
– if $X_i$ and $X_j$ are strongly correlated, e.g., $X_i = 0 \Leftrightarrow X_j = 0$, then we cannot explore states with $X_i = 1$ and $X_j = 1$
• All conditions are satisfied when all probabilities are positive
• The convergence rate can be characterized by the second eigenvalue of the transition matrix
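The second eigenvalue can be computed directly for small transition matrices. A sketch with NumPy; `second_eigenvalue_modulus` is a hypothetical helper, and the "sticky" matrix is an illustrative strongly correlated chain. Roughly speaking, for such chains the distance to π shrinks like $|\lambda_2|^n$, so 0.4 (weather) mixes much faster than 0.98 (sticky).

```python
import numpy as np

def second_eigenvalue_modulus(P):
    """|lambda_2|: second-largest eigenvalue modulus of a transition matrix."""
    moduli = sorted(np.abs(np.linalg.eigvals(np.asarray(P, dtype=float))), reverse=True)
    return moduli[1]

weather = [[0.9, 0.1], [0.5, 0.5]]
sticky = [[0.99, 0.01], [0.01, 0.99]]
```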
