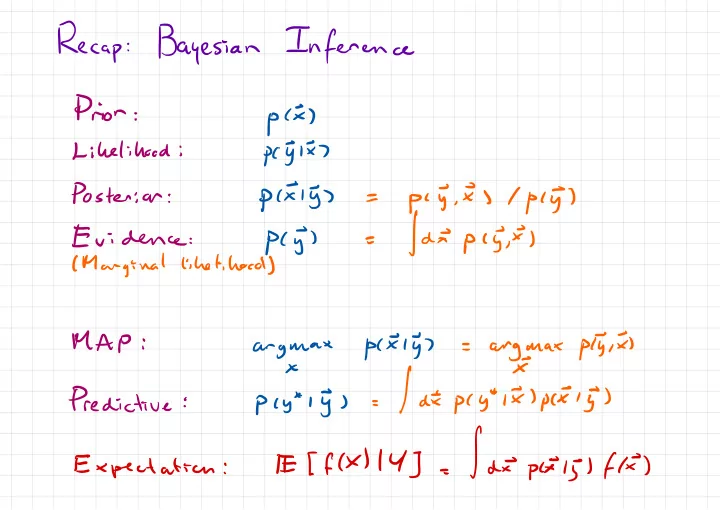

Recap: Bayesian Inference

Prior: $p(\mathbf{x})$

Likelihood: $p(\mathbf{y} \mid \mathbf{x})$
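As an illustrative instance (not part of the recap itself), consider a coin with unknown probability of heads $x \in [0, 1]$ and observations $\mathbf{y} = (y_1, \dots, y_N)$ with $y_i \in \{0, 1\}$, of which $k$ are heads. Assuming a Beta prior with hyperparameters $a, b > 0$ and i.i.d. Bernoulli flips, the two ingredients are:

$$
p(x) = \mathrm{Beta}(x; a, b) = \frac{x^{a-1}(1-x)^{b-1}}{B(a, b)}, \qquad
p(\mathbf{y} \mid x) = \prod_{i=1}^{N} x^{y_i}(1-x)^{1-y_i} = x^{k}(1-x)^{N-k},
$$

where $B(a, b)$ is the Beta function. The likelihood depends on the data only through the count $k$.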

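Posterior: $p(\mathbf{x} \mid \mathbf{y}) = \dfrac{p(\mathbf{y} \mid \mathbf{x})\, p(\mathbf{x})}{p(\mathbf{y})}$

Evidence (marginal likelihood): $p(\mathbf{y}) = \int d\mathbf{x}\, p(\mathbf{y} \mid \mathbf{x})\, p(\mathbf{x})$

MAP: $\hat{\mathbf{x}}_{\text{MAP}} = \arg\max_{\mathbf{x}} p(\mathbf{x} \mid \mathbf{y}) = \arg\max_{\mathbf{x}} p(\mathbf{y} \mid \mathbf{x})\, p(\mathbf{x})$

Predictive: $p(\mathbf{y}^* \mid \mathbf{y}) = \int d\mathbf{x}\, p(\mathbf{y}^* \mid \mathbf{x})\, p(\mathbf{x} \mid \mathbf{y})$

Expectation: $\mathbb{E}[f(\mathbf{x}) \mid \mathbf{y}] = \int d\mathbf{x}\, p(\mathbf{x} \mid \mathbf{y})\, f(\mathbf{x})$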
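To see these quantities computed side by side, here is a minimal Python sketch, assuming a Beta-Bernoulli coin model: prior $\mathrm{Beta}(a, b)$ on the heads probability $x$, and $k$ heads in $n$ i.i.d. flips. The integrals are approximated with a midpoint rule on a grid over $(0, 1)$, a choice made purely for illustration. The helper names `beta_fn` and `bayes_on_grid`, the hyperparameters $a = b = 2$, and the toy data ($k = 7$, $n = 10$) are hypothetical. Because this model is conjugate, each grid estimate can be checked against its known closed form.

```python
import math


def beta_fn(a: float, b: float) -> float:
    """Beta function B(a, b), computed via log-gamma for numerical stability."""
    return math.exp(math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b))


def bayes_on_grid(a: float, b: float, k: int, n: int, grid_size: int = 20_000):
    """Approximate evidence, MAP, predictive and a posterior expectation for a
    (hypothetical) Beta(a, b) prior with k heads in n Bernoulli flips."""
    h = 1.0 / grid_size
    xs = [(i + 0.5) * h for i in range(grid_size)]  # midpoints avoid x = 0 and x = 1

    prior = [x ** (a - 1) * (1 - x) ** (b - 1) / beta_fn(a, b) for x in xs]  # p(x)
    lik = [x ** k * (1 - x) ** (n - k) for x in xs]                           # p(y|x)

    # Evidence p(y) = ∫ dx p(y|x) p(x), via the midpoint rule
    evidence = sum(l * p for l, p in zip(lik, prior)) * h

    # Posterior p(x|y) = p(y|x) p(x) / p(y), evaluated at each grid point
    post = [l * p / evidence for l, p in zip(lik, prior)]

    # MAP: maximize the unnormalized posterior p(y|x) p(x) over the grid
    x_map = xs[max(range(grid_size), key=lambda i: lik[i] * prior[i])]

    # Predictive for one new flip: p(y*=1|y) = ∫ dx p(y*=1|x) p(x|y), with p(y*=1|x) = x
    pred_heads = sum(x * q for x, q in zip(xs, post)) * h

    # Posterior expectation E[f(x)|y] = ∫ dx p(x|y) f(x), here with f(x) = x^2
    e_x2 = sum(x * x * q for x, q in zip(xs, post)) * h

    return evidence, x_map, pred_heads, e_x2


# Usage with illustrative values (not taken from the notes)
a, b, k, n = 2.0, 2.0, 7, 10
evidence, x_map, pred_heads, e_x2 = bayes_on_grid(a, b, k, n)

# Closed-form Beta-Bernoulli results for comparison
print(evidence, beta_fn(a + k, b + n - k) / beta_fn(a, b))
print(x_map, (a + k - 1) / (a + b + n - 2))
print(pred_heads, (a + k) / (a + b + n))
print(e_x2, (a + k) * (a + k + 1) / ((a + b + n) * (a + b + n + 1)))
```

The MAP step never divides by the evidence, since $p(\mathbf{y})$ does not depend on $\mathbf{x}$; only the posterior, the predictive, and the expectation need the normalized density.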