Multi-view Active Learning Ion Muslea University of Southern - PowerPoint PPT Presentation

Multi-view Active Learning Ion Muslea University of Southern California Outline Multi-view active learning Robust multi-view learning View validation as meta-learning Related Work Contributions Future work

Multi-view Active Learning Ion Muslea University of Southern California

Outline • Multi-view active learning • Robust multi-view learning • View validation as meta-learning • Related Work • Contributions • Future work

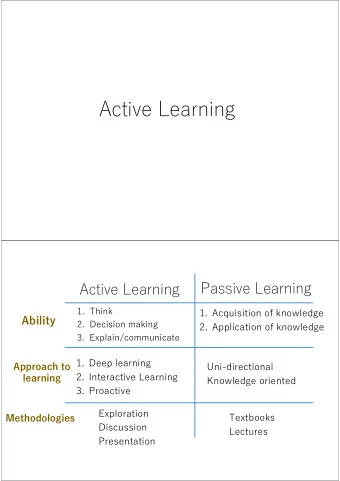

Background & Terminology • Inductive machine learning – algorithms that learn concepts from labeled examples • Active learning: minimize need for training data – detect & ask-user-to-label only most informative exs. • Multi-view learning ( MVL MVL ) – disjoint sets of features that are sufficient for learning • Speech recognition: sound vs. lip motion – previous multi-view learners are semi-supervised • exploit distribution of the unlabeled examples • boost accuracy by bootstrapping views from each other

Thesis of the Thesis Multi-view active learning maximizes the accuracy of the learned hypotheses while minimizing the amount of labeled training data.

Outline • Multi-view active learning – The intuition – The Co-Testing family of algorithms – Empirical evaluation • Robust multi-view learning • View validation as meta-learning • Related Work • Contributions • Future work

A Simple Multi-View Problem Salary • Features: – salary – office number 50K • Concept: Is Faculty ? – View-1 : salary > 50 K – View-2: office < 300 Office 300 GOAL: minimize amount of labeled data

Office ? ? Unlabeled Examples ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? Co-Testing ? Salary ? ? ? ? Office Labeled Examples Salary

Office ? Unlabeled Examples ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? Co-Testing ? Salary ? ? ? ? Office Labeled Examples Salary

Office ? Unlabeled Examples ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? Co-Testing ? Salary ? ? ? ? Office Labeled Examples Salary

The Co-Testing Family of Algorithms • REPEAT – Learn one hypothesis in each view – Query one of the contention points (CP) » • Algorithms differ by: – output hypothesis: winner-takes-all , majority / weighted vote – query selection strategy: • Naïve: randomly chosen CP • Conservative: equal confidence CP • Aggressive: maximum confidence CP »

When does Co-Testing work? Assumptions: • 1. Uncorrelated views for any <x 1 ,x 2 ,L> : given L , x 1 and x 2 are uncorrelated • views unlikely to make same mistakes => contention points • 2. Compatible views • perfect learning in both views • contention points are fixable mistakes • under these assumptions , there are classes of learning problems for which Co-Testing converges faster than single-view active learners

Experiments: four real-world domains Ad Parse Courses Wrapper Ad Parse Courses Wrapper IB C4.5 Naïve-Bayes Stalker IB C4.5 Naïve-Bayes Stalker Random Sampling Random Sampling [Kushmerick ‘99] [Marcu et al. ‘00] [Blum+Mitchell ‘98] [Kushmerick ‘00] Uncertainty Sampling Uncertainty Sampling - remove advertisements - learn shift-reduce parser that - discriminates between course - extract relevant Query-by-Committee Query-by-Committee -“is this image an ad?” converts Japanese discourse tree homepages and other pages data from Web pages Query-by-Boosting Query-by-Boosting into an equivalent English one Query-by-Bagging Query-by-Bagging Naïve Co-Testing Naïve Co-Testing Conservative Co-Testing Conservative Co-Testing Aggressive Co-Testing Aggressive Co-Testing wins works cannot-be-applied

Main Application: Wrapper Induction • Extract phone number : find its start & end … Hilton <p> Phone: <b> (211) 111-1111 </b> Fax: (211) 121-1… SkipTo ( Phone : <b> ) SkipTo ( </b> ) … Phone (toll free) : <i> (800) 171-1771 </i> Fax: (800) 777-1… SkipTo ( Phone ) SkipTo ( Html ) SkipTo ( Html )

Co-Testing for Wrapper Induction • Views: tokens before & after extract. point … Hilton <p> Phone: <b> (211) 111-1111 </b> Fax: <b> (211) … SkipTo ( Phone ) SkipTo ( <b> ) BackTo ( Fax ) BackTo ( ( Nmb ) …Motel 6 <p> Phone : <b> (311) 101-1110 </b> Fax: <b> (311) … …Motel 6 <p> Phone : <b> (311) 101-1110 </b> Fax: <b> (311) … … Phone (tool free) : <i> (800) 171-1771 </i> Fax: <b> (111) … … Phone (tool free) : <i> (800) 171-1771 </i> Fax: <b> (111) …

Results on 33 tasks: 2 rnd exs + queries Random sampling Tasks 20 15 10 5 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18+ 18+ Queries until 100% accuracy

Results on 33 tasks: 2 rnd exs + queries Naïve Co-Testing Random sampling Tasks 20 15 10 5 0 1 3 5 7 9 11 13 15 17 18+ Queries until 100% accuracy

Results on 33 tasks: 2 rnd exs + queries Aggressive Co-Testing Naïve Co-Testing Random sampling Tasks 20 15 10 5 0 18+ 1 3 5 7 9 11 13 15 17 Queries until 100% accuracy

Co-Testing vs. Single-View Sampling Aggressive Co-Testing Query-by-Bagging Tasks 25 20 15 10 5 0 18+ 1 3 5 7 9 11 13 15 17 Queries until 100% accuracy

First Contribution Co-Testing : multi-view active learning • Querying contention points • Converges faster than single-view � variety of domains & base learners

Outline • Multi-view active learning • Robust multi-view learning – motivation – Co-EMT = active + semi-supervised learning – robustness to assumption violations • View validation as meta-learning • Related Work • Contributions • Future work

Motivation • Active learning: – queries only the most informative examples – ignores all remaining (unlabeled) examples Semi-supervised learning (previous MVL MVL ): • few labeled + many unlabeled examples – • unlabeled examples: model examples’ distribution • use this model to boost accuracy of small training set • Best of both worlds: 1. Active: make queries 2. Semi-supervised: use remaining (unlabeled) exs.

Co-EMT = Co-Testing + Co-EM • Given: – views V 1 & V 2 Semi-supervised MVL MVL – L & U , sets of labeled & unlabeled examples - few labeled + many unlabeled exs • Co-Testing Co-EMT - uses unlabeled exs to bootstrap views from each other REPEAT - use Co-EM( L , U ) to learn h 1 and h 2 – use labeled examples in L to learn h 1 and h 2 ≠ – query contention point: h 1 ( u ) h 2 ( u )

The Co-EMT Synergy 1. Co-Testing boosts Co-EM : better examples – stand-alone Co-EM uses random examples Co-Testing provides more informative examples – 2. Co-EM helps Co-Testing : better hypotheses – stand-alone Co-Testing uses only labeled exs Co-EM also exploits unlabeled examples –

Two real-world domains Co-Training Co-Testing ADS 9 8 7 6 5 4 error rate (%) semi-supervised EM Co-EMT Co-EM COURSES 5.5 5 4.5 4 3.5 error rate (%)

Semi-supervised MVL MVL : bootstrapping views Task: is Web page course homepage ( + ) or not ( - ) ? V2 : words in hyperlinks V1 : words in pages … Spring teaching … … favorite class … … my favorite class …

Assumption: compatible, independent views

Incompatible views CS-511: Neural Nets … neural nets … Neural nets papers: … Neural nets papers: … … neural nets … … neural nets …

Correlated views: domain clumpiness A.I. clump Theory clump Systems clump Faculty clump Students clump Admin clump

A Controlled Experiment clumps per class 4 Co-EM Co-Training 2 EM 1 0 10 20 30 40 incompatibility (%)

Co-EMT is robust ! clumps per class Co-EMT 4 Co-EM Co-Training 2 EM 1 0 10 20 30 40 incompatibility (%)

Second Contribution Co-EMT : robust multi-view learning interleave active & semi-supervised MVL MVL •

Outline • Multi-view active learning • Robust multi-view learning • View validation as meta-learning – Motivation – Adaptive view validation – Empirical results • Related Work • Contributions • Future work

Motivation: Wrapper Induction Aggressive Co-Testing One inadequate view: In MVL MVL , the same views may be: Example: Domains - V1: 100% accurate 12 - V2: 53% accurate 10 8 6 • adequate for some tasks 4 2 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18+ • inadequate for other tasks Queries until 100% accuracy

The Need for View Validation • Not only for wrapper induction: • Speech recognition: sound vs. lip motion • Task-1: recognize Tom Brokaw ’s speech • Task-2: recognize Ozzy Osbourne ’s speech • ... • Web page classification: hyperlink vs. page words • Task-1: terrorism / economics news • Task-2: faculty / student homepage • ... • Solution: meta-learning • from past experiences, learn to … • … predict whether MVL MVL is adequate for new, unseen task

Meta-learner: Adaptive View Validation • GIVEN – labeled tasks [ Task 1 , L 1 ], [ Task 2 , L 2 ], …, [ Task n , L n ] • FOR EACH Task i DO – generate view validation example e i = < Meta-F1, Meta-F2, … , L i > • train C4.5 on e 1 , e 2 , … , e n For each new, unseen task use learned decision tree to predict whether MVL MVL is adequate for task.

Recommend

More recommend

Explore More Topics

Stay informed with curated content and fresh updates.