SLIDE 1

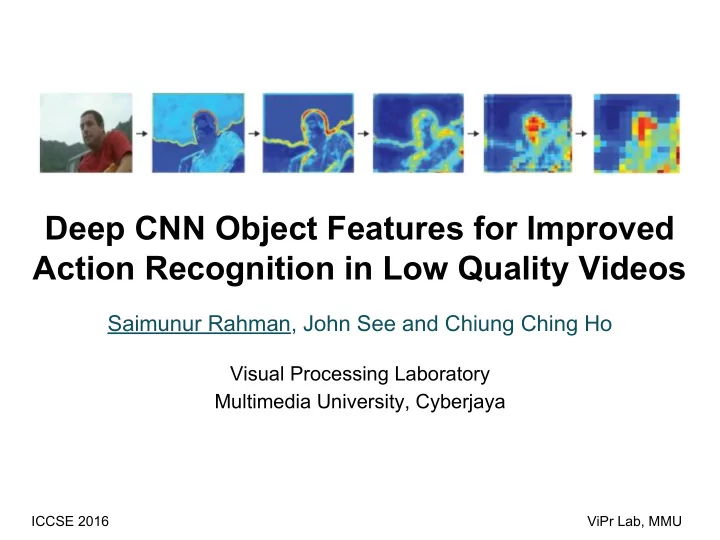

Deep CNN Object Features for Improved Action Recognition in Low Quality Videos

Saimunur Rahman, John See and Chiung Ching Ho

Visual Processing Laboratory, Multimedia University, Cyberjaya

ICCSE 2016 ViPr Lab, MMU

Figure: Original frames and HOG visualizations at original resolution (Orig. Res.), CRF 40 and CRF 50

Figure: VGG-16 CNN model and feature maps in the convolutional (Conv.) layers

Figure: Sample low-quality videos

Class-specific CRF values for UCF-11: http://saimunur.github.io/YouTube-LQ-CRFs.txt