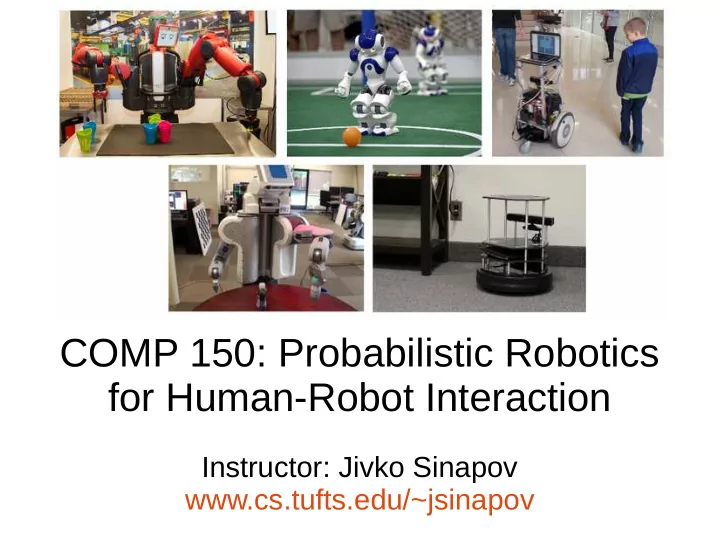

COMP 150: Probabilistic Robotics for Human-Robot Interaction
Instructor: Jivko Sinapov
www.cs.tufts.edu/~jsinapov

Language Acquisition
“How would you describe this object?”
“It is a small orange spray can”
“My model of the word ‘orange’ has improved!”

Something fun...

Announcements

Project Deadlines
● Project Presentations: Apr 23 and 25
● Final Report + Deliverables: May 10
● Deliverables:
  – Presentation slides + videos
  – Final Report (PDF)
  – Source code (link to GitHub repositories)

Presentation Guidelines
● Length:
  – Individual projects: 5-minute talk + 2 minutes for questions
  – Team projects: 8-minute talk + 3 minutes for questions
● Practice! Time your presentation when you practice, and use a timer during the actual presentation as well
● My advice: find another group and practice presenting to each other
● Format: Google Slides (so that we don’t have to switch computers)

The Turing Test

The First ChatBot (~1966)

ELIZA
● http://psych.fullerton.edu/mbirnbaum/psych101/Eliza.htm

Discussion: what is missing from programs like ELIZA?

Natural Language Processing
● The study of algorithms and data structures used to manipulate text and text-like data
● Applications in information retrieval, web search, dialogue agents, text mining, etc.
● Traditionally, not concerned with connecting semantic representations to the real world

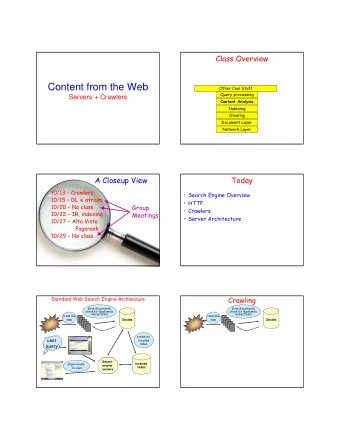

Example: Computing Parse Trees

Example: Document Classification
https://abbyy.technology/_media/en:features:classification-scheme.png

Example: Word Embeddings
https://image.slidesharecdn.com/introductiontowordembeddings-160405062343/95/a-simple-introduction-to-word-embeddings-5-638.jpg?cb=1494520542

The Symbol Grounding Problem
“How can the semantic interpretation of a formal symbol system be made intrinsic to the system, rather than just parasitic on the meanings in our heads? How can the meanings of the meaningless symbol tokens, manipulated solely on the basis of their (arbitrary) shapes, be grounded in anything but other meaningless symbols?”
- Stevan Harnad, 1990

Deb Roy, “Grounding Language in the World: Schema Theory Meets Semiotics” (2005)

Circular Definitions

Grounding

Sensor Projections

Sensor Projections
Input Image → Color Histogram

Transformer Projection

Transformer Projection
Color Histogram → Entropy of Histogram

Categorizer
Entropy of Histogram → “Multicolored”
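The projection chain on these slides (color histogram, then entropy of the histogram, then the category “Multicolored”) can be sketched in a few lines. This is a minimal illustration, not Roy's implementation: the function names and the 2-bit threshold are hypothetical choices made only for the example.

```python
import numpy as np

def histogram_entropy(hist):
    """Transformer projection: Shannon entropy (in bits) of a histogram."""
    p = np.asarray(hist, dtype=float)
    p = p / p.sum()                      # normalize counts to probabilities
    nonzero = p[p > 0]                   # treat 0 * log(0) as 0
    return float(-(nonzero * np.log2(nonzero)).sum())

def is_multicolored(hist, threshold_bits=2.0):
    """Categorizer: map the entropy value to a discrete category.

    threshold_bits is an assumed value; in practice it would be tuned or learned.
    """
    return histogram_entropy(hist) > threshold_bits

# Two toy 64-bin histograms: all mass in one bin vs. spread evenly over all bins.
single_color = [1.0] + [0.0] * 63
uniform = [1.0 / 64] * 64
```

A histogram concentrated in one bin has entropy 0 bits, while one spread evenly over 64 bins has entropy 6 bits, so only the second crosses the assumed threshold.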

Action Projector

Schemas for Actions

Schemas for Objects

Spatial Relations

Deb Roy’s Definition of Grounding
● “I define grounding as a causal-predictive cycle by which an agent maintains beliefs about its world.” (p. 8)
● “An agent’s basic grounding cycle cannot require mediation by another agent.” (p. 9)
● “An autonomous robot simply cannot afford to have a human in the loop interpreting sensory data on its behalf.” (p. 9)

● “Cyclic interactions between robots and their environment, when well designed, enable a robot to learn, verify, and use world knowledge to pursue goals. I believe we should extend this design philosophy to the domain of language and intentional communication.” (p. 5)

● “causality alone is not a sufficient basis for grounding beliefs. Grounding also requires prediction of the future with respect to the agent’s own actions.” (p. 10)
● “The problem with ignoring the predictive part of the grounding cycle has sometimes been called the ‘homunculus problem’.”

Take Home Message
Language should be grounded in terms of the robot’s own perceptual and sensorimotor capabilities

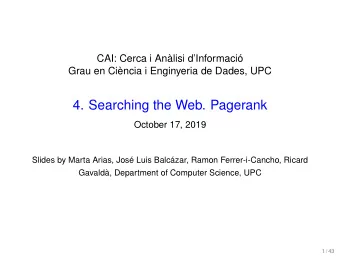

Thomason, J., Sinapov, J., Svetlik, M., Stone, P., and Mooney, R. (2016). Learning Multi-Modal Grounded Linguistic Semantics by Playing “I Spy”. In Proceedings of the 2016 International Joint Conference on Artificial Intelligence (IJCAI).

Motivation: Grounded Language Learning
“Robot, fetch me the green empty bottle”

Exploratory Behaviors in our Robot

Video

Sensorimotor Feature Extraction
[Figure: joint efforts (haptics) plotted over time]

Sensorimotor Contexts
Modalities: proprioception, haptics, audio, shape, color, VGG
Behaviors: look, grasp, lift, hold, lower, drop, push, press

Feature Extraction: Color
Object Segmentation → Color Histogram (4 x 4 x 4 = 64 bins)
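As a rough sketch of the color feature described on this slide, the code below quantizes each RGB channel into 4 levels and counts the pixels in each of the 4 x 4 x 4 = 64 joint bins. The function name, the optional boolean `mask` standing in for the object segmentation, and the bin ordering are assumptions made for illustration; the slides do not specify these details.

```python
import numpy as np

def color_histogram(image, mask=None, bins=4):
    """Normalized joint RGB histogram with bins**3 entries (64 for bins=4).

    image: H x W x 3 array of 8-bit values.
    mask:  optional H x W boolean array selecting the object's pixels
           (a stand-in for the segmentation step; hypothetical interface).
    """
    image = np.asarray(image)
    if mask is None:
        pixels = image.reshape(-1, 3)
    else:
        pixels = image[np.asarray(mask, dtype=bool)]   # N x 3 object pixels
    # Map each channel value 0..255 to a bin index 0..bins-1.
    idx = pixels.astype(np.int64) * bins // 256
    # Combine the three per-channel indices into one joint bin index.
    flat = (idx[:, 0] * bins + idx[:, 1]) * bins + idx[:, 2]
    hist = np.bincount(flat, minlength=bins ** 3).astype(float)
    return hist / max(hist.sum(), 1.0)

# Toy 2 x 2 image that is uniformly orange (R=255, G=128, B=0).
orange = np.zeros((2, 2, 3), dtype=np.uint8)
orange[...] = (255, 128, 0)
orange_hist = color_histogram(orange)
```

For the uniformly orange toy image, all of the mass lands in a single bin, which is the kind of low-entropy signature a word like “orange” could be grounded in.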

Feature Extraction: Shape
3D Object Point Cloud → Histogram of Shape Features

Feature Extraction: Haptics
Joint-torque values for all joints → Joint-Torque Features

Feature Extraction: Audio
Audio spectrogram → Spectro-temporal Features

Feature Extraction: VGG

Data from a single exploratory trial
Modalities: proprioception, haptics, audio, shape, color, VGG
Behaviors: look, grasp, lift, hold, lower, drop, push, press
x 5 per object

Category Recognition Overview
Interaction with Object → Sensorimotor Feature Extraction → Category Recognition Models → Category Estimates (Red? . . . Container? Empty?)
Sinapov, J., Schenck, C., and Stoytchev, A. (2014). Learning Relational Object Categories Using Behavioral Exploration and Multimodal Perception. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA).

Key Questions
How can the robot learn object-related words from everyday human users?
Do human users use non-visual object descriptors when referring to objects?

Object Exploration Dataset
32 common household and office items
Each object was explored a total of 5 times with 7 different behaviors
The robot perceived objects using the visual, auditory, and haptic sensory modalities
Thomason, J., Sinapov, J., Svetlik, M., Stone, P., and Mooney, R. (2016). Learning Multi-Modal Grounded Linguistic Semantics by Playing “I Spy”. In Proceedings of the 2016 International Joint Conference on Artificial Intelligence (IJCAI).

Our attempt: I-Spy game

Learning Words via Game-play
Human: “an empty metallic aluminum container”

Semantic Parsing

Example Words for an Object

Learning Words via Game-play

Learning Words via Game-play
Human: “a tall blue cylindrical container”

Learning Words via Game-play
Robot: “open half-full container”

Asking Verification Questions

Results

F-measure improvement as a result of adding non-visual modalities

WORD          IMPROVEMENT
“can”         0.857
“tall”        0.516
“half-full”   0.463
. . .         . . .
“pink”        0
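For reference, the sketch below shows how an F-measure and an improvement in it are computed. It assumes the balanced F1 form (the harmonic mean of precision and recall); the slide says only “F-measure”. The counts in the example are made up for illustration and are not taken from the experiment.

```python
def f_measure(tp, fp, fn):
    """Balanced F-measure (F1) from true-positive, false-positive, false-negative counts."""
    if tp == 0:
        return 0.0                      # no correct positives: define F1 as 0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Hypothetical counts for one word's classifier, before and after adding
# non-visual modalities (illustrative numbers only).
f_vision_only = f_measure(tp=3, fp=1, fn=2)
f_multimodal = f_measure(tp=5, fp=1, fn=0)
improvement = f_multimodal - f_vision_only
```

An improvement of 0, as reported for “pink”, means the non-visual modalities left that word's F-measure unchanged.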

Summary of Experiment
● The robot learned over 80 words through interactive game play
● The robot's word representations were grounded in multiple behaviors and sensory modalities
● Future Work:
  – Active action selection when classifying a new object
  – Active action selection when learning new words
  – Actively seek humans out for help with learning about objects

“Opportunistic” Active Learning
Thomason, J., Padmakumar, A., Sinapov, J., Hart, J., Stone, P., and Mooney, R. (2017). Opportunistic Active Learning for Grounding Natural Language Descriptions. In Proceedings of the 1st Annual Conference on Robot Learning (CoRL 2017).

What actions should the robot perform when learning a new word?
● Baseline: perform all actions on a set of labeled objects and estimate which ones work well
● But can we do better?
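The baseline above can be sketched as follows: for each behavior, estimate how well its features predict the word's labels on the labeled objects, then rank the behaviors by that estimate. The leave-one-out nearest-neighbor scorer, the function names, and the toy data are assumptions chosen to keep the example short; they are not the classifiers or features used in the cited work.

```python
import numpy as np

def loo_accuracy(features, labels):
    """Leave-one-out 1-nearest-neighbor accuracy for one behavior's features."""
    X = np.asarray(features, dtype=float)
    y = np.asarray(labels)
    # Pairwise Euclidean distances between all labeled objects.
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)      # an object may not vote for itself
    nearest = dists.argmin(axis=1)
    return float((y[nearest] == y).mean())

def rank_behaviors(features_by_behavior, labels):
    """Score every behavior on the labeled objects; best behavior first."""
    scores = {b: loo_accuracy(X, labels) for b, X in features_by_behavior.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

# Toy data: four labeled objects for a hypothetical word such as "heavy".
labels = [1, 1, 0, 0]
features_by_behavior = {
    "look": [[0.0], [1.0], [0.1], [1.1]],   # visual feature unrelated to the word
    "lift": [[5.0], [5.2], [1.0], [1.1]],   # haptic feature that separates the labels
}
ranking = rank_behaviors(features_by_behavior, labels)
```

On this toy data the ranking places "lift" ahead of "look". The cost of the baseline is that every behavior must be performed on every labeled object before any estimate is available.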

Sensorimotor Word Embeddings
Sinapov, J., Schenck, C., and Stoytchev, A. (2014). Learning Relational Object Categories Using Behavioral Exploration and Multimodal Perception. In Proceedings of the 2014 IEEE International Conference on Robotics and Automation (ICRA).

Behavior Scores for Words

Word Embeddings
Thomason, J., Sinapov, J., Stone, P., and Mooney, R. (2018). Guiding Exploratory Behaviors for Multi-Modal Grounding of Linguistic Descriptions. In Proceedings of the 32nd Conference of the Association for the Advancement of Artificial Intelligence (AAAI).
