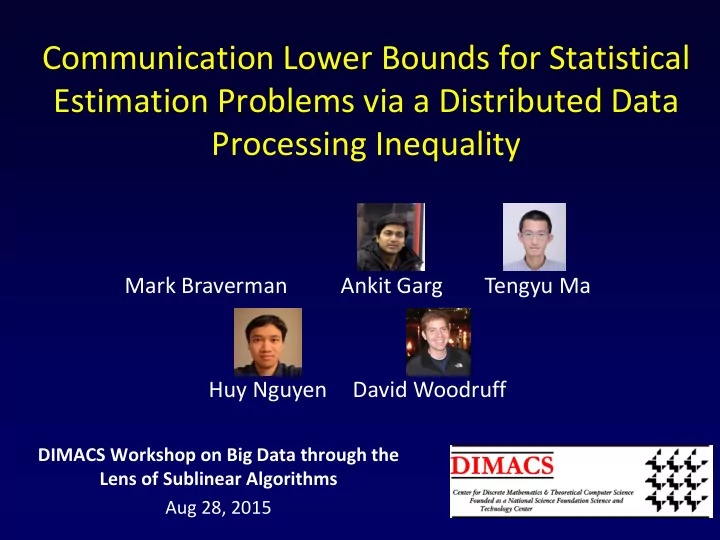

Communication Lower Bounds for Statistical Estimation Problems via a Distributed Data Processing Inequality
Mark Braverman, Ankit Garg, Tengyu Ma, Huy Nguyen, David Woodruff
DIMACS Workshop on Big Data through the Lens of Sublinear Algorithms, Aug 28, 2015

Distributed mean estimation
Big Data! Distributed storage and processing.
[Figure: machines, each holding a small portion of the data, write to a shared blackboard.]
Statistical estimation:
– Unknown parameter $\theta$.
– Inputs to machines: i.i.d. data points $\sim D_\theta$.
– Output: estimator $\hat\theta$.
Objectives:
– Low communication $C = |\Pi|$.
– Small loss $R := \mathbb{E}\,\|\hat\theta - \theta\|^2$.

Distributed sparse Gaussian mean estimation
Goal: estimate $(\theta_1, \dots, \theta_d)$.
• Ambient dimension $d$.
• Sparsity parameter $k$: $\|\theta\|_0 \le k$.
• Number of machines $m$.
• Each machine holds $n$ samples.
• Standard deviation $\sigma$.
• Thus each sample $X_j^{(t)}$ is a vector $X_j^{(t)} \sim \left(\mathcal{N}(\theta_1, \sigma^2), \dots, \mathcal{N}(\theta_d, \sigma^2)\right) \in \mathbb{R}^d$.

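Distributed sparse Gaussian mean estimation
Goal: estimate $(\theta_1, \dots, \theta_d)$. A higher value of each parameter makes estimation:
• Ambient dimension $d$: harder.
• Sparsity parameter $k$ ($\|\theta\|_0 \le k$): harder.
• Number of machines $m$: easier*.
• Samples per machine $n$: easier.
• Standard deviation $\sigma$: harder.
• Each sample $X_j^{(t)}$ is a vector $X_j^{(t)} \sim \left(\mathcal{N}(\theta_1, \sigma^2), \dots, \mathcal{N}(\theta_d, \sigma^2)\right) \in \mathbb{R}^d$.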
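The setup above can be simulated in a few lines. The following is a minimal sketch, not a protocol from the talk: it assumes a naive baseline in which every machine writes its full local mean to the blackboard, and the function names and parameter values are illustrative choices only.

```python
import random

def sample_machine(theta, n, sigma, rng):
    """Draw n samples; coordinate i of each sample is N(theta[i], sigma^2)."""
    return [[rng.gauss(t, sigma) for t in theta] for _ in range(n)]

def local_mean(samples):
    """Coordinate-wise average of one machine's samples."""
    n, d = len(samples), len(samples[0])
    return [sum(row[i] for row in samples) / n for i in range(d)]

def naive_estimate(theta, m, n, sigma, seed=0):
    """Assumed baseline: each of m machines sends its local mean, then average."""
    rng = random.Random(seed)
    d = len(theta)
    means = [local_mean(sample_machine(theta, n, sigma, rng)) for _ in range(m)]
    return [sum(mu[i] for mu in means) / m for i in range(d)]

def squared_loss(estimate, theta):
    """Squared Euclidean distance between the estimate and the true mean."""
    return sum((a - b) ** 2 for a, b in zip(estimate, theta))

# Illustrative parameters (assumptions, not values from the slides).
d, k, m, n, sigma = 20, 2, 5, 40, 1.0
theta = [1.0] * k + [0.0] * (d - k)      # a k-sparse mean vector
theta_hat = naive_estimate(theta, m, n, sigma)
loss = squared_loss(theta_hat, theta)
print(loss)
```

This baseline ignores sparsity and sends one real number per coordinate per machine, so its communication grows with $md$; its expected loss is $\sigma^2 d/(nm)$.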

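Distributed sparse Gaussian mean estimation
• Main result: if $|\Pi| = C$, then $R \ge \Omega\!\left(\max\!\left(\frac{\sigma^2 d k}{n C},\ \frac{\sigma^2 k}{n m}\right)\right)$. The second term, $\frac{\sigma^2 k}{nm}$, is the statistical limit.
• Notation: $d$: dimension; $k$: sparsity; $m$: machines; $n$: samples per machine; $\sigma$: standard deviation; $R$: squared loss.
• Tight up to a $\log d$ factor [GMN14]. Tight up to a constant factor in the dense case.
• For optimal performance, $C \gtrsim md$ (not $mk$) is needed!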
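To see why $C \gtrsim md$ is the threshold, the two terms of the bound can be evaluated directly. This is a small sketch with the hidden constant in $\Omega(\cdot)$ dropped; the function name and the numbers are illustrative assumptions.

```python
def loss_lower_bound(d, k, m, n, sigma, C):
    """Return (communication term, statistical term, their max), constants omitted."""
    comm_term = sigma ** 2 * d * k / (n * C)   # sigma^2 * d * k / (n * C)
    stat_term = sigma ** 2 * k / (n * m)       # sigma^2 * k / (n * m)
    return comm_term, stat_term, max(comm_term, stat_term)

# Illustrative parameters (assumptions, not values from the slides).
d, k, m, n, sigma = 1000, 10, 50, 100, 1.0

# With C = m * d the communication term matches the statistical limit.
at_md = loss_lower_bound(d, k, m, n, sigma, C=m * d)

# With only C = m * k bits the communication term dominates by a factor d / k.
at_mk = loss_lower_bound(d, k, m, n, sigma, C=m * k)

print(at_md, at_mk)
```

The two terms coincide exactly when $C = md$, which is the sense in which communication proportional to $mk$ is not enough.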

Prior work (partial list)
• [Zhang-Duchi-Jordan-Wainwright'13]: the case when $d = 1$ and general communication; and the dense case for simultaneous-message protocols.
• [Shamir'14]: implies the result for $k = 1$ in a restricted communication model.
• [Duchi-Jordan-Wainwright-Zhang'14, Garg-Ma-Nguyen'14]: the dense case (up to logarithmic factors).
• A lot of recent work on communication-efficient distributed learning.

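Reduction from Gaussian mean detection
• $R \ge \Omega\!\left(\max\!\left(\frac{\sigma^2 d k}{n C},\ \frac{\sigma^2 k}{n m}\right)\right)$
• Gaussian mean detection
– A one-dimensional problem.
– Goal: distinguish between $\mu_0 = \mathcal{N}(0, \sigma^2)$ and $\mu_1 = \mathcal{N}(\delta, \sigma^2)$.
– Each player gets $n$ samples.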

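Reduction from Gaussian mean detection
• Assume $R \ll \max\!\left(\frac{\sigma^2 d k}{n C},\ \frac{\sigma^2 k}{n m}\right)$.
• Distinguish between $\mu_0 = \mathcal{N}(0, \sigma^2)$ and $\mu_1 = \mathcal{N}(\delta, \sigma^2)$.
• Theorem: If one can attain $R \le \frac{1}{16} k \delta^2$ in the estimation problem using $C$ communication, then one can solve the detection problem at $\sim C/d$ min-information cost.
• Using $\delta^2 \ll \sigma^2 d/(C n)$, get detection using $I \ll \frac{\sigma^2}{n \delta^2}$ min-information cost.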
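The parameter choice on this slide can be unpacked as the following chain. It is a sketch that suppresses constants and takes as given the detection lower bound $\mathrm{minIC} \gtrsim \sigma^2/(n\delta^2)$, which the remaining slides argue for.

```latex
\begin{align*}
R \le \tfrac{1}{16} k\delta^2 \text{ with communication } C
  &\;\Longrightarrow\; \text{detection with } \mathrm{minIC} \sim C/d, \\
\text{detection requires } \mathrm{minIC} \gtrsim \frac{\sigma^2}{n\delta^2}
  &\;\Longrightarrow\; \frac{C}{d} \gtrsim \frac{\sigma^2}{n\delta^2}
  \;\Longleftrightarrow\; \delta^2 \gtrsim \frac{\sigma^2 d}{nC}, \\
\text{so for } \delta^2 \text{ a small constant times } \frac{\sigma^2 d}{nC}:\quad
  R > \tfrac{1}{16} k\delta^2
  &\;\Longrightarrow\; R \ge \Omega\!\left(\frac{\sigma^2 d k}{nC}\right).
\end{align*}
```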

The detection problem
• Distinguish between $\mu_0 = \mathcal{N}(0, 1)$ and $\mu_1 = \mathcal{N}(\delta, 1)$.
• Each player gets $n$ samples.
• Want this to be impossible using $I \ll \frac{1}{n \delta^2}$ min-information cost.

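The detection problem
• Instead of distinguishing between $\mu_0 = \mathcal{N}(0, 1)$ and $\mu_1 = \mathcal{N}(\delta, 1)$ with $n$ samples per player, distinguish between $\mu_0 = \mathcal{N}(0, \frac{1}{n})$ and $\mu_1 = \mathcal{N}(\delta, \frac{1}{n})$.
• Each player gets one sample (the average of its $n$ samples, which is a sufficient statistic for the mean).
• Want this to be impossible using $I \ll \frac{1}{n \delta^2}$ min-information cost.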

The detection problem
• By scaling everything by $\sqrt{n}$ (and replacing $\delta$ with $\delta\sqrt{n}$):
• Distinguish between $\mu_0 = \mathcal{N}(0, 1)$ and $\mu_1 = \mathcal{N}(\delta, 1)$.
• Each player gets one sample.
• Want this to be impossible using $I \ll \frac{1}{\delta^2}$ min-information cost.
• Tight (for $m$ large enough; otherwise the task is impossible).
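The two normalization steps (average the $n$ samples, then rescale by $\sqrt{n}$) can be written out as below. This is a minimal sketch; the function names are illustrative, not from the talk.

```python
import math

def reduce_to_single_sample(samples):
    """Average n unit-variance samples (variance 1/n), then scale by sqrt(n).

    If the inputs are N(delta, 1), the output is N(delta * sqrt(n), 1).
    """
    n = len(samples)
    return math.sqrt(n) * (sum(samples) / n)

def rescaled_gap(delta, n):
    """Mean gap of the single-sample problem after rescaling."""
    return delta * math.sqrt(n)

# The target 1 / (n * delta^2) becomes 1 / delta'^2 with delta' = delta * sqrt(n).
n, delta = 25, 0.2
before = 1.0 / (n * delta ** 2)
after = 1.0 / rescaled_gap(delta, n) ** 2

print(reduce_to_single_sample([1.0, 2.0, 3.0, 6.0]), before, after)
```

Because the rescaled statistic has unit variance, the $n$-sample problem with gap $\delta$ and the one-sample problem with gap $\delta\sqrt{n}$ have the same information-cost target.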

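Information cost
[Figure: $V$ determines $\mu_V = \mathcal{N}(\delta V, 1)$; the players hold $X_1 \sim \mu_v$, $X_2 \sim \mu_v$, …, $X_m \sim \mu_v$ and write to the blackboard $\Pi$.]
$IC(\pi) := I(\Pi; X_1 X_2 \dots X_m)$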

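Min-Information cost
[Figure: $V$ determines $\mu_V = \mathcal{N}(\delta V, 1)$; the players hold $X_1 \sim \mu_v$, $X_2 \sim \mu_v$, …, $X_m \sim \mu_v$ and write to the blackboard $\Pi$.]
$\mathrm{minIC}(\pi) := \min_{v \in \{0,1\}} I(\Pi; X_1 X_2 \dots X_m \mid V = v)$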

Min-Information cost
$\mathrm{minIC}(\pi) := \min_{v \in \{0,1\}} I(\Pi; X_1 X_2 \dots X_m \mid V = v)$
• We will want this quantity to be $\Omega\!\left(\frac{1}{\delta^2}\right)$.
• Warning: it is not the same thing as $I(\Pi; X_1 X_2 \dots X_m \mid V) = \mathbb{E}_{v \sim V}\, I(\Pi; X_1 X_2 \dots X_m \mid V = v)$, because one case can be much smaller than the other.
• In our case, the need to use $\mathrm{minIC}$ instead of $IC$ arises because of the sparsity.
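The warning can be made concrete with a toy discrete example, which is an assumption for illustration and not the Gaussian setting of the talk: a single player whose input $X$ is constant when $V = 0$ and a fair bit when $V = 1$, with a protocol that simply writes $X$ on the blackboard. Then $I(\Pi; X \mid V = v) = H(X \mid V = v)$.

```python
import math

def entropy(probs):
    """Shannon entropy in bits of a discrete distribution."""
    return sum(-p * math.log2(p) for p in probs if p > 0)

# Toy model (assumed): the transcript reveals X, so the conditional
# information cost for each v equals the entropy of X given V = v.
info_given_v = {
    0: entropy([1.0]),        # X is constant when V = 0
    1: entropy([0.5, 0.5]),   # X is a fair bit when V = 1
}
prior = {0: 0.5, 1: 0.5}

avg_ic = sum(prior[v] * info_given_v[v] for v in prior)  # I(Pi; X | V)
min_ic = min(info_given_v.values())                      # minIC

print(avg_ic, min_ic)
```

Here the averaged quantity is half a bit while the minimum is zero, so a lower bound on the average says nothing about the minimum.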

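Strong data processing inequality
[Figure: $V$ determines $\mu_V = \mathcal{N}(\delta V, 1)$; the players hold $X_1 \sim \mu_v$, $X_2 \sim \mu_v$, …, $X_m \sim \mu_v$ and write to the blackboard $\Pi$.]
Fact: $|\Pi| \ge I(\Pi; X_1 X_2 \dots X_m) = \sum_i I(\Pi; X_i \mid X_{<i})$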

Strong data processing inequality
• $\mu_v = \mathcal{N}(\delta V, 1)$; suppose $V \sim B_{1/2}$.
• For each $i$, $V - X_i - \Pi$ is a Markov chain.
• Intuition: "$X_i$ contains little information about $V$; no way to learn this information except by learning a lot about $X_i$".
• Data processing: $I(V; \Pi) \le I(X_i; \Pi)$.
• Strong data processing: $I(V; \Pi) \le \beta \cdot I(X_i; \Pi)$ for some $\beta = \beta(\mu_0, \mu_1) < 1$.

Strong data processing inequality
• $\mu_v = \mathcal{N}(\delta V, 1)$; suppose $V \sim B_{1/2}$.
• For each $i$, $V - X_i - \Pi$ is a Markov chain.
• Strong data processing: $I(V; \Pi) \le \beta \cdot I(X_i; \Pi)$ for some $\beta = \beta(\mu_0, \mu_1) < 1$.
• In this case ($\mu_0 = \mathcal{N}(0, 1)$, $\mu_1 = \mathcal{N}(\delta, 1)$): $\beta(\mu_0, \mu_1) \sim \frac{I(V; \operatorname{sign}(X_i))}{I(X_i; \operatorname{sign}(X_i))} \sim \delta^2$
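The ratio in the last bullet can be evaluated numerically to check the $\delta^2$ scaling. This is a sketch under the slide's assumptions ($V \sim B_{1/2}$, $X \sim \mathcal{N}(\delta V, 1)$); it computes only this one ratio for the sign statistic, not the SDPI constant itself, and the function names are illustrative.

```python
import math

def binary_entropy(p):
    """Entropy in bits of a Bernoulli(p) variable."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def std_normal_cdf(x):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def sign_ratio(delta):
    """I(V; sign(X)) / I(X; sign(X)) for V ~ B(1/2), X ~ N(delta * V, 1)."""
    p0 = 0.5                      # P(X > 0 | V = 0)
    p1 = std_normal_cdf(delta)    # P(X > 0 | V = 1)
    p_mix = 0.5 * (p0 + p1)       # P(X > 0)
    info_v_sign = binary_entropy(p_mix) - 0.5 * (binary_entropy(p0) + binary_entropy(p1))
    info_x_sign = binary_entropy(p_mix)   # sign(X) is a function of X
    return info_v_sign / info_x_sign

for delta in (0.2, 0.1, 0.05):
    print(delta, sign_ratio(delta) / delta ** 2)
```

The printed quotient stays roughly constant as $\delta$ shrinks, which is what "$\sim \delta^2$" asserts for this statistic.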

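"Proof"
• $\mu_v = \mathcal{N}(\delta V, 1)$; suppose $V \sim B_{1/2}$.
• Strong data processing: $I(V; \Pi) \le \delta^2 \cdot I(X_i; \Pi)$.
• We know $I(V; \Pi) = \Omega(1)$.
$|\Pi| \ge I(\Pi; X_1 X_2 \dots X_m) \gtrsim \sum_i I(\Pi; X_i) \ge \frac{1}{\delta^2} \sum_i \text{"Info } \Pi \text{ conveys about } V \text{ through player } i\text{"} \gtrsim \frac{1}{\delta^2}\, I(V; \Pi) = \Omega\!\left(\frac{1}{\delta^2}\right)$
Q.E.D.!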

Issues with the proof
• The right high-level idea.
• Two main issues:
– Not clear how to deal with additivity over coordinates.
– Dealing with $\mathrm{minIC}$ instead of $IC$.

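If the picture were this…
[Figure: $V$ determines $\mu_V = \mathcal{N}(\delta V, 1)$; only the first player depends on $V$: $X_1 \sim \mu_v$, $X_2 \sim \mu_0$, …, $X_m \sim \mu_0$; all players write to the blackboard $\Pi$.]
Then indeed $I(\Pi; V) \le \delta^2 \cdot I(\Pi; X_1)$.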

Hellinger distance
• Solution to additivity: using the Hellinger distance $\int_\Omega \left(\sqrt{f(x)} - \sqrt{g(x)}\right)^2 dx$.
• Following from [Jayram'09]: $h^2(\Pi_{V=0}, \Pi_{V=1}) \sim I(V; \Pi) = \Omega(1)$.
• $h^2(\Pi_{V=0}, \Pi_{V=1})$ decomposes into $m$ scenarios as above using the fact that $\Pi$ is a protocol.
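As a sanity check on the definition, the integral can be evaluated for the two single-sample distributions $\mathcal{N}(0,1)$ and $\mathcal{N}(\delta,1)$ and compared with the closed form $2\,(1 - e^{-\delta^2/8})$. This is a sketch using the unnormalized convention written above (some texts include an extra factor $1/2$); the integration range and step count are arbitrary choices.

```python
import math

def normal_pdf(x, mean):
    """Density of N(mean, 1) at x."""
    return math.exp(-0.5 * (x - mean) ** 2) / math.sqrt(2.0 * math.pi)

def hellinger_sq_numeric(delta, lo=-12.0, hi=12.0, steps=48000):
    """Midpoint-rule estimate of the integral of (sqrt(f) - sqrt(g))^2."""
    width = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        x = lo + (i + 0.5) * width
        diff = math.sqrt(normal_pdf(x, 0.0)) - math.sqrt(normal_pdf(x, delta))
        total += diff * diff * width
    return total

def hellinger_sq_closed(delta):
    """Closed form for two unit-variance Gaussians whose means differ by delta."""
    return 2.0 * (1.0 - math.exp(-delta ** 2 / 8.0))

print(hellinger_sq_numeric(0.5), hellinger_sq_closed(0.5))
```

For small $\delta$ the closed form behaves like $\delta^2/4$, so a single sample separates the two hypotheses by only order $\delta^2$ in squared Hellinger distance.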

$\mathrm{minIC}$
• Dealing with $\mathrm{minIC}$ is more technical. Recall:
• $\mathrm{minIC}(\pi) := \min_{v \in \{0,1\}} I(\Pi; X_1 X_2 \dots X_m \mid V = v)$
• Leads to our main technical statement: "Distributed Strong Data Processing Inequality".
Theorem: Suppose $\Omega(1) \cdot \mu_0 \le \mu_1 \le O(1) \cdot \mu_0$, and let $\beta(\mu_0, \mu_1)$ be the SDPI constant. Then $h^2(\Pi_{V=0}, \Pi_{V=1}) \le O(\beta(\mu_0, \mu_1)) \cdot \mathrm{minIC}(\pi)$.

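Putting it together
Theorem: Suppose $\Omega(1) \cdot \mu_0 \le \mu_1 \le O(1) \cdot \mu_0$, and let $\beta(\mu_0, \mu_1)$ be the SDPI constant. Then $h^2(\Pi_{V=0}, \Pi_{V=1}) \le O(\beta(\mu_0, \mu_1)) \cdot \mathrm{minIC}(\pi)$.
• With $\mu_0 = \mathcal{N}(0, 1)$, $\mu_1 = \mathcal{N}(\delta, 1)$, $\beta \sim \delta^2$, we get $\Omega(1) = h^2(\Pi_{V=0}, \Pi_{V=1}) \le \delta^2 \cdot \mathrm{minIC}(\pi)$.
• Therefore, $\mathrm{minIC}(\pi) = \Omega\!\left(\frac{1}{\delta^2}\right)$.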

Putting it together
Theorem: Suppose $\Omega(1) \cdot \mu_0 \le \mu_1 \le O(1) \cdot \mu_0$ (essential!), and let $\beta(\mu_0, \mu_1)$ be the SDPI constant. Then $h^2(\Pi_{V=0}, \Pi_{V=1}) \le O(\beta(\mu_0, \mu_1)) \cdot \mathrm{minIC}(\pi)$.
• With $\mu_0 = \mathcal{N}(0, 1)$, $\mu_1 = \mathcal{N}(\delta, 1)$:
• $\Omega(1) \cdot \mu_0 \le \mu_1 \le O(1) \cdot \mu_0$ fails!!
• Need an additional truncation step. Fortunately, the failure happens far in the tails.
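The failure of the bounded-ratio condition, and why it is confined to the tails, can be seen from the likelihood ratio $\mu_1(x)/\mu_0(x) = e^{\delta x - \delta^2/2}$. The sketch below assumes, purely for illustration, truncation to an interval $[-T, T]$; the talk does not specify the form of its truncation step.

```python
import math

def likelihood_ratio(x, delta):
    """Density ratio of N(delta, 1) to N(0, 1) at x."""
    return math.exp(delta * x - delta ** 2 / 2.0)

def tail_mass(T):
    """P(|Z| > T) for Z ~ N(0, 1)."""
    return math.erfc(T / math.sqrt(2.0))

delta, T = 0.1, 5.0   # illustrative values (assumptions)

# Unbounded on the whole real line: the ratio grows without limit in x.
far_out = likelihood_ratio(100.0, delta)

# On the assumed truncation interval the ratio stays within constant factors.
lowest = likelihood_ratio(-T, delta)
highest = likelihood_ratio(T, delta)

print(far_out, lowest, highest, tail_mass(T))
```

For $\delta T = O(1)$ the ratio on $[-T, T]$ lies between $e^{-\delta T - \delta^2/2}$ and $e^{\delta T}$, while the probability mass discarded outside the interval is tiny.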

Summary
[Diagram, reconstructed from the slide labels:]
• Strong data processing + Hellinger distance: "Only get $\delta^2$ bits toward detection per bit of $\mathrm{minIC}$" ⇒ a $\frac{1}{\delta^2}$ lower bound.
• Gaussian mean detection ($n \to 1$ sample), measured in $\mathrm{minIC}$.
• A direct sum argument ⇒ sparse Gaussian mean estimation.
• Reduction [ZDJW'13] ⇒ distributed sparse linear regression.

Distributed sparse linear regression
• Each machine gets $n$ data points of the form $(A_j, y_j)$, where $y_j = \langle A_j, \theta \rangle + w_j$, $w_j \sim \mathcal{N}(0, \sigma^2)$.
• Promised that $\theta$ is $k$-sparse: $\|\theta\|_0 \le k$.
• Ambient dimension $d$.
• Loss $R = \mathbb{E}\,\|\hat\theta - \theta\|^2$.
• How much communication is needed to achieve statistically optimal loss?
