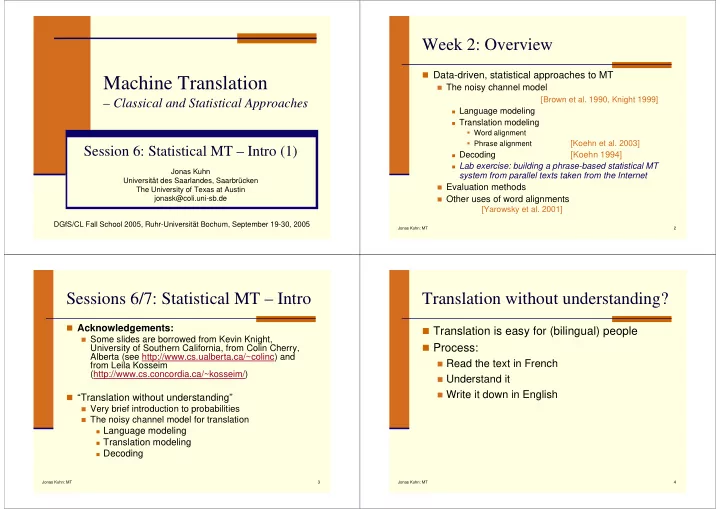

Machine Translation

– Classical and Statistical Approaches

Session 6: Statistical MT – Intro (1)

Jonas Kuhn

Universität des Saarlandes, Saarbrücken

The University of Texas at Austin

jonask@coli.uni-sb.de

DGfS/CL Fall School 2005, Ruhr-Universität Bochum, September 19-30, 2005

Jonas Kuhn: MT 2

Week 2: Overview

Data-driven, statistical approaches to MT

The noisy channel model [Brown et al. 1990, Knight 1999]

Language modeling

Translation modeling

Word alignment

Phrase alignment [Koehn et al. 2003]

Decoding [Koehn 2004]

Lab exercise: building a phrase-based statistical MT system from parallel texts taken from the Internet

Evaluation methods

Other uses of word alignments [Yarowsky et al. 2001]


Sessions 6/7: Statistical MT – Intro

Acknowledgements:

Some slides are borrowed from Kevin Knight, University of Southern California; from Colin Cherry, University of Alberta (see http://www.cs.ualberta.ca/~colinc); and from Leila Kosseim (http://www.cs.concordia.ca/~kosseim/).

“Translation without understanding”

Very brief introduction to probabilities

The noisy channel model for translation

Language modeling

Translation modeling

Decoding


Translation without understanding?

Translation is easy for (bilingual) people. The process: read the text in French, understand it, and write it down in English.