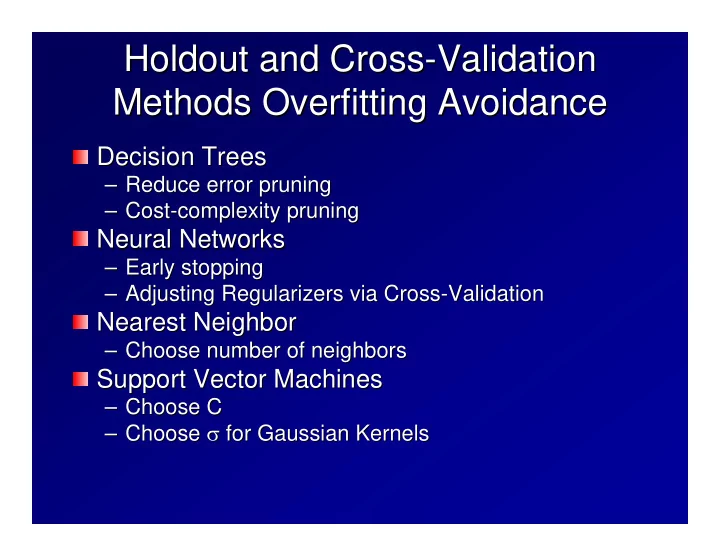

Holdout and Cross-Validation Methods

Overfitting Avoidance

- Decision Trees
  - Reduce error pruning
  - Cost-complexity pruning
- Neural Networks
  - Early stopping
  - Adjusting Regularizers via Cross-Validation
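
As a rough illustration of the last item, adjusting a regularizer via cross-validation, the sketch below picks a penalty strength by k-fold cross-validation. It is not taken from the slides: the model is a deliberately tiny 1-D ridge regression chosen so the example needs only the standard library, and every name in it (`fit_ridge_1d`, `cross_validate`, `select_lambda`, the candidate list) is a hypothetical helper introduced for illustration. The same loop would apply, under the same assumptions, to a neural network's weight-decay coefficient or a decision tree's cost-complexity parameter.

```python
import random


def fit_ridge_1d(xs, ys, lam):
    """Closed-form 1-D ridge regression without intercept (illustrative model).

    Minimizes sum((w*x - y)^2) + lam * w^2, which gives
    w = sum(x*y) / (sum(x*x) + lam).
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def mse(w, xs, ys):
    """Mean squared error of the prediction w*x against y."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def k_fold_indices(n, k, seed=0):
    """Shuffle the indices 0..n-1 and deal them into k disjoint folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def cross_validate(xs, ys, lam, k=5, seed=0):
    """Average held-out MSE over k folds for one regularizer value."""
    folds = k_fold_indices(len(xs), k, seed)
    scores = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in range(len(xs)) if i not in held]
        # Fit on the k-1 training folds only.
        w = fit_ridge_1d([xs[i] for i in train], [ys[i] for i in train], lam)
        # Score on the fold that was held out.
        scores.append(mse(w, [xs[i] for i in held_out], [ys[i] for i in held_out]))
    return sum(scores) / k


def select_lambda(xs, ys, candidates, k=5, seed=0):
    """Return the candidate with the lowest cross-validated error."""
    return min(candidates, key=lambda lam: cross_validate(xs, ys, lam, k, seed))


if __name__ == "__main__":
    rng = random.Random(1)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(40)]
    ys = [2.0 * x + rng.gauss(0.0, 0.3) for x in xs]  # synthetic data
    best = select_lambda(xs, ys, [0.0, 0.1, 1.0, 10.0, 100.0])
    print("selected lambda:", best)
```

Once a value has been selected, the usual practice is to refit on all of the training data with that value, since each cross-validation fit saw only a fraction of it.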