Advanced Natural Language Processing: Background and Overview

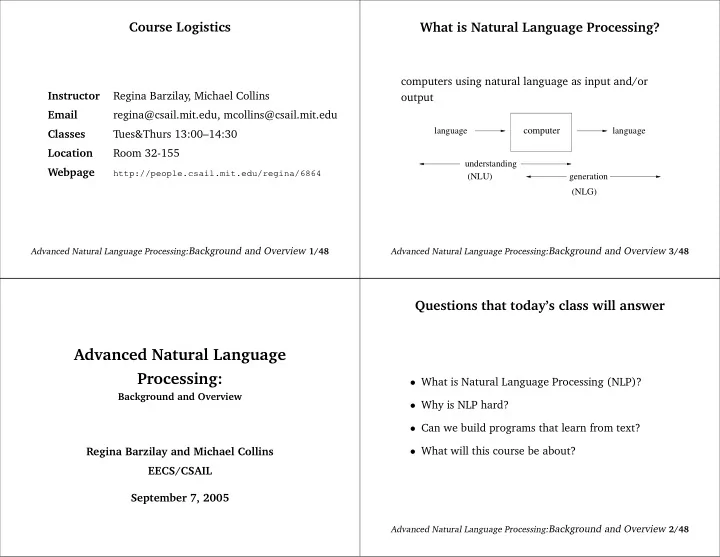

Course Logistics What is Natural Language Processing? computers using natural language as input and/or Instructor Regina Barzilay, Michael Collins output Email regina@csail.mit.edu, mcollins@csail.mit.edu computer language language

Regina Barzilay and Michael Collins
EECS/CSAIL
September 7, 2005

Course Logistics
Instructor: Regina Barzilay, Michael Collins
Email: regina@csail.mit.edu, mcollins@csail.mit.edu
Classes: Tues & Thurs 13:00–14:30
Location: Room 32-155
Webpage: http://people.csail.mit.edu/regina/6864

Questions that today's class will answer
• What is Natural Language Processing (NLP)?
• Why is NLP hard?
• Can we build programs that learn from text?
• What will this course be about?

What is Natural Language Processing?
Computers using natural language as input and/or output:
language → computer → language
Taking language in is understanding (NLU); producing language is generation (NLG).

Google Translation (example shown as an image on the original slide)

Information Extraction
Example input (job posting):
"10TH DEGREE is a full service advertising agency specializing in direct and interactive marketing. Located in Irvine CA, 10TH DEGREE is looking for an Assistant Account Manager to help manage and coordinate interactive marketing initiatives for a marquee automotive account. Experience in online marketing, automotive and/or the advertising field is a plus. Assistant Account Manager Responsibilities: Ensures smooth implementation of programs and initiatives; Helps manage the delivery of projects and key client deliverables . . . Compensation: $50,000-$80,000. Hiring Organization: 10TH DEGREE"

Extracted record:

| Field | Value |
|---|---|
| INDUSTRY | Advertising |
| POSITION | Assistant Account Manager |
| LOCATION | Irvine, CA |
| COMPANY | 10TH DEGREE |
| SALARY | $50,000-$80,000 |

Information Extraction
• Goal: Map a document collection to a structured database
• Motivation:
  – Complex searches ("Find me all the jobs in advertising paying at least $50,000 in Boston")
  – Statistical queries ("Does the number of jobs in accounting increase over the years?")

Transcript Segmentation (example shown as an image on the original slide)
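The information extraction slides pair a free-text posting with a structured record, and they justify the record by the searches it makes possible. The sketch below covers only that last step, assuming Python 3.9+. It pulls one field (the salary) out of the text with a hand-written regular expression and runs a "complex search" over the resulting record. `JobRecord`, `parse_salary`, `matches`, the regex, and the "Boston, MA" location string are all hypothetical. A single hand-written pattern like this is brittle and stands in for whatever extractor actually produces the record.

```python
import re
from dataclasses import dataclass

# Hypothetical pattern for a salary range such as "$50,000-$80,000".
SALARY_RE = re.compile(r"\$([\d,]+)\s*-\s*\$([\d,]+)")


@dataclass
class JobRecord:
    """One row of the target database; field names mirror the slide's extracted record."""
    industry: str
    position: str
    location: str
    company: str
    salary_min: int
    salary_max: int


def parse_salary(text: str) -> tuple[int, int]:
    """Extract the SALARY field from free text as (low, high) in dollars."""
    match = SALARY_RE.search(text)
    if match is None:
        raise ValueError(f"no salary range in {text!r}")
    low, high = (int(group.replace(",", "")) for group in match.groups())
    return low, high


def matches(record: JobRecord, industry: str, min_salary: int, location: str) -> bool:
    """A 'complex search' that is trivial once the text has become structured fields."""
    return (
        record.industry.lower() == industry.lower()
        and record.salary_min >= min_salary  # "paying at least": read here as the low end of the range
        and record.location == location
    )


low, high = parse_salary("Compensation: $50,000-$80,000")
job = JobRecord("Advertising", "Assistant Account Manager", "Irvine, CA", "10TH DEGREE", low, high)
print(matches(job, "advertising", 50_000, "Boston, MA"))  # False: this job is in Irvine, CA
print(matches(job, "advertising", 50_000, "Irvine, CA"))  # True
```

The statistical queries on the slide work the same way: once postings are rows, "jobs in accounting per year" is a count grouped by a date field rather than a reading task.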

Text Summarization (example shown as an image on the original slide)

Dialogue Systems
User: I need a flight from Boston to Washington, arriving by 10 pm.
System: What day are you flying on?
User: Tomorrow.
System: (returns a list of flights)

Why is NLP Hard? [example from L. Lee]
"At last, a computer that understands you like your mother"

Ambiguity
"At last, a computer that understands you like your mother"
1. (*) It understands you as well as your mother understands you
2. It understands (that) you like your mother
3. It understands you as well as it understands your mother
Readings 1 and 3: does this mean well, or poorly?
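In the dialogue slide, the system asks for the one piece of information the user left out. A common way to get that behavior, assumed here rather than taken from the slides, is a frame of slots:

- the understanding step fills slots from the user's words;
- the system then asks about the first slot that is still empty.

The slot names, questions, and keyword patterns below are hypothetical. They are far cruder than a real understanding component.

```python
import re

# Hypothetical required slots for a flight-booking frame, each with the question that fills it.
QUESTIONS = {
    "origin": "Where are you flying from?",
    "destination": "Where are you flying to?",
    "date": "What day are you flying on?",
}


def update_frame(frame: dict, utterance: str) -> dict:
    """Toy understanding step: fill slots using keyword patterns."""
    frame = dict(frame)  # do not mutate the caller's frame
    route = re.search(r"from (\w+) to (\w+)", utterance)
    if route:
        frame["origin"], frame["destination"] = route.groups()
    arrival = re.search(r"arriving by (\d{1,2} ?[ap]m)", utterance)
    if arrival:
        frame["arrive_by"] = arrival.group(1)
    for day in ("today", "tomorrow"):
        if day in utterance.lower():
            frame["date"] = day
    return frame


def next_action(frame: dict) -> str:
    """Toy dialogue policy: ask for the first missing slot, otherwise run the search."""
    for slot, question in QUESTIONS.items():
        if slot not in frame:
            return question
    arrive_by = frame.get("arrive_by", "any time")
    return f"SEARCH {frame['origin']} -> {frame['destination']}, {frame['date']}, arrive by {arrive_by}"


frame = update_frame({}, "I need a flight from Boston to Washington, arriving by 10 pm.")
print(next_action(frame))  # What day are you flying on?
frame = update_frame(frame, "Tomorrow")
print(next_action(frame))  # SEARCH Boston -> Washington, tomorrow, arrive by 10 pm
```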

Ambiguity at Many Levels
At the acoustic level (speech recognition):
1. ". . . a computer that understands you like your mother"
2. ". . . a computer that understands you lie cured mother"

Ambiguity at Many Levels
At the syntactic level, two parse trees:
• [VP [V understands] [NP you] [S like your mother [does]]]
• [VP [V understands] [S [that] you like your mother]]
Different structures lead to different interpretations.

More Syntactic Ambiguity
• [VP [V list] [NP [DET all] [N [N flights] [PP on Tuesday]]]]: the flights are on Tuesday
• [VP [V list] [NP all flights] [PP on Tuesday]]: the listing happens on Tuesday

Ambiguity at Many Levels
At the semantic (meaning) level, two definitions of "mother":
• a woman who has given birth to a child
• a stringy slimy substance consisting of yeast cells and bacteria; it is added to cider or wine to produce vinegar
This is an instance of word sense ambiguity.
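The sketch below makes the "list all flights on Tuesday" attachment ambiguity concrete. It encodes the two trees from the "More Syntactic Ambiguity" slide as a tiny grammar in Chomsky normal form and runs CKY, keeping every tree in each chart cell. The rule set is an assumption:

- the slide's ternary VP → V NP PP is binarized as VP → VP PP;
- "Tuesday" is treated as a one-word NP.

With both PP rules present, the grammar licenses exactly two parses of the phrase, one per attachment site.

```python
from collections import defaultdict
from itertools import product

# Toy CNF grammar read off the slide's two trees (the rules themselves are an assumption).
BINARY_RULES = [
    ("VP", "V", "NP"),
    ("VP", "VP", "PP"),  # verb attachment: list [all flights] [on Tuesday]
    ("NP", "DET", "N"),
    ("N", "N", "PP"),    # noun attachment: list [all [flights on Tuesday]]
    ("PP", "P", "NP"),
]
LEXICON = {"list": ["V"], "all": ["DET"], "flights": ["N"], "on": ["P"], "Tuesday": ["NP"]}


def cky_parses(words: list[str], goal: str) -> list[str]:
    """CKY parsing that keeps every bracketed tree for each (start, end, label) cell."""
    n = len(words)
    chart = defaultdict(list)
    for i, word in enumerate(words):
        for tag in LEXICON.get(word, []):
            chart[i, i + 1, tag].append(f"[{tag} {word}]")
    for length in range(2, n + 1):
        for start in range(n - length + 1):
            end = start + length
            for split in range(start + 1, end):
                for parent, left, right in BINARY_RULES:
                    for l_tree, r_tree in product(chart[start, split, left], chart[split, end, right]):
                        chart[start, end, parent].append(f"[{parent} {l_tree} {r_tree}]")
    return chart[0, n, goal]


for tree in cky_parses("list all flights on Tuesday".split(), "VP"):
    print(tree)
```

Dropping either PP rule leaves a single parse. The ambiguity lives in the grammar's choice of attachment sites, not in any one word.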

More Word Sense Ambiguity
At the semantic (meaning) level:
• They put money in the bank (= buried in mud?)
• I saw her duck with a telescope

Ambiguity at Many Levels
At the discourse (multi-clause) level:
• Alice says they've built a computer that understands you like your mother
• But she
  . . . doesn't know any details
  . . . doesn't understand me at all
This is an instance of anaphora, where "she" co-refers to some other discourse entity.

Knowledge Bottleneck in NLP
We need:
• Knowledge about language
• Knowledge about the world
Possible solutions:
• Symbolic approach: Encode all the required information into the computer
• Statistical approach: Infer language properties from language samples

Case study: Determiner Placement
Task: Automatically place determiners (a, the, null) in a text.
Input text, with its determiners removed:
Scientists in United States have found way of turning lazy monkeys into workaholics using gene therapy. Usually monkeys work hard only when they know reward is coming, but animals given this treatment did their best all time. Researchers at National Institute of Mental Health near Washington DC, led by Dr Barry Richmond, have now developed genetic treatment which changes their work ethic markedly. "Monkeys under influence of treatment don't procrastinate," Dr Richmond says. Treatment consists of anti-sense DNA - mirror image of piece of one of our genes - and basically prevents that gene from working. But for rest of us, day when such treatments fall into hands of our bosses may be one we would prefer to put off.
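The case-study passage is what ordinary newspaper text looks like once its determiners are deleted. This suggests one way to produce labeled data for the task, though the slides do not say how their data was prepared. The sketch below strips a/an/the from text and records which determiner, if any, preceded each remaining word. Two simplifying assumptions apply: the tokenizer regex is a placeholder, and "an" is folded into "a".

```python
import re

# Assumption: "an" is folded into "a", matching the slide's label set {a, the, null}.
DETERMINERS = {"a": "a", "an": "a", "the": "the"}


def strip_determiners(text: str) -> tuple[str, list[tuple[str, str]]]:
    """Turn ordinary text into a determiner-placement example.

    Returns the text with a/an/the removed (the task input) and, for each remaining word,
    the determiner that preceded it ("the", "a", or "null"). Every word is treated as a
    candidate slot; restricting labels to nouns, as the slides do, would need a POS tagger.
    """
    tokens = re.findall(r"\w+(?:'\w+)?|[^\w\s]", text)
    kept, labels, pending = [], [], "null"
    for token in tokens:
        if token.lower() in DETERMINERS:
            pending = DETERMINERS[token.lower()]
            continue
        kept.append(token)
        if token[0].isalnum():  # punctuation is kept in the text but gets no label
            labels.append((token, pending))
        pending = "null"
    return " ".join(kept), labels


stripped, labels = strip_determiners("The monkeys found a reward.")
print(stripped)  # monkeys found reward .
print(labels)    # [('monkeys', 'the'), ('found', 'null'), ('reward', 'a')]
```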

Relevant Grammar Rules
• Determiner placement is largely determined by:
  – Type of noun (countable, uncountable)
  – Reference (specific, generic)
  – Information value (given, new)
  – Number (singular, plural)
• However, many exceptions and special cases play a role:
  – The definite article is used with newspaper titles (The Times), but the zero article is used in names of magazines and journals (Time)

Symbolic Approach: Determiner Placement
What categories of knowledge do we need?
• Linguistic knowledge:
  – Static knowledge: number, countability, . . .
  – Context-dependent knowledge: co-reference, . . .
• World knowledge:
  – Uniqueness of reference (the current president of the US), type of noun (newspaper vs. magazine), situational associativity between nouns (the score of the football game), . . .
Hard to manually encode this information!

Statistical Approach: Determiner Placement
Naive approach:
• Collect a large collection of texts relevant to your domain (e.g., newspaper text)
• For each noun seen during training, compute its probability of taking a certain determiner (assuming freq(noun) > 0):
$$p(\text{determiner} \mid \text{noun}) = \frac{\text{freq}(\text{noun}, \text{determiner})}{\text{freq}(\text{noun})}$$
• Given a new noun, select the determiner with the highest likelihood as estimated on the training corpus

Does it work?
• Implementation:
  – Corpus: training on the first 21 sections of the Wall Street Journal (WSJ) corpus, testing on the 23rd section
  – Prediction accuracy: 71.5%
• The results are not great, but surprisingly high for such a simple method
  – A large fraction of nouns in this corpus always appear with the same determiner: "the FBI", "the defendant", . . .
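The naive statistical approach fits in a few lines:

- count how often each noun appears with each determiner;
- divide by the noun's frequency;
- predict the most likely determiner.

Two parts of the sketch below are added assumptions. First, the slide's formula is only defined when freq(noun) > 0, so unseen nouns here back off to the overall most common determiner. Second, the training and test pairs are invented toy data, unrelated to the 71.5% WSJ result on the slide.

```python
from collections import Counter

# Hypothetical toy data: (noun, determiner) pairs standing in for a newspaper corpus.
TRAIN = [("FBI", "the"), ("FBI", "the"), ("defendant", "the"), ("defendant", "the"),
         ("defendant", "a"), ("cars", "null"), ("concert", "a"), ("concert", "the"), ("concert", "a")]
TEST = [("defendant", "the"), ("concert", "a"), ("cars", "null"), ("monkeys", "null")]


def train(pairs):
    """Count freq(noun, determiner) per noun, plus overall determiner counts for backoff."""
    by_noun: dict[str, Counter] = {}
    overall: Counter = Counter()
    for noun, det in pairs:
        by_noun.setdefault(noun, Counter())[det] += 1
        overall[det] += 1
    return by_noun, overall


def p_det_given_noun(by_noun, det, noun):
    """p(det | noun) = freq(noun, det) / freq(noun); only defined when freq(noun) > 0."""
    counts = by_noun[noun]  # raises KeyError for unseen nouns, mirroring the slide's assumption
    return counts[det] / sum(counts.values())


def predict(by_noun, overall, noun):
    """Most likely determiner; unseen nouns back off to the overall majority (our choice)."""
    counts = by_noun.get(noun, overall)
    return counts.most_common(1)[0][0]


def accuracy(by_noun, overall, pairs):
    """Fraction of (noun, gold determiner) pairs predicted correctly."""
    return sum(predict(by_noun, overall, n) == d for n, d in pairs) / len(pairs)


by_noun, overall = train(TRAIN)
print(round(p_det_given_noun(by_noun, "the", "defendant"), 3))  # 0.667
print(accuracy(by_noun, overall, TEST))  # 0.75: the unseen noun "monkeys" gets "the"
```

As the "Does it work?" slide suggests, this method does well on nouns whose determiner rarely varies. It has nothing noun-specific to offer for nouns it never saw.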

Determiner Placement as Classification
• Prediction: "the", "a", "null"
• Representation of the problem:
  – plural? (yes, no)
  – first appearance in text? (yes, no)
  – noun (members of the vocabulary set)

| Noun | plural? | first appearance | determiner |
|---|---|---|---|
| defendant | no | yes | the |
| cars | yes | no | null |
| FBI | no | no | the |
| concert | no | yes | a |

Goal: Learn a classification function that can predict unseen examples

Classification Approach
• Learn a function from X → Y (in the previous example, Y = {"the", "a", null})
• Assume there is some distribution D(x, y), where x ∈ X and y ∈ Y. Our training sample is drawn from D(x, y).
• Attempt to explicitly model the distribution D(X, Y) and D(X | Y)

Basic NLP Problem: Tagging
Task: Label each word in a sentence with its appropriate part of speech (POS)
Time/Noun flies/Verb like/Preposition an/Determiner arrow/Noun

| Word | Noun | Verb | Preposition |
|---|---|---|---|
| flies | 21 | 23 | 0 |
| like | 10 | 30 | 21 |

Basic NLP Problem: Tagging
• Naive solution: for each word, determine its tag independently
• Desired alternative: take into account dependencies among different predictions
  – Classification is suboptimal
  – We will model tagging as a mapping from a string to a tagged sequence
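The sketch below uses naive Bayes as one concrete way to "explicitly model the distribution D(X, Y)". It is swapped in as an illustration, not taken from the slides. It factors D(X, Y) = D(Y) · D(X | Y) and assumes the features are independent given the label. It trains on the four rows of the determiner table, with add-one smoothing that reserves one extra value per feature for values never seen in training (an assumption). Four rows are far too few for real use; the point is the shape of the computation.

```python
import math
from collections import Counter, defaultdict

FEATURES = ("noun", "plural", "first_appearance")

# The four rows of the "Determiner Placement as Classification" table.
TRAIN = [
    ({"noun": "defendant", "plural": "no", "first_appearance": "yes"}, "the"),
    ({"noun": "cars", "plural": "yes", "first_appearance": "no"}, "null"),
    ({"noun": "FBI", "plural": "no", "first_appearance": "no"}, "the"),
    ({"noun": "concert", "plural": "no", "first_appearance": "yes"}, "a"),
]


def train(examples):
    """Estimate D(Y) and each per-feature D(x_f | Y) by counting."""
    label_counts = Counter(label for _, label in examples)
    value_counts = defaultdict(Counter)  # (feature, label) -> counts of feature values
    seen_values = defaultdict(set)       # feature -> values observed in training
    for x, label in examples:
        for f in FEATURES:
            value_counts[f, label][x[f]] += 1
            seen_values[f].add(x[f])
    return label_counts, value_counts, seen_values


def predict(model, x):
    """Return argmax_y of log D(y) + sum_f log D(x_f | y), with add-one smoothing."""
    label_counts, value_counts, seen_values = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, n_label in label_counts.items():
        score = math.log(n_label / total)
        for f in FEATURES:
            k = len(seen_values[f]) + 1  # +1 slot for a value never seen in training
            score += math.log((value_counts[f, label][x[f]] + 1) / (n_label + k))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


model = train(TRAIN)
print(predict(model, {"noun": "magazine", "plural": "no", "first_appearance": "yes"}))  # the
```

For an unseen noun such as "magazine", the noun feature carries little information. The prediction is then driven mostly by the label prior and the plural and first-appearance features.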
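The naive tagging solution chooses each word's tag independently. It can be run directly on the slide's table if its numbers are read as corpus counts of each word with each tag; the slide leaves them unlabeled, so that reading is an assumption. The counts for "Time", "an", and "arrow" are hypothetical placeholders so that the whole example sentence can be tagged.

```python
# The slide's table, read (as an assumption) as counts of each word with each tag.
SLIDE_COUNTS = {
    "flies": {"Noun": 21, "Verb": 23, "Preposition": 0},
    "like": {"Noun": 10, "Verb": 30, "Preposition": 21},
}
# Hypothetical entries for the remaining words of the example sentence.
EXTRA_COUNTS = {"time": {"Noun": 1}, "an": {"Determiner": 1}, "arrow": {"Noun": 1}}


def naive_tag(sentence: str) -> list[tuple[str, str]]:
    """Tag each word independently with its most frequent tag, ignoring its neighbors."""
    counts = {**SLIDE_COUNTS, **EXTRA_COUNTS}
    tagged = []
    for word in sentence.split():
        tag_counts = counts[word.lower()]
        tagged.append((word, max(tag_counts, key=tag_counts.get)))
    return tagged


GOLD = ["Noun", "Verb", "Preposition", "Determiner", "Noun"]
tagged = naive_tag("Time flies like an arrow")
print(" ".join(f"{w}/{t}" for w, t in tagged))  # Time/Noun flies/Verb like/Verb an/Determiner arrow/Noun
print(sum(t == g for (_, t), g in zip(tagged, GOLD)), "of", len(GOLD))  # 4 of 5
```

The baseline tags "like" as a verb because 30 > 21. Yet the verb "flies" right before it makes a preposition far more plausible. That dependency between neighboring tags is what treating tagging as a mapping from a string to a tagged sequence is meant to capture.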
